The paper introduces 2K Retrofit, a model-agnostic sparse refinement framework that enables existing 3D foundation models (e.g., Depth Anything, VGGT) to perform 2K-resolution geometric prediction. It achieves state-of-the-art accuracy in depth and pointmap estimation while being significantly more efficient than dense inference, reaching 8.1 FPS for 2K depth.

TL;DR

High-resolution 3D reconstruction is crucial for autonomous driving and AR, but 2K-resolution dense inference is a memory nightmare. 2K Retrofit offers a "plug-and-play" solution: it freezes your favorite 3D foundation model (like Depth Anything v2 or VGGT), identifies high-error regions using an entropy-based selector, and refines only those sparse pixels. The result? 2K-level geometric precision at 8.1 FPS, with zero backbone retraining.

Background: The Resolution Wall

While 3D foundation models have revolutionized zero-shot depth estimation, they are often "trapped" in low-resolution training regimes (typically sub-1K). Moving to 2K resolution usually implies a quadratic explosion in FLOPs and memory. Previous attempts—such as tile-based processing—often result in inconsistent "seams" or redundant computation on flat, low-frequency surfaces (like walls) where high resolution adds little value.
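To make the "quadratic explosion" concrete, here is a back-of-the-envelope sketch. It assumes a ViT-style backbone with 14-pixel patches and square inputs of 518 and 2072 pixels per side; these numbers are illustrative choices, not figures taken from the paper, and `vit_token_count` is a hypothetical helper.

```python
def vit_token_count(height: int, width: int, patch: int = 14) -> int:
    """Number of patch tokens a ViT sees for an image of the given size."""
    return (height // patch) * (width // patch)


# Assumed resolutions (multiples of the assumed 14-pixel patch size).
low_res_tokens = vit_token_count(518, 518)      # 37 * 37 = 1369
high_res_tokens = vit_token_count(2072, 2072)   # 148 * 148 = 21904

# 4x the side length means 16x the tokens ...
token_ratio = high_res_tokens / low_res_tokens  # 16.0

# ... and global self-attention compares every token with every other token,
# so its cost grows with the square of the token count.
attention_ratio = token_ratio ** 2              # 256.0

print(f"tokens: {low_res_tokens} -> {high_res_tokens} ({token_ratio:.0f}x)")
print(f"pairwise attention cost: {attention_ratio:.0f}x")
```

Under these assumptions, quadrupling the side length costs 16x in tokens and roughly 256x in attention, which is why naive dense inference at 2K is so expensive.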

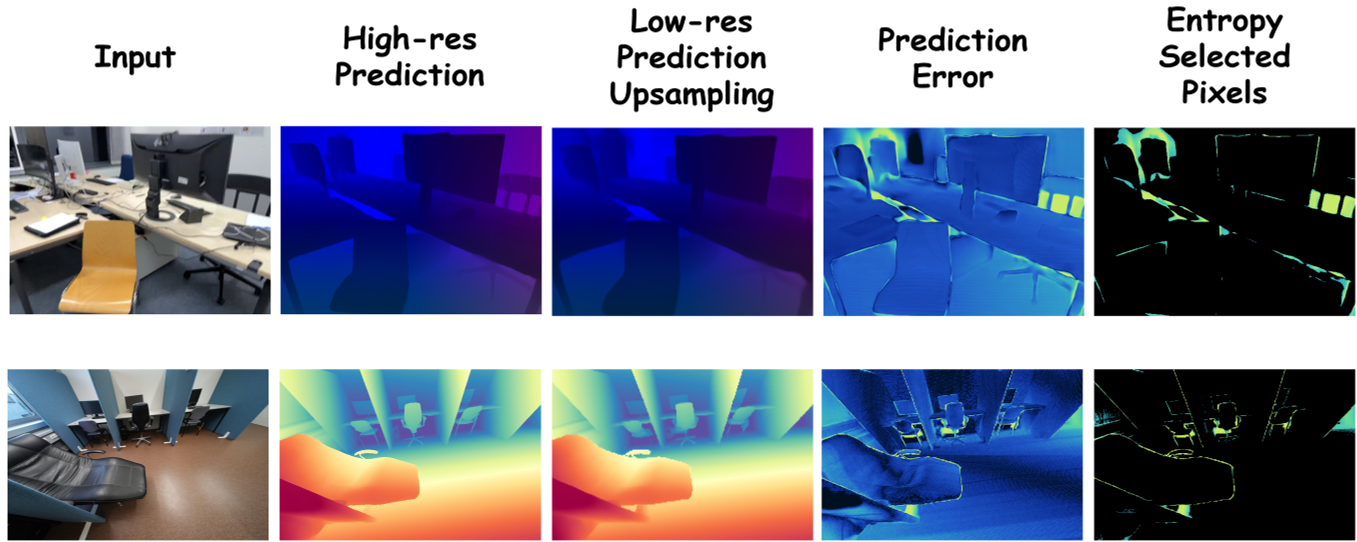

The Core Insight: Error Sparsity

The authors observe that the delta between a low-resolution "coarse" prediction and a true 2K "fine" prediction isn't uniform. Errors are concentrated at semantic boundaries and thin structures (e.g., cables, handles). By focusing computation only on these "uncertain" regions, we can bypass the "Resolution Wall."

Methodology: The Sparse Pipeline

The 2K Retrofit architecture consists of a three-stage refinement loop:

- Coarse Initialization: The 2K image is downsampled, processed by a frozen foundation model (F), and upsampled via nearest-neighbor interpolation to create a base geometric map.

- Entropy-Based Selection: Instead of a complex learnable selector, the authors use the entropy of the backbone's head features. High entropy correlates strongly with geometric ambiguity (boundaries, thin structures). This selector identifies the top ~10% of pixels that need help.

- Sparse Refinement & Gated Fusion: A MinkowskiUNet (designed for sparse data) processes these pixels. Finally, a gated mechanism decides whether to trust the global coarse prediction or the local refined one.
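The selection and fusion steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation. It assumes entropy is computed from a softmax over feature channels, which the summary does not specify, and that the gate is a per-pixel weight in [0, 1]. All function names are hypothetical.

```python
import numpy as np


def channel_entropy(features: np.ndarray) -> np.ndarray:
    """Per-pixel Shannon entropy of softmax-normalized channels.

    features: (C, H, W) array of head features. Returns an (H, W) array.
    Assumption: softmax over channels stands in for the paper's entropy.
    """
    shifted = features - features.max(axis=0, keepdims=True)  # stable softmax
    probs = np.exp(shifted)
    probs /= probs.sum(axis=0, keepdims=True)
    return -(probs * np.log(probs + 1e-12)).sum(axis=0)


def select_top_fraction(entropy: np.ndarray, fraction: float = 0.10) -> np.ndarray:
    """Boolean (H, W) mask marking the `fraction` highest-entropy pixels."""
    k = max(1, int(round(fraction * entropy.size)))
    flat = entropy.ravel()
    top_idx = np.argpartition(flat, -k)[-k:]  # indices of the k largest values
    mask = np.zeros(flat.shape, dtype=bool)
    mask[top_idx] = True
    return mask.reshape(entropy.shape)


def gated_fusion(coarse, refined, gate, mask):
    """Blend refined values into the coarse map, only at selected pixels."""
    fused = coarse.copy()
    fused[mask] = gate[mask] * refined[mask] + (1.0 - gate[mask]) * coarse[mask]
    return fused


# Toy usage with random features and constant maps.
rng = np.random.default_rng(0)
features = rng.normal(size=(8, 20, 20))
entropy = channel_entropy(features)
mask = select_top_fraction(entropy, fraction=0.10)

coarse = np.zeros((20, 20))
refined = np.ones((20, 20))
gate = np.full((20, 20), 0.75)
fused = gated_fusion(coarse, refined, gate, mask)
```

In the real pipeline the refined values and the gate would come from the sparse refinement network. Here they are constants so that the effect of the mask is easy to inspect.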

Fig 2: The 2K Retrofit pipeline, showing the transition from coarse global estimates to sparse, high-fidelity refinement.

Experimental Triumphs

The researchers tested 2K Retrofit across monocular depth (ARKitScenes, ScanNet++) and multi-view pointmap tasks (ETH3D).

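- Efficiency: Compared to retraining a backbone like VGGT at 2K, 2K Retrofit is reported to provide a 17x speedup while reducing GFLOPs from 495 to 172. The FLOP reduction alone is about 2.9x (495 / 172), so the 17x figure presumably refers to measured runtime rather than operation count.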

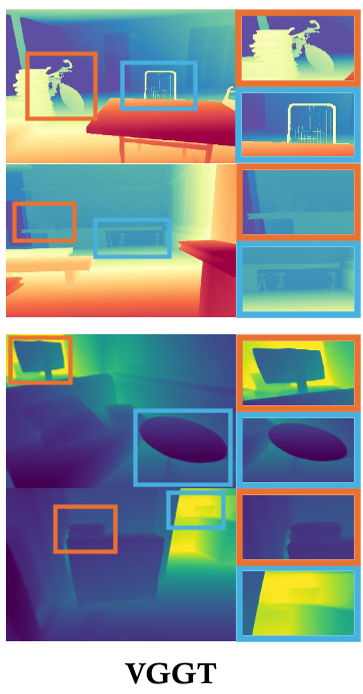

- Accuracy: It consistently outperforms "Patch-based" SOTA like PatchRefiner and PRO, particularly in maintaining global consistency while capturing razor-sharp edges.

Fig 3: Qualitative comparison on ETH3D. Note how 2K Retrofit (right) recovers fine structures like chair legs and thin poles that foundation models often blur (middle).

Critical Analysis: Why It Works

The "magic" lies in the Entropy Selector. By deriving uncertainty from the latent features before the final regression, the model taps into the backbone's internal doubt. Running the refinement branch on sparse convolutions (the MinkowskiUNet mentioned above) is a smart move: it treats the selected high-resolution pixels like a sparse 3D point cloud, so it does far less work than a standard dense CNN applied to the full 2K image.
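To illustrate what "treating pixels like a sparse point cloud" means in practice, the sketch below converts a selection mask into the coordinate-plus-feature layout that sparse convolution libraries typically consume, then scatters per-pixel results back into a dense map. It uses plain NumPy only. The `[batch, row, col]` coordinate layout and both helper names are assumptions for illustration, not the paper's code.

```python
import numpy as np


def to_sparse(image: np.ndarray, mask: np.ndarray, batch_index: int = 0):
    """Gather selected pixels as (coords, feats).

    image: (C, H, W) array, mask: (H, W) boolean array.
    Returns coords of shape (N, 3) as [batch, row, col] and feats of shape (N, C).
    """
    rows, cols = np.nonzero(mask)
    batch = np.full_like(rows, batch_index)
    coords = np.stack([batch, rows, cols], axis=1).astype(np.int32)
    feats = image[:, rows, cols].T  # (C, N) -> (N, C)
    return coords, feats


def scatter_back(dense: np.ndarray, coords: np.ndarray, values: np.ndarray) -> np.ndarray:
    """Write per-pixel values (N,) into a copy of the dense (H, W) map."""
    out = dense.copy()
    out[coords[:, 1], coords[:, 2]] = values
    return out


# Toy usage: 3 selected pixels out of a 4x4 RGB image.
image = np.arange(3 * 4 * 4, dtype=np.float32).reshape(3, 4, 4)
mask = np.zeros((4, 4), dtype=bool)
mask[0, 1] = mask[2, 3] = mask[3, 0] = True

coords, feats = to_sparse(image, mask)
# Stand-in for the refinement network: average the channels per pixel.
refined_values = feats.mean(axis=1)
depth = scatter_back(np.zeros((4, 4), dtype=np.float32), coords, refined_values)
```

The refinement network only ever sees the N selected rows, so its cost scales with the number of selected pixels rather than with the full image area.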

Limitations: The model still faces challenges in textureless or highly reflective regions (e.g., glass, mirrors) where neither the coarse nor the refined features provide sufficient geometric cues.

Conclusion & Future Impact

2K Retrofit is a significant step toward making high-resolution 3D vision practical for real-time edge deployment. By treating high resolution as a sparse correction problem rather than a dense reconstruction problem, it could pave the way for 4K or even 8K geometric perception.

Keywords: High-Resolution Depth, Sparse Refinement, 3D Foundation Models, Minkowski Convolutions, Autonomous Driving.