This paper introduces the "Chain-of-Steps" (CoS) mechanism: in diffusion-based video models, reasoning emerges primarily along the denoising steps rather than sequentially across frames. Analyzing the VBVR-Wan2.2 model, the authors show that the model explores multiple hypotheses in early steps before converging on a final solution, and that a training-free latent ensemble strategy raises the overall VBVR-Bench score by 3.1 points (68.5% to 71.6%).

TL;DR

Contrary to the popular belief that video models reason frame by frame (Chain-of-Frames), this study argues that reasoning actually unfolds along the diffusion denoising steps (Chain-of-Steps). By visualizing the "clean" latent estimate at each step, the researchers found that the model explores multiple candidate solutions in parallel before pruning them. A simple, training-free latent ensemble applied early in denoising boosts the overall benchmark score by about 3 points, which suggests that the denoising trajectory is where the model's reasoning takes place.

The Paradigm Shift: Steps vs. Frames

A common assumption has been that if a video model generates a sequence where a ball hits a target, the "reasoning" happens as the model moves from Frame 1 to Frame 100. This is the Chain-of-Frames (CoF) hypothesis.

However, the paper argues otherwise: because Video Diffusion Transformers (DiTs) use bidirectional attention, they "see" the entire timeline at every denoising step. The reasoning isn't temporal; it's iterative. The authors call this Chain-of-Steps (CoS).

Figure 1: In a maze-solving task, the model explores multiple paths simultaneously in early steps (ghostly traces) before "pruning" the incorrect ones in later steps.

Methodology: How Video Models "Think"

The researchers investigated the estimated clean latent ($\hat{x}_0$) at each denoising step $t$. They discovered two fascinating behaviors:

- Multi-Path Exploration: In early denoising, the model effectively performs a Breadth-First Search (BFS). For example, in a Tic-Tac-Toe prompt, it might "hallucinate" an 'O' in several winning spots at once before committing to one.

- Superposition-based Exploration: The model maintains mutually exclusive logical states (like a circle being both medium and large) until the noise level drops enough to force a collapse into a single consistent reality.
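
To make the "estimated clean latent" concrete, here is a minimal sketch of how such an estimate can be read off at any step. The article does not state the model's parameterization, so this assumes a rectified-flow convention in which the noisy latent is `x_t = (1 - sigma) * x0 + sigma * noise` and the network predicts the velocity `v = noise - x0`. The function name and the toy vectors are hypothetical.

```python
import random

def estimate_clean_latent(x_t, v_pred, sigma):
    """Estimate x0 from a noisy latent and a predicted velocity.

    Assumed convention (not confirmed by the article):
        x_t = (1 - sigma) * x0 + sigma * noise
        v   = noise - x0
    which rearranges to x0 = x_t - sigma * v.
    """
    return [xt - sigma * v for xt, v in zip(x_t, v_pred)]

# Toy check: with a perfect velocity prediction the estimate recovers x0.
rng = random.Random(0)
x0 = [rng.gauss(0, 1) for _ in range(8)]
noise = [rng.gauss(0, 1) for _ in range(8)]
sigma = 0.7  # high noise level, i.e. an early denoising step

x_t = [(1 - sigma) * a + sigma * n for a, n in zip(x0, noise)]
v_true = [n - a for a, n in zip(x0, noise)]

x0_hat = estimate_clean_latent(x_t, v_true, sigma)
max_err = max(abs(a - b) for a, b in zip(x0, x0_hat))
```

In a real model the velocity prediction is imperfect at high noise levels, so the estimate at early steps is blurry; that blur is where the overlapping "ghostly" candidate paths would show up once the estimate is decoded to pixels.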

The "Aha!" Moment: Noise Perturbation

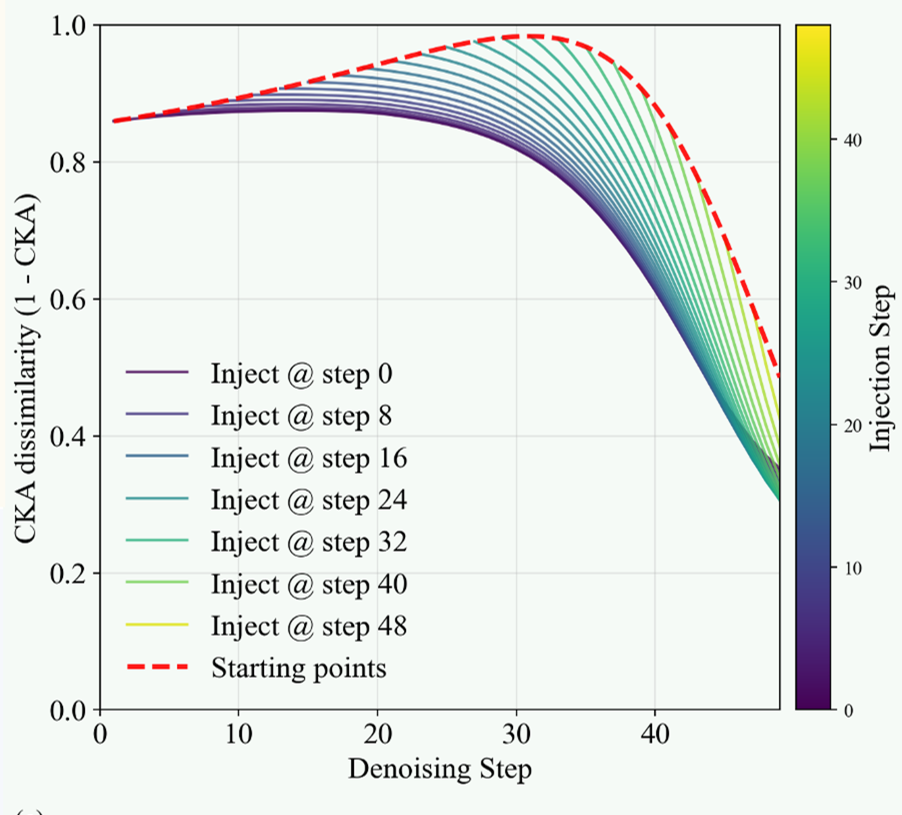

To prove that steps matter more than frames, the team conducted a "stress test." If you inject noise into a single frame across all steps, the model recovers easily. But if you inject noise into a single step across all frames, the reasoning completely collapses. This confirms that the "logical backbone" of the video is built during the denoising process, not the temporal sequence.

Figure 2: Performance collapses when diffusion steps are disrupted, whereas the model is surprisingly robust to frame-wise corruption.
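
The difference between the two perturbation modes is easiest to see as bookkeeping over a (step, frame) grid. The sketch below is only that bookkeeping, not the authors' protocol: the latent layout (a list of frames, each a short list of floats), the noise scale, and the function names are all hypothetical.

```python
import random

def perturb(latent, step, mode, target, scale, rng):
    """Return a copy of `latent` with Gaussian noise added to selected frames.

    mode="frame": corrupt only frame `target`, at every denoising step.
    mode="step":  corrupt every frame, but only when step == target.
    """
    out = [frame[:] for frame in latent]
    for f, frame in enumerate(out):
        hit = (mode == "frame" and f == target) or (mode == "step" and step == target)
        if hit:
            out[f] = [x + scale * rng.gauss(0, 1) for x in frame]
    return out

def touched_cells(mode, target, num_steps=5, num_frames=4):
    """List the (step, frame) cells that a perturbation mode modifies."""
    rng = random.Random(0)
    clean = [[0.0] * 3 for _ in range(num_frames)]
    cells = []
    for step in range(num_steps):
        noisy = perturb(clean, step, mode, target, 1.0, rng)
        for f in range(num_frames):
            if noisy[f] != clean[f]:
                cells.append((step, f))
    return cells

frame_cells = touched_cells("frame", target=2)  # one column of the grid
step_cells = touched_cells("step", target=2)    # one row of the grid
```

Frame-wise corruption touches one frame at every step, so the untouched frames can still carry the solution through bidirectional attention. Step-wise corruption hits every frame at once, leaving nothing clean to recover from at that step.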

Layer-wise Mechanics: Perception Before Action

Inside the 40-layer Diffusion Transformer, the authors found an emergent division of labor:

- Layers 0-9 (The Observers): Focus on dense perceptual structure—background vs. foreground.

- Layers 20-29 (The Logicians): This is where the "reasoning" happens. Swapping latents in these layers can completely change the model's decision (e.g., changing which object it detects).

- Layers 30-39 (The Refiners): These layers polish the visual textures for the final output.
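
The latent-swapping experiment on layers 20-29 can be sketched as a simple patching loop. Everything here is a toy stand-in: `layer` is a hypothetical block with two stable outcomes (positive or negative), standing in for two different "decisions", and the real model has residual connections and conditioning that this chain does not.

```python
import math

NUM_LAYERS = 40
REASONING_LAYERS = range(20, 30)  # the band the article calls "The Logicians"

def layer(i, h):
    # Hypothetical stand-in for transformer block i: pushes each value
    # toward a positive or a negative fixed point depending on its sign.
    return [1.5 * math.tanh(x) for x in h]

def forward(h, record=None, patch=None, patch_layers=range(0)):
    """Run the layer stack, optionally recording or overwriting hidden states."""
    for i in range(NUM_LAYERS):
        h = layer(i, h)
        if patch is not None and i in patch_layers:
            h = patch[i][:]          # swap in the donor run's hidden state
        if record is not None:
            record[i] = h[:]
    return h

input_a = [0.8, 0.5]     # toy run that settles on the "positive" decision
input_b = [-0.8, -0.5]   # toy run that settles on the "negative" decision

donor = {}
out_b = forward(input_b, record=donor)
out_a = forward(input_a)
patched = forward(input_a, patch=donor, patch_layers=REASONING_LAYERS)
```

In this toy chain the patched run reproduces the donor's output exactly, because nothing from the original input survives past the swapped layers. In a real DiT the effect would be partial, which is why a changed decision after swapping only this band is informative.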

Improving Performance: Training-Free Ensemble

Armed with the insight that early steps explore multiple paths, the authors propose a proof-of-concept: run three parallel trajectories with different random seeds and average their latents in the middle layers (20-29) during the first denoising step, effectively creating an "Expert Jury."
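
A rough sketch of the ensemble idea follows, under several assumptions the article does not settle: `block` is a hypothetical stand-in for a transformer block, the update rule is a toy interpolation rather than a real sampler, and the sketch returns all three trajectories because the article does not say which one becomes the final output.

```python
import math
import random

NUM_LAYERS = 40
ENSEMBLE_LAYERS = range(20, 30)

def block(i, h):
    # Hypothetical stand-in for transformer block i.
    return [0.9 * x + 0.1 * math.sin(x + i) for x in h]

def mean_across(states):
    """Element-wise mean of several equally sized hidden states."""
    n = len(states)
    return [sum(vals) / n for vals in zip(*states)]

def denoiser_forward(latents, ensemble):
    """Run all trajectories through the stack in lockstep.

    If `ensemble` is True, hidden states inside ENSEMBLE_LAYERS are
    replaced by their mean across trajectories.
    """
    hs = [h[:] for h in latents]
    for i in range(NUM_LAYERS):
        hs = [block(i, h) for h in hs]
        if ensemble and i in ENSEMBLE_LAYERS:
            avg = mean_across(hs)
            hs = [avg[:] for _ in hs]
    return hs

def init_latent(seed, dim=6):
    rng = random.Random(seed)
    return [rng.gauss(0, 1) for _ in range(dim)]

def sample(seeds, num_steps=4):
    latents = [init_latent(s) for s in seeds]
    for step in range(num_steps):
        # Ensemble only during the first denoising step.
        preds = denoiser_forward(latents, ensemble=(step == 0))
        # Toy update: move each latent halfway toward its prediction.
        latents = [[x + 0.5 * (p - x) for x, p in zip(lat, pred)]
                   for lat, pred in zip(latents, preds)]
    return latents

seeds = [0, 1, 2]
init = [init_latent(s) for s in seeds]
independent = denoiser_forward(init, ensemble=False)
ensembled = denoiser_forward(init, ensemble=True)
final = sample(seeds)
```

With averaging switched on, the three trajectories leave the first forward pass with the same prediction, so they share one "jury verdict" at the step where the article says the main decision is made. Each trajectory still starts from its own noise, so the final latents differ.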

Results:

- Overall Score: 68.5% → 71.6% (+3.1 points)

- Out-of-Domain Reasoning: Significant gains in knowledge and abstraction tasks.

- Cost: Requires extra computation at inference time (three trajectories instead of one) but no retraining.

Critical Analysis & Conclusion

This work is a notable contribution to mechanistic interpretability for generative models. It suggests that video models are not just "stochastic parrots" of motion but internal simulators that exhibit working memory, self-correction, and structured search.

Limitations: While 50-step models show clear CoS behavior, distilled models (4-step) struggle as the reasoning window is compressed too aggressively. This suggests a "Reasoning-Efficiency Trade-off" that future architectures must solve.

Final Takeaway: To build the next generation of AI, we should treat the diffusion process as a computational "Chain-of-Thought." The path to AGI may not just be about more data, but about giving models the "time to think" across more denoising steps.