The paper introduces Centralized Asynchronous Isolated Delegation (CAID), a multi-agent coordination framework for long-horizon software engineering tasks. Grounded in mature SWE primitives like branch-and-merge, CAID achieves state-of-the-art results on PaperBench and Commit0, improving accuracy by up to 26.7% over single-agent baselines.

TL;DR

Single-agent AI developers are hitting a wall in long-horizon tasks. While multi-agent systems seem like the answer, they often fail due to "too many cooks in the kitchen" (merge conflicts and execution interference). Enter CAID (Centralized Asynchronous Isolated Delegation): a framework from CMU that maps human software engineering (SWE) workflows—specifically git worktrees and branching—directly onto agent coordination. It boosts accuracy by up to 26.7% by ensuring agents literally cannot step on each other's toes.

Problem: The "Linguistic Alignment" Trap

Most multi-agent research focuses on how agents talk (SOPs, role-playing, topologies). However, in software engineering, talking isn't enough. If Agent A renames a function while Agent B writes code calling the old name, the system breaks—even if their conversation was perfectly polite. This "physical interference" in the shared codebase is the primary bottleneck for long-horizon autonomous coding.

The Core Insight: Software Engineering Primitives

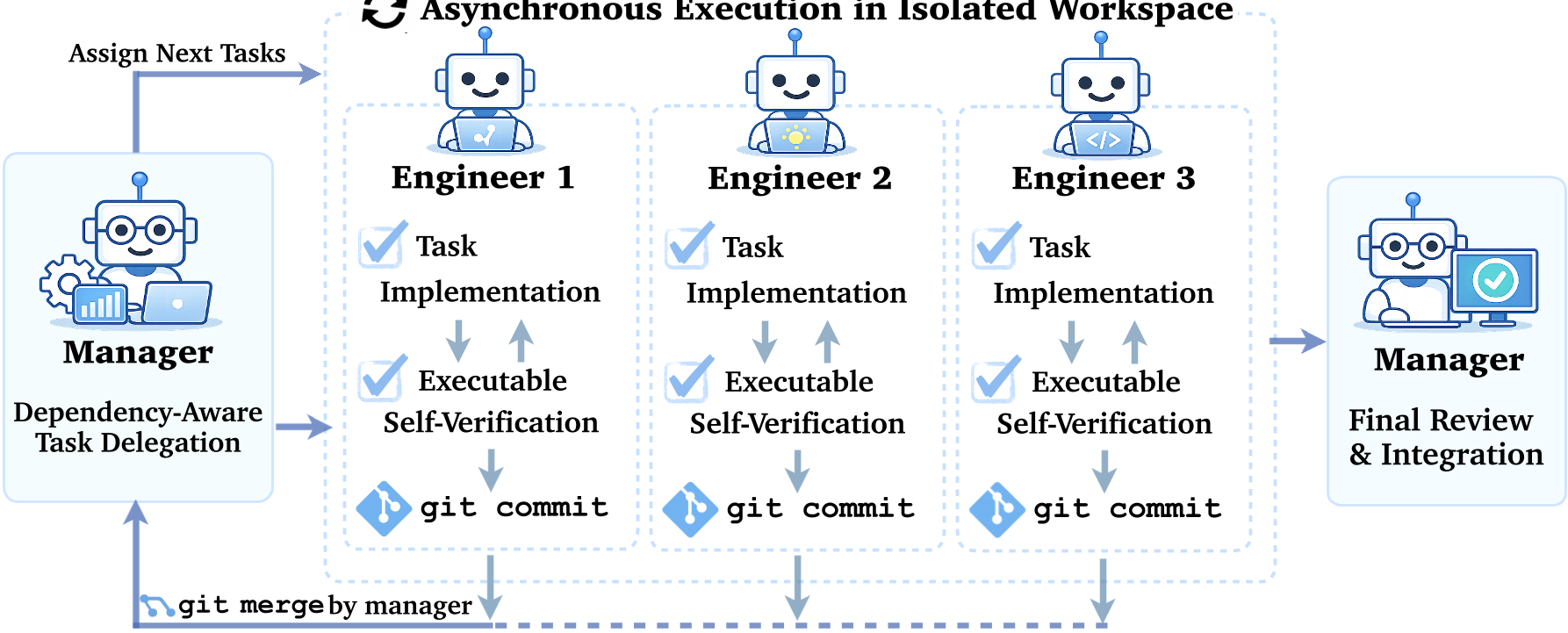

The authors argue that we don't need to reinvent collaboration; human developers already solved this decades ago with Version Control Systems (VCS). CAID is built on three pillars:

- Centralized Manager: Instead of free-form chat, a manager builds a Dependency Graph to decide what can be done in parallel.

- Isolated Workspaces: Every agent gets its own git worktree. They are physically separated; one agent's bugs don't crash another agent's environment.

- Merge-based Integration: Changes only hit the "main" branch after passing executable tests and resolving merge conflicts (handled by the sub-agents themselves).

Figure 1: The CAID loop—from dependency analysis to isolated execution and structured merging.

Methodology: Grounding Coordination in Execution

CAID's workflow is robust by design because it grounds coordination in executable test results rather than in dialogue.

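- Task Specification: The manager decomposes the repository into a directed dependency graph $G = (V, E)$. A task $v_j$ is delegated only once all of its dependencies are satisfied, i.e., every $v_i$ with $(v_i, v_j) \in E$ belongs to $C_t$, the set of tasks completed by step $t$.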

- Asynchronous Loop: Engineer agents run as independent coroutines. Instead of exchanging long free-form messages, they report progress through a structured JSON protocol, which keeps context windows from growing without bound over long runs.

- Self-Verification: An agent cannot submit a "Pull Request" until its own tests pass in its local worktree.
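The three steps above can be combined into a minimal asyncio sketch. It assumes a toy dependency graph, stand-in engineer coroutines that always pass their local tests, and hypothetical JSON report fields (`task`, `status`). The manager delegates every task whose dependencies are complete (the `completed` set plays the role of $C_t$) and marks a task done only on a passing report. This is an illustration of dependency-gated asynchronous delegation, not the paper's code.

```python
import asyncio
import json

# Hypothetical dependency graph: task -> set of tasks it depends on.
# "cli": {"parser", "io"} encodes the edges parser -> cli and io -> cli.
DEPS = {
    "utils": set(),
    "parser": {"utils"},
    "io": {"utils"},
    "cli": {"parser", "io"},
}


def ready_tasks(deps, completed, started):
    """Unstarted tasks whose dependencies are all completed (sorted for determinism)."""
    return sorted(t for t, d in deps.items() if t not in started and d <= completed)


async def engineer(task, reports):
    """Stand-in engineer agent working in its own worktree."""
    await asyncio.sleep(0)  # placeholder for LLM calls and code edits
    passed = True           # placeholder for running its own tests locally
    # Structured progress report instead of free-form chat.
    await reports.put(json.dumps(
        {"task": task, "status": "tests_passed" if passed else "tests_failed"}))


async def manager(deps):
    """Delegate ready tasks concurrently; complete a task only on a passing report."""
    completed, started, merge_order = set(), set(), []
    reports = asyncio.Queue()
    running = []
    while len(completed) < len(deps):
        for task in ready_tasks(deps, completed, started):
            started.add(task)
            running.append(asyncio.create_task(engineer(task, reports)))
        if len(started) == len(completed):
            # Nothing is in flight and nothing is ready: cycle or missing task.
            raise RuntimeError("no runnable tasks: check the graph for cycles")
        report = json.loads(await reports.get())
        if report["status"] != "tests_passed":
            raise RuntimeError(f"{report['task']} failed self-verification")
        completed.add(report["task"])  # the merge into main would happen here
        merge_order.append(report["task"])
    await asyncio.gather(*running)
    return merge_order
```

Calling `asyncio.run(manager(DEPS))` returns the order in which tasks were accepted. `parser` and `io` run concurrently once `utils` is done, while `cli` waits for both, so `utils` always comes first and `cli` last.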

Results: More Agents ≠ Better, but Better Coordination = Success

The experiments on Commit0 (building libraries from scratch) and PaperBench (reproducing research papers) yield several critical findings:

- Direct SOTA Improvement: CAID consistently outperforms single-agent setups across different LLMs (Claude 4.5, MiniMax 2.5, GLM 4.7).

- The Iteration Ceiling: Doubling the iteration limit for a single agent often hurts performance due to error accumulation. CAID, however, scales by distributing the iteration budget across agents in isolated branches.

- The Parallelism Sweet Spot: Parallel execution isn't free. Performance peaks at 4 agents on Commit0. At 8 agents, "delegation errors" propagate as the manager fails to maintain clean ownership boundaries, imposing a "coordination tax."

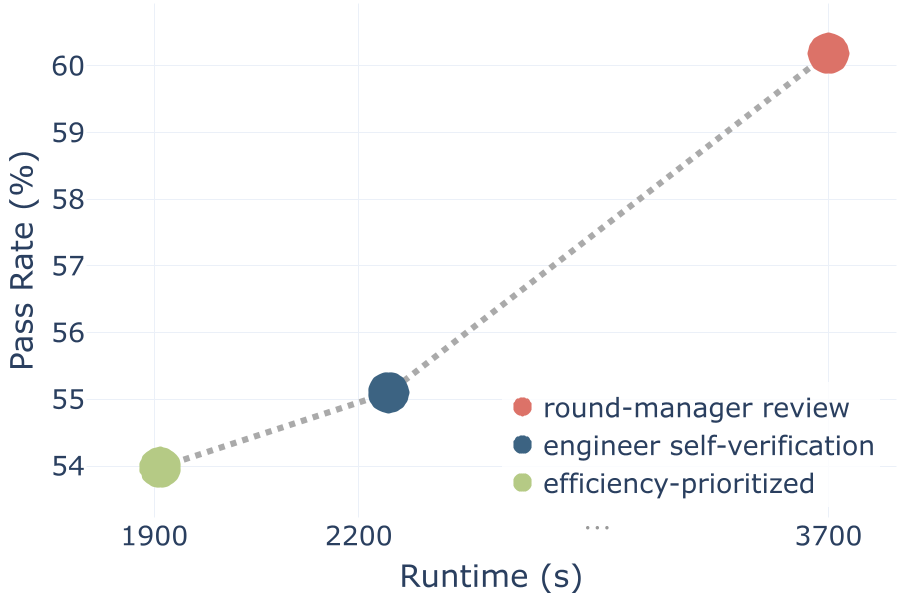

Figure 2: The trade-off between verification intensity and runtime efficiency.

Depth Analysis: Why Isolation is Mandatory

A key ablation study compared Worktree Isolation vs. Soft Isolation (agents sharing one workspace but told to "stay in their lane").

- On PaperBench, soft isolation performed worse than a single agent.

- Without physical boundaries, LLMs frequently "hallucinate" the state of the shared files, leading to catastrophic overwrites.

- Takeaway: Physical isolation (git worktree) is the stabilizer that makes multi-agent execution viable.
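The failure mode behind this ablation can be illustrated with a deliberately simplified toy model (not the paper's experiment). A "workspace" is a Python dict of file contents, and the file and function names are hypothetical. Under soft isolation, two agents write to the same file from snapshots they read earlier, so one update is silently lost. Under worktree isolation, each agent edits its own copy, and a whole-file three-way check (far coarser than git's line-level merge) surfaces the clash as an explicit conflict.

```python
# Toy model: a workspace maps file names to contents (all names hypothetical).
BASE = "def load(): ..."


def shared_workspace():
    """Soft isolation: both agents edit one shared dict from stale snapshots."""
    ws = {"api.py": BASE}
    snapshot_a = ws["api.py"]  # agent A reads the file
    snapshot_b = ws["api.py"]  # agent B reads it before A writes
    ws["api.py"] = snapshot_a + "\ndef save(): ..."   # A adds save()
    ws["api.py"] = snapshot_b + "\ndef close(): ..."  # B writes from a stale view
    return ws["api.py"]  # save() has silently disappeared


def three_way(base, ours, theirs):
    """Whole-file three-way check returning (merged_text, conflict).

    A side that did not change defers to the other; if both sides changed
    the file differently, report a conflict instead of overwriting.
    """
    if ours == base or ours == theirs:
        return theirs, False
    if theirs == base:
        return ours, False
    return None, True


def isolated_worktrees():
    """Worktree isolation: each agent edits its own copy; clashes surface at merge."""
    ours = BASE + "\ndef save(): ..."
    theirs = BASE + "\ndef close(): ..."
    return three_way(BASE, ours, theirs)
```

In the shared case, agent A's work vanishes with no error. In the isolated case, the same edits produce an explicit conflict that an agent can resolve, which is the stabilizing property described above.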

Conclusion & Future Outlook

CAID makes a strong case that the future of AI software engineering depends not just on "smarter models" but on "better infrastructure." By treating agents like human developers (giving them branches, tests, and a manager who understands dependencies), we can tackle tasks that were previously too long or complex for LLMs.

Limitations: Coordination is not free. CAID costs more in API calls and is not necessarily faster in wall-clock time, because integration steps run sequentially. The next frontier? Adaptive delegation, where managers partition tasks according to learned dependency risks rather than fixed heuristics.