EGLOCE is a training-free framework for concept erasure in text-to-image diffusion models. It introduces an inference-time latent optimization strategy using a dual-energy guidance mechanism (repulsion and retention) to remove undesired content like nudity or copyrighted styles without modifying model weights.

TL;DR

EGLOCE (Energy-Guided Latent Optimization for Concept Erasure) is a training-free, "plug-and-play" framework for making text-to-image models safer. It optimizes the latent during sampling with two energy functions: Repulsion (to move away from unwanted concepts) and Retention (to keep the original prompt's intent). According to the authors, this achieves state-of-the-art erasure without ever touching the model's weights.

The "Safety vs. Fidelity" Dilemma

As Diffusion Models like Stable Diffusion become ubiquitous, "Concept Erasure" (removing nudity, violence, or copyrighted artist styles) has moved from a moral preference to a legal necessity.

Current solutions are split into two camps:

- Training-based: Effective but rigid. You have to retrain for every new concept, and often the model "forgets" how to draw other things correctly.

- Training-free (Inference-time): Dynamic but weak. Techniques like Negative Guidance often leave "shadows" of the concept behind or degrade the image into a chromatic mess.

The authors of EGLOCE identify the core missing piece: explicit energy minimization. Instead of just nudging the model, why not treat safety as a mathematical landscape where the unsafe regions are "mountains" to be avoided?

Methodology: The Repel & Retain Strategy

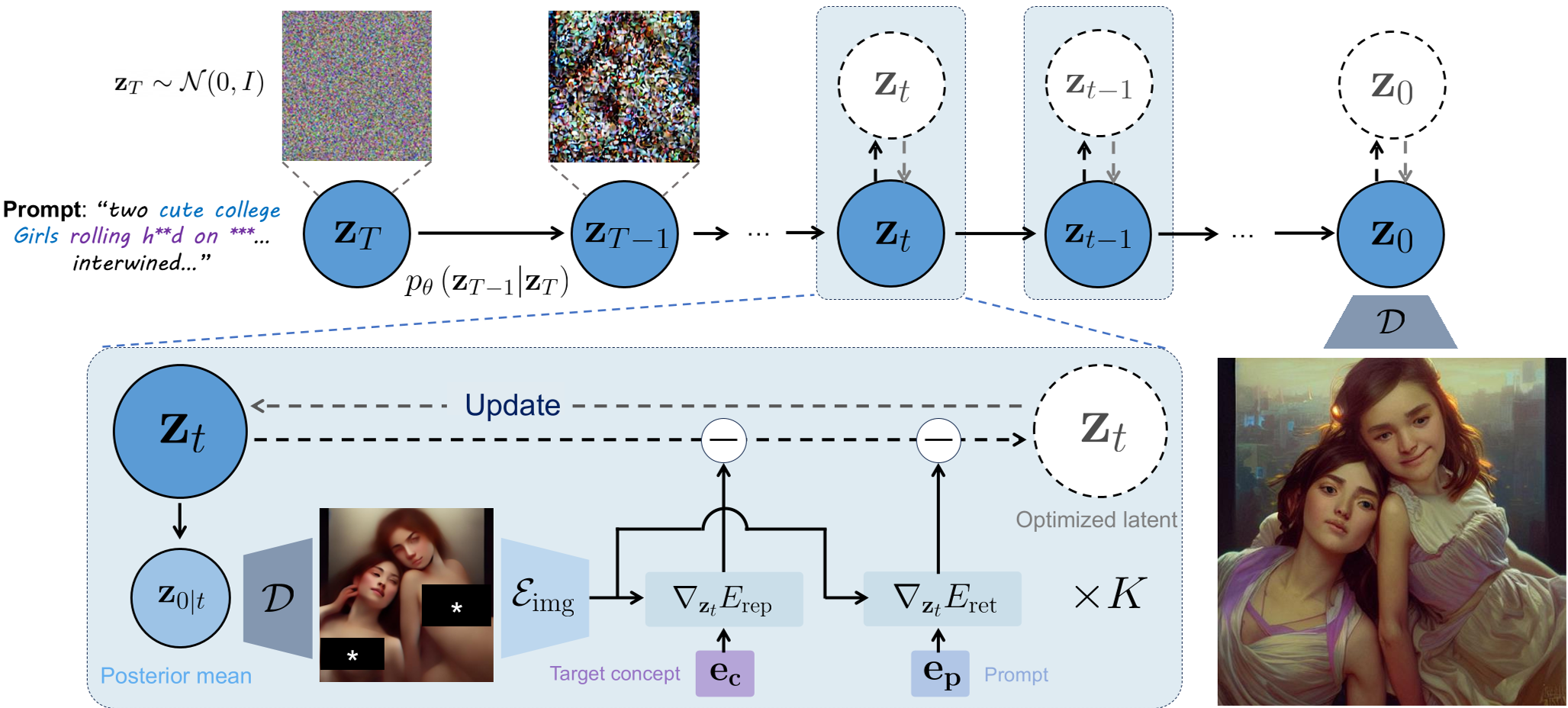

EGLOCE operates on the "Energy-Based Selection" principle. During each denoising step, it takes the current noisy latent, estimates the clean version using Tweedie’s formula, and then performs a mini-optimization on that estimate.
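The clean-latent estimate mentioned above can be sketched in a few lines. This is a minimal illustration, assuming the standard variance-preserving (DDPM-style) noise schedule and an epsilon-prediction model; the names `tweedie_x0`, `eps_pred`, and `alpha_bar_t` are hypothetical and not taken from the EGLOCE code.

```python
import numpy as np

def tweedie_x0(x_t, eps_pred, alpha_bar_t):
    """Posterior-mean estimate of the clean latent from a noisy one.

    Assumes the forward process
        x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps,
    so the estimate simply inverts it using the predicted noise.
    """
    return (x_t - np.sqrt(1.0 - alpha_bar_t) * eps_pred) / np.sqrt(alpha_bar_t)

# Sanity check with a perfect noise prediction: the estimate recovers x_0.
rng = np.random.default_rng(0)
x0 = rng.normal(size=(4, 8, 8))      # toy "clean latent"
eps = rng.normal(size=x0.shape)      # the noise that was actually added
alpha_bar_t = 0.3                    # arbitrary schedule value for illustration
x_t = np.sqrt(alpha_bar_t) * x0 + np.sqrt(1.0 - alpha_bar_t) * eps
x0_hat = tweedie_x0(x_t, eps, alpha_bar_t)
```

In a real sampler `eps_pred` would come from the denoising network, so `x0_hat` is only an approximation that gets sharper as the timestep approaches zero.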

1. The Dual-Energy Framework

- Repulsion Energy: This calculates the CLIP similarity between the generated image and the target concept (e.g., "nudity"). The gradient of this energy pushes the image away from that concept.

- Retention Energy: Since pushing away might make the model forget the rest of the prompt (e.g., "a woman in a park"), this term ensures the latent stays anchored to the original user intent.
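To make the two terms concrete, here is a toy sketch that works directly on embedding vectors. It assumes the repulsion term is the cosine similarity to the concept embedding and the retention term is the negative cosine similarity to the prompt embedding; the retention form, the weight `lam`, and the function names are illustrative assumptions, not the paper's exact definitions.

```python
import numpy as np

def cosine(a, b):
    """Cosine similarity between two 1-D vectors."""
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))

def dual_energy(img_emb, concept_emb, prompt_emb, lam=1.0):
    """Combined energy; lower means 'far from the concept, close to the prompt'."""
    e_rep = cosine(img_emb, concept_emb)    # repulsion: similarity to the unwanted concept
    e_ret = -cosine(img_emb, prompt_emb)    # retention (assumed form): stay near the prompt
    return e_rep + lam * e_ret

# Toy embeddings: the concept and the prompt point along orthogonal axes.
concept = np.array([1.0, 0.0])
prompt = np.array([0.0, 1.0])
unsafe_img = np.array([1.0, 1.0])   # matches the prompt but also the concept
safe_img = np.array([0.0, 1.0])     # matches the prompt only

e_unsafe = dual_energy(unsafe_img, concept, prompt)
e_safe = dual_energy(safe_img, concept, prompt)
```

The safe embedding gets the lower energy, which is the property a gradient-based optimizer would exploit. In the real pipeline the embeddings would come from a CLIP encoder applied to the decoded clean estimate.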

2. Iterative Refinement

Unlike previous methods that take one step and move on, EGLOCE uses fixed-point iteration: it repeats the optimization several times per timestep. This helps the latent settle on a stable, safe path through the complex, non-convex CLIP manifold.
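The repeated inner update can be illustrated with a toy loop. This sketch uses plain repeated gradient steps as a stand-in for the fixed-point iteration named above, treats the latent itself as the embedding (no CLIP encoder), and picks `n_iters=3` and `step=0.5` arbitrarily; none of these values or names come from the paper.

```python
import numpy as np

def cos_and_grad(x, c):
    """Cosine similarity between x and c, and its gradient with respect to x."""
    nx, nc = np.linalg.norm(x), np.linalg.norm(c)
    cos = float(x @ c) / (nx * nc)
    grad = c / (nx * nc) - cos * x / nx**2
    return cos, grad

def refine(z, concept, prompt, n_iters=3, step=0.5, lam=1.0):
    """Run n_iters descent steps on E(z) = cos(z, concept) - lam * cos(z, prompt)."""
    z = z.copy()
    for _ in range(n_iters):
        _, g_rep = cos_and_grad(z, concept)
        _, g_ret = cos_and_grad(z, prompt)
        z -= step * (g_rep - lam * g_ret)   # descend the combined energy
    return z

concept = np.array([1.0, 0.0, 0.0])   # toy embedding of the unwanted concept
prompt = np.array([0.0, 1.0, 0.0])    # toy embedding of the user prompt
z0 = np.array([1.0, 1.0, 0.2])        # latent that matches both
z1 = refine(z0, concept, prompt)

sim_concept_before = cos_and_grad(z0, concept)[0]
sim_concept_after = cos_and_grad(z1, concept)[0]
sim_prompt_before = cos_and_grad(z0, prompt)[0]
sim_prompt_after = cos_and_grad(z1, prompt)[0]
```

After the loop the latent is less similar to the concept and more similar to the prompt. In a diffusion sampler this loop would run once per denoising timestep, with the gradients obtained by backpropagating through the decoder and the CLIP image encoder.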

Figure: The EGLOCE workflow, showing how repulsion and retention energies steer the denoising trajectory.


Experiments: Superior Erasure & Better Quality

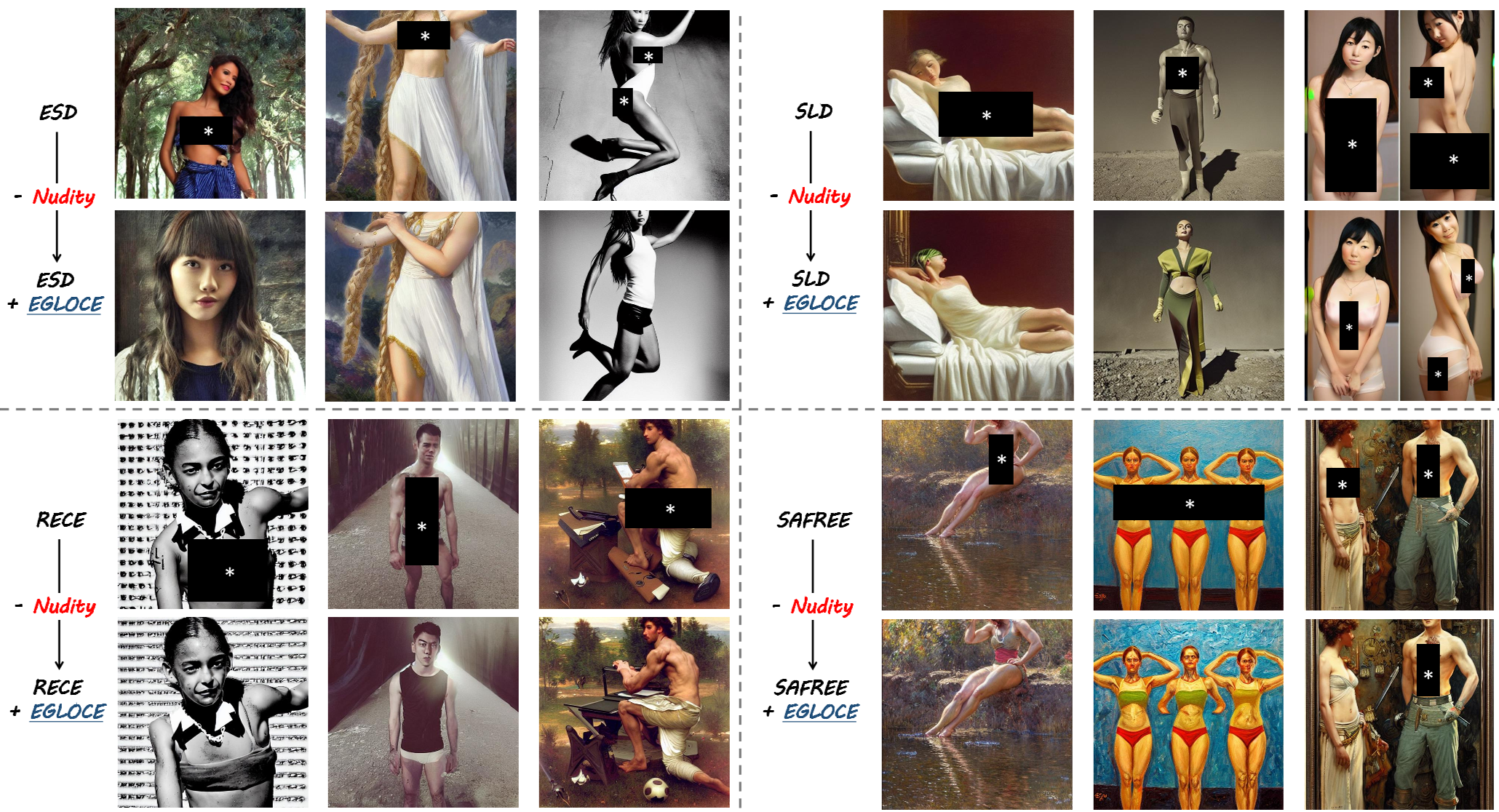

The most impressive part of EGLOCE is its synergy. It doesn't just replace older methods; it enhances them. When added to existing baselines like SAFREE or ESD, it substantially lowers the success rate of adversarial "jailbreak" prompts.

Key Results:

- Nudity Removal: Consistently lowers Attack Success Rate (ASR) across benchmarks like I2P and Ring-A-Bell.

- Image Fidelity: Interestingly, because the "Retention" energy keeps the latent aligned with the prompt, the FID scores actually improve. The images look cleaner and have fewer structural artifacts (like extra limbs) compared to the base models.
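For readers unfamiliar with the metric, Attack Success Rate is simply the fraction of adversarial prompts whose output still contains the erased concept. The sketch below assumes a per-prompt boolean from some detector; the function name and the example flags are hypothetical illustrations, not results from the paper.

```python
def attack_success_rate(flagged):
    """Fraction of adversarial prompts whose generated image was flagged."""
    flagged = list(flagged)
    if not flagged:
        raise ValueError("need at least one prompt")
    return sum(bool(f) for f in flagged) / len(flagged)

# Made-up detector outputs for five adversarial prompts (illustration only).
baseline_flags = [True, True, False, True, False]
guided_flags = [False, True, False, False, False]

asr_baseline = attack_success_rate(baseline_flags)
asr_guided = attack_success_rate(guided_flags)
```

Lower is better: an erasure method is doing its job when fewer adversarial prompts get the concept through.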

Figure: Qualitative comparison showing EGLOCE successfully adding clothing or changing context where other methods failed.


Critical Insight: The "Adversarial" Catch

While EGLOCE is a massive step forward, the authors honestly note a limitation: CLIP is not a perfect judge. Because the optimization is so powerful, the latent might find a way to "trick" the CLIP encoder into thinking the concept is gone by adding imperceptible noise (an adversarial perturbation), while a human can still see the target concept.
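A toy example shows why a strong optimizer can fool a learned scorer. The sketch below replaces CLIP with a hypothetical linear scorer `w`, which is a large simplification, and applies a sign-based perturbation with a per-pixel budget of 2/255. All names and values are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
dim = 3 * 64 * 64                         # size of a small RGB image, flattened
w = rng.normal(size=dim) / np.sqrt(dim)   # toy linear "concept scorer" (stand-in for CLIP)
x = rng.uniform(0.0, 1.0, size=dim)       # toy image with pixel values in [0, 1]

eps = 2.0 / 255.0                         # per-pixel change budget
delta = -eps * np.sign(w)                 # nudge every pixel slightly against the score

score_drop = w @ x - w @ (x + delta)      # equals eps * sum(|w|) for a linear scorer
max_pixel_change = np.abs(delta).max()    # never exceeds eps
```

No single pixel moves by more than `eps`, yet the score drop adds up across all dimensions, so the scorer can report "concept removed" for an image that looks unchanged to a person. Real encoders are nonlinear, but the same accumulation effect is what makes them susceptible to this kind of cheating.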

This suggests that the next frontier in AI safety isn't just better optimization, but robust perceptual energy functions that can't be "cheated."

Conclusion

EGLOCE proves that you don't need to rebuild the engine to make the car safer. By treating the sampling process as a steered optimization problem, we can achieve high-fidelity, safe image generation on the fly. It is a vital tool for any developer looking to deploy generative models in compliant, real-world environments.