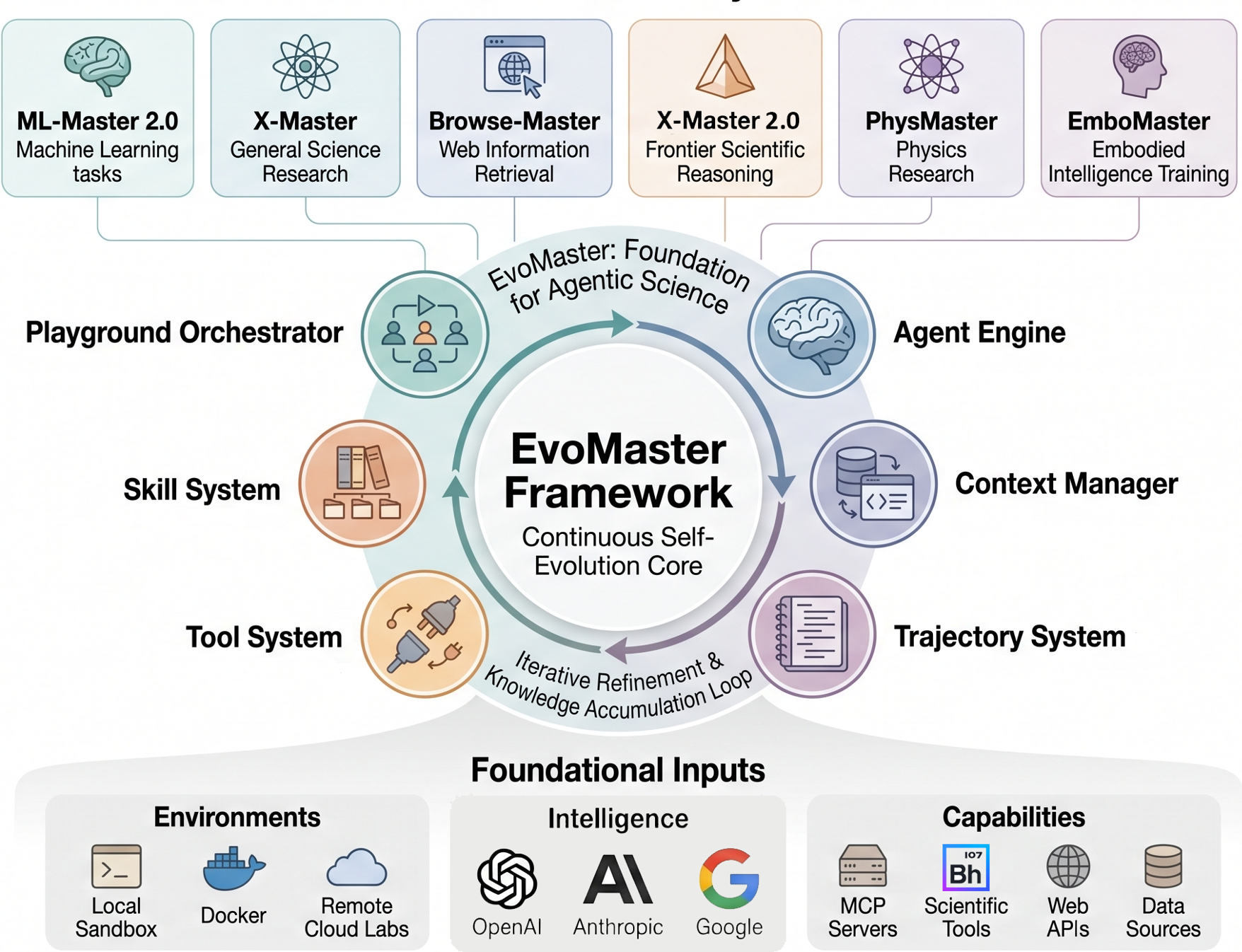

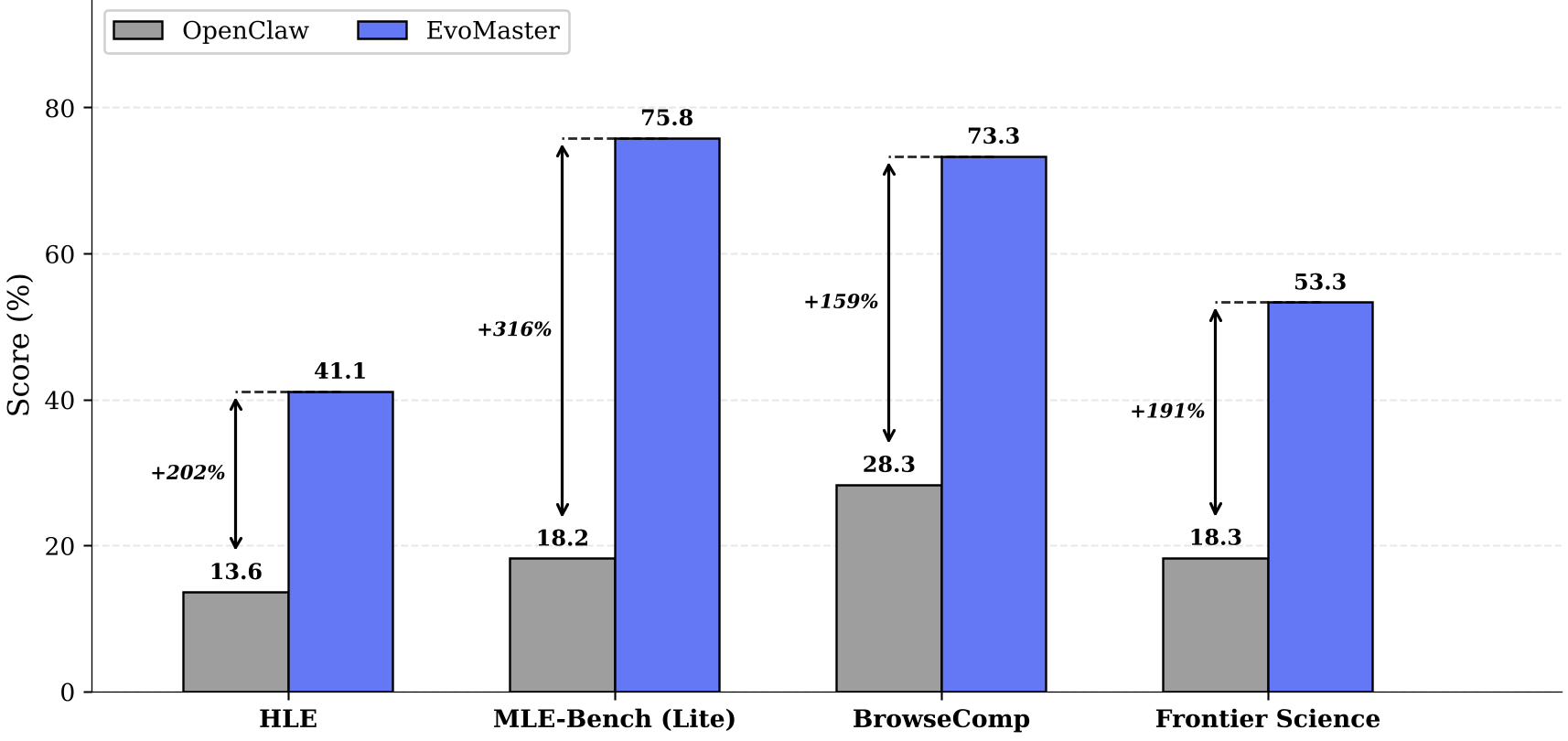

EvoMaster is a foundational evolving agent framework designed for "Agentic Science at Scale," enabling the creation of self-improving scientific agents across various disciplines with minimal code (~100 lines). By integrating iterative self-evolution and modular orchestration, it achieves state-of-the-art performance on representative benchmarks like MLE-Bench (75.8%) and HLE (41.1%).

TL;DR

Scientific discovery is rarely a straight line; it is a messy loop of failure, critique, and refinement. EvoMaster is a new foundational framework that shifts the paradigm from "static" agents to evolving researchers. By providing a modular, experiment-ready harness, it allows developers to deploy PhD-level agents for any scientific field in roughly 100 lines of code, outperforming general-purpose agents by up to 316% on complex tasks.

The "Static" Bottleneck in Agentic Science

While systems like AlphaFold or ChemCrow have revolutionized specific fields, the infrastructure for Agentic Science—autonomous agents driving the full research cycle—has remained fragmented. Current frameworks suffer from two fatal flaws:

- Domain Silos: An agent built for chemistry cannot easily be adapted for physics because the "harness" (tool orchestration and memory) is hardcoded.

- Lack of Evolution: Standard agents perform a task once and stop. They don't "learn" from a failed experiment or refine a hypothesis based on data, which is the cornerstone of human science.

Methodology: The Architecture of Evolution

EvoMaster solves this by decoupling the "intelligence" from the "infrastructure." It is built on four core pillars: Modular Composability, an Experiment-Ready Harness, Iterative Self-Evolution, and Multi-Agent Collaboration.
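To make "decoupling the intelligence from the infrastructure" concrete, here is a minimal Python sketch. It assumes a harness that knows nothing about any scientific domain and reaches tools only through a registry. `ToolRegistry`, `run_plan`, and the two toy tools are hypothetical illustrations, not EvoMaster's API.

```python
from typing import Callable


class ToolRegistry:
    """Hypothetical pluggable tool table: the harness only sees names and callables."""

    def __init__(self) -> None:
        self._tools: dict = {}

    def register(self, name: str):
        """Decorator that adds a function to this registry under `name`."""
        def decorator(fn: Callable[..., float]) -> Callable[..., float]:
            self._tools[name] = fn
            return fn
        return decorator

    def call(self, name: str, *args):
        if name not in self._tools:
            raise KeyError(f"no tool named {name!r}; available: {sorted(self._tools)}")
        return self._tools[name](*args)


def run_plan(registry: ToolRegistry, plan: list) -> list:
    """Domain-agnostic harness: runs (tool_name, args) steps against any registry."""
    return [registry.call(name, *args) for name, args in plan]


# Two domains, one unchanged harness.
chemistry = ToolRegistry()


@chemistry.register("moles")
def moles(grams: float, molar_mass: float) -> float:
    return grams / molar_mass


physics = ToolRegistry()


@physics.register("kinetic_energy")
def kinetic_energy(mass: float, velocity: float) -> float:
    return 0.5 * mass * velocity ** 2


print(run_plan(chemistry, [("moles", (36.0, 18.0))]))       # [2.0]
print(run_plan(physics, [("kinetic_energy", (2.0, 3.0))]))  # [9.0]
```

Swapping the chemistry registry for the physics one changes what the agent can do without touching `run_plan`, which is exactly the property a hardcoded, domain-siloed harness lacks.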

1. The Execution Layers

The framework separates the workflow into three distinct layers:

- Playground: The orchestration layer where specialized agents (e.g., a "Solver" and a "Critic") collaborate.

- Experiment (Exp): Manages the lifecycle of a single trial, including strict trajectory recording.

- Agent Engine: The reactive core that executes the "reason-act-observe-critique" loop.
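The three layers can be sketched as nested Python objects. This is a hypothetical illustration: the class names mirror the layer names above, but the method signatures, the `(tool_name, argument)` action format, and the rule-based functions standing in for LLM calls are assumptions, not EvoMaster's actual interfaces.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentEngine:
    """Reactive core: one reason -> act -> observe -> critique cycle per step."""
    name: str
    reason: Callable[[str, list], tuple]   # stand-in for an LLM: returns (tool_name, argument)
    tools: dict                            # tool_name -> callable(argument) -> observation
    critique: Callable[[str], str]         # stand-in for an LLM self-critique

    def step(self, task: str, trajectory: list) -> dict:
        tool_name, argument = self.reason(task, trajectory)   # reason
        observation = self.tools[tool_name](argument)         # act, then observe
        return {
            "agent": self.name,
            "action": f"{tool_name}({argument})",
            "observation": observation,
            "critique": self.critique(observation),           # critique
        }


@dataclass
class Exp:
    """A single trial: owns the trajectory and records every step in order."""
    task: str
    trajectory: list = field(default_factory=list)

    def run(self, agents: list, max_steps: int) -> list:
        for step in range(max_steps):
            for agent in agents:
                record = agent.step(self.task, self.trajectory)
                self.trajectory.append({"step": step, **record})
        return self.trajectory


@dataclass
class Playground:
    """Orchestration layer: chooses which agents collaborate on a trial."""
    agents: list

    def run_trial(self, task: str, max_steps: int = 2) -> Exp:
        exp = Exp(task)
        exp.run(self.agents, max_steps)
        return exp


# Toy usage: rule-based functions stand in for LLM calls.
tools = {
    "add": lambda expr: str(sum(int(x) for x in expr.split("+"))),
    "review": lambda n: f"reviewed {n} prior steps",
}
solver = AgentEngine("Solver", lambda task, tr: ("add", "2+2"), tools,
                     lambda obs: "accept" if obs == "4" else "revise")
critic = AgentEngine("Critic", lambda task, tr: ("review", str(len(tr))), tools,
                     lambda obs: "no issues")
exp = Playground([solver, critic]).run_trial("compute 2+2", max_steps=2)
for record in exp.trajectory:
    print(record["step"], record["agent"], record["observation"], record["critique"])
```

Keeping the trajectory inside `Exp` rather than inside each agent means every step is recorded in one ordered log, regardless of how many agents the Playground composes.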

2. The Self-Evolution Loop

The "secret sauce" of EvoMaster is its ability to handle long-horizon iteration. LLM-based agents tend to lose track of earlier context over long runs, because the accumulated history eventually exceeds what fits in the model's context window. EvoMaster addresses this with an intelligent Context Manager that uses dynamic summarization and "cognitive caching." This lets the agent maintain a coherent research strategy over hundreds of tool invocations without "forgetting" its original goal or earlier failures.
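The description of the Context Manager is high-level, so the following is only a sketch of one common way to manage long-horizon context. It assumes a pinned goal, a rolling summary that absorbs evicted messages, and a short window of recent messages kept verbatim. The source does not say how "cognitive caching" works internally; here it is approximated, as an assumption, by a cache of failure notes that is never summarized away. The `summarize` callable stands in for an LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ContextManager:
    """Hypothetical long-horizon context: pinned goal, failure cache, rolling summary, recent window."""
    goal: str                                      # pinned: never evicted or summarized
    summarize: Callable[[list], str]               # stand-in for an LLM summarization call
    window: int = 3                                # most recent messages kept verbatim
    summary: str = ""
    failures: list = field(default_factory=list)  # assumed reading of "cognitive caching"
    recent: list = field(default_factory=list)

    def add(self, message: str) -> None:
        if message.startswith("FAIL"):
            self.failures.append(message)          # failures are cached outside the window
        self.recent.append(message)
        if len(self.recent) > self.window:
            evicted = self.recent[: -self.window]
            self.recent = self.recent[-self.window:]
            # Fold evicted messages into the running summary instead of dropping them.
            previous = [self.summary] if self.summary else []
            self.summary = self.summarize(previous + evicted)

    def prompt(self) -> str:
        lines = [f"GOAL: {self.goal}"]
        lines += [f"KNOWN FAILURE: {f}" for f in self.failures]
        if self.summary:
            lines.append(f"SUMMARY: {self.summary}")
        return "\n".join(lines + self.recent)


cm = ContextManager("beat the baseline", summarize=lambda msgs: f"{len(msgs)} items condensed")
for msg in ["try A", "FAIL: A overfits", "try B", "B scores 0.71", "try C"]:
    cm.add(msg)
print(cm.prompt())
```

The prompt stays bounded in size no matter how many messages are added, yet the goal and the earlier failure remain visible verbatim even after the messages that produced them have been condensed.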

Experimental Validation: Breaking the SOTA

The researchers compared EvoMaster against OpenClaw, a leading general-purpose AI assistant, on four high-difficulty benchmarks. Two headline results:

- MLE-Bench (ML Engineering): EvoMaster achieved a 75.8% medal rate, a massive 316% improvement over the baseline. The agent didn't just write code; it iteratively optimized models based on Kaggle leaderboard feedback.

- HLE (Humanity's Last Exam): On PhD-level specialist questions, EvoMaster reached 41.1% accuracy, suggesting that structured multi-agent critique significantly boosts expert-level reasoning.
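Percentage gains like "316%" are easy to misread as percentage points. Assuming the 316% is a relative improvement on the same medal-rate metric (the article does not report OpenClaw's own score), the implied baseline follows from one line of arithmetic:

```python
def implied_baseline(new_score: float, relative_gain_pct: float) -> float:
    """Return b such that (new_score - b) / b * 100 == relative_gain_pct."""
    return new_score / (1 + relative_gain_pct / 100)


baseline = implied_baseline(75.8, 316)   # assumes "316%" is a relative gain
gap = 75.8 - baseline                    # absolute gap in percentage points
print(f"implied baseline: {baseline:.1f}%, absolute gap: {gap:.1f} points")
# implied baseline: 18.2%, absolute gap: 57.6 points
```

Under that assumption, OpenClaw would sit at roughly 18.2% and the absolute gap would be about 57.6 percentage points. These are derived figures, not numbers reported by the authors.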

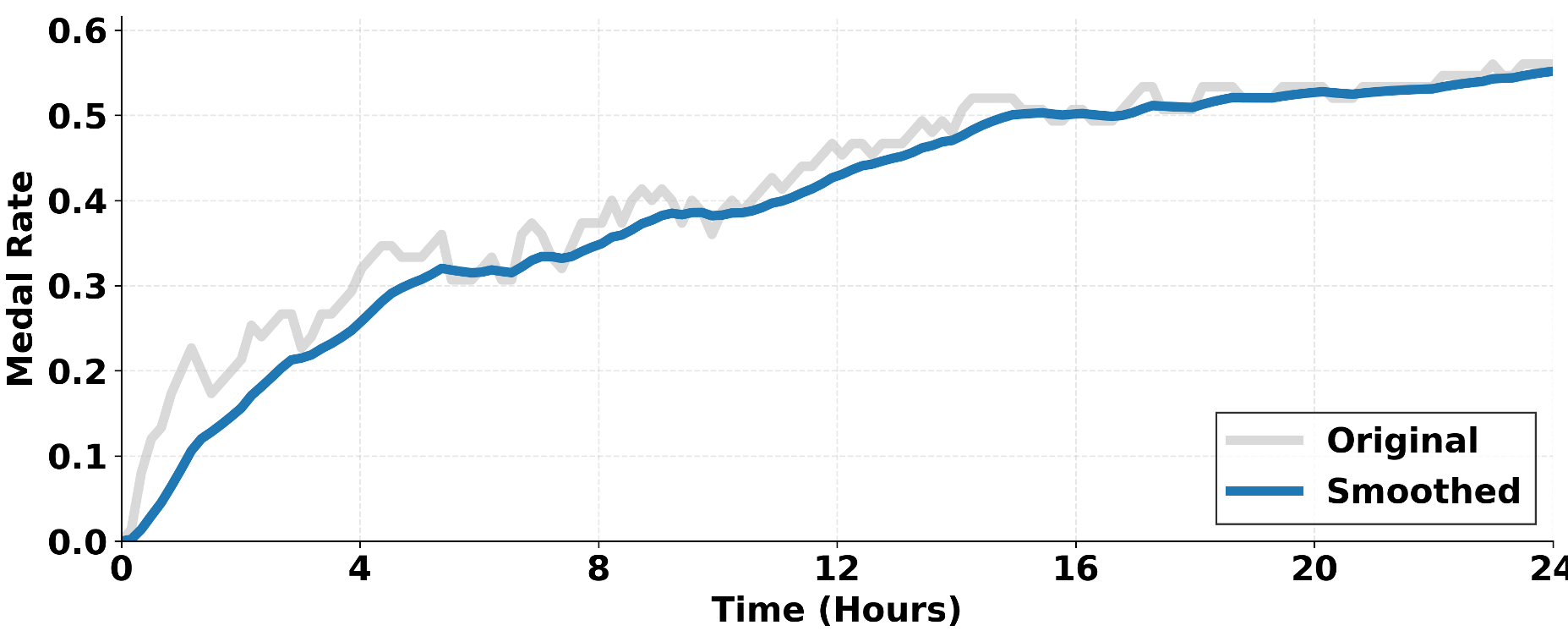

The results in Figure 2 illustrate the importance of time and iteration. As the agent "evolves" through experimental turns, its performance on MLE-Bench climbs steadily, a luxury static agents simply do not have.
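One simple reason such a curve can rise steadily is that an evolving agent keeps its best solution so far and replaces it only when a new attempt scores higher. The sketch below shows that greedy best-so-far loop in Python. The random `mutate` step is a deliberate stand-in (an assumption) for EvoMaster's LLM-driven critique and refinement, and the toy objective is invented for illustration.

```python
import random
from typing import Callable


def evolve(evaluate: Callable[[float], float], initial: float,
           mutate: Callable[[float, random.Random], float],
           turns: int, seed: int = 0) -> tuple:
    """Greedy best-so-far loop: propose, evaluate, keep only improvements."""
    rng = random.Random(seed)
    best, best_score = initial, evaluate(initial)
    curve = [best_score]                    # best score after each turn
    for _ in range(turns):
        candidate = mutate(best, rng)       # stand-in for an LLM-proposed refinement
        score = evaluate(candidate)
        if score > best_score:              # accept only strict improvements
            best, best_score = candidate, score
        curve.append(best_score)
    return best, curve


# Toy objective (invented for illustration): the best "solution" is x = 3.
best, curve = evolve(evaluate=lambda x: -(x - 3.0) ** 2, initial=0.0,
                     mutate=lambda x, rng: x + rng.uniform(-1.0, 1.0), turns=50)
print(f"start {curve[0]:.2f} -> end {curve[-1]:.2f}, best x = {best:.2f}")
```

Because a candidate is accepted only when it improves the score, the recorded curve can never decrease. A plot of per-turn rather than best-so-far scores could show small dips even when the overall trend is upward.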

Critical Insight: Why Does This Work?

EvoMaster’s success stems from a built-in inductive bias for science. By forcing the agent to "self-critique" before its next move and providing a structured "Lab Notebook" (the Trajectory System), the framework mimics the rigor of a real laboratory.
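A minimal sketch of the "Lab Notebook" plus self-critique idea follows. It assumes an append-only JSON Lines file as the notebook and a critique step that must approve a proposal before it runs. `LabNotebook`, `critique_then_act`, and the JSONL format are hypothetical; the source describes the Trajectory System only at this level of abstraction.

```python
import json
import os
import tempfile
from pathlib import Path


class LabNotebook:
    """Hypothetical append-only JSON Lines log of one trial."""

    def __init__(self, path: str) -> None:
        self.path = Path(path)

    def record(self, **entry) -> None:
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(entry) + "\n")

    def entries(self) -> list:
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            return [json.loads(line) for line in f if line.strip()]


def critique_then_act(notebook: LabNotebook, proposal: str, critique, act):
    """Self-critique gate: a proposal is reviewed and logged before it may run."""
    verdict = critique(proposal, notebook.entries())
    notebook.record(kind="critique", proposal=proposal, verdict=verdict)
    if verdict != "approve":
        return None
    result = act(proposal)
    notebook.record(kind="result", proposal=proposal, result=result)
    return result


def critique(proposal: str, history: list) -> str:
    # Reject anything the notebook already shows as a failure.
    failed = {e["proposal"] for e in history if e.get("result") == "fail"}
    return "reject" if proposal in failed else "approve"


def act(proposal: str) -> str:
    return "fail" if proposal == "lr=1.0" else "ok"   # toy experiment


with tempfile.TemporaryDirectory() as tmp:
    nb = LabNotebook(os.path.join(tmp, "trial.jsonl"))
    outcomes = [critique_then_act(nb, p, critique, act) for p in ["lr=1.0", "lr=1.0", "lr=0.01"]]
    kinds = [e["kind"] for e in nb.entries()]
print(outcomes)  # ['fail', None, 'ok']
print(kinds)
```

The critic reads the notebook, so the repeated attempt at the already-failed `lr=1.0` is rejected before any experiment runs, and the rejection itself is logged for later review.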

Key Takeaways for the AI Community:

- Scaling is Horizontal, not just Vertical: We don't just need bigger models; we need better "harnesses" that allow models to use tools across different domains seamlessly.

- Memory is Knowledge: The ability to promote "run-level wisdom" (learning what worked across multiple attempts) is more valuable than zero-shot reasoning for complex discovery.
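Promoting "run-level wisdom" can be illustrated by aggregating outcomes across attempts and keeping only the strategies that worked reliably. This is a hypothetical sketch: the `(strategy, succeeded)` record format and the `min_runs` / `min_success_rate` thresholds are assumptions chosen for illustration.

```python
from collections import defaultdict


def promote_wisdom(runs: list, min_runs: int = 2, min_success_rate: float = 0.6) -> list:
    """Return strategies that succeeded reliably across runs (hypothetical criterion).

    runs: one list per run of (strategy, succeeded) pairs.
    """
    tried = defaultdict(int)    # runs in which the strategy was attempted
    worked = defaultdict(int)   # runs in which it succeeded at least once
    for run in runs:
        per_run = defaultdict(bool)
        for strategy, succeeded in run:
            per_run[strategy] |= succeeded
        for strategy, succeeded in per_run.items():
            tried[strategy] += 1
            worked[strategy] += int(succeeded)
    return sorted(s for s in tried
                  if tried[s] >= min_runs and worked[s] / tried[s] >= min_success_rate)


runs = [
    [("feature_engineering", True), ("bigger_model", False)],
    [("feature_engineering", True), ("ensembling", True)],
    [("bigger_model", False), ("ensembling", True), ("feature_engineering", False)],
]
print(promote_wisdom(runs))  # ['ensembling', 'feature_engineering']
```

Requiring a strategy to hold up across several runs, rather than trusting a single success, is what separates this kind of accumulated memory from one-off, zero-shot reasoning.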

Future Outlook and Limitations

EvoMaster performs strongly in silico (computational research), but it currently lacks native integration with physical robotics (cloud labs). The next frontier will be bridging this digital-to-physical gap, allowing these self-evolving agents to control robotic arms and chemical synthesizers directly.

EvoMaster represents a major step toward a future where the bottleneck of science is no longer human bandwidth, but the speed of our silicon-based researchers.