FIT (Fit-Inclusive Try-on) is a large-scale dataset and model for virtual try-on that focuses on accurate garment sizing and "ill-fit" scenarios (e.g., oversized or undersized clothes). It introduces over 1.13M synthetic-to-real image triplets, achieving a new SOTA in fit-aware synthesis while maintaining high photorealism.

TL;DR

Virtual Try-On (VTO) has long been a "visual-only" affair: it looks right, but does it fit? The FIT (Fit-Inclusive Try-on) framework introduces a 1.13M-sample dataset that bridges the gap between 3D physics simulation and photorealistic image generation. By conditioning on actual centimeter measurements, FIT lets users see how an XL shirt really drapes on an XS body, overcoming the "well-fitted bias" of previous SOTA models.

Problem: The "Everything Fits" Illusion

Current VTO models are trained on catalog data, and retailers don't post photos of models wearing clothes four sizes too big or two sizes too small. As a result, these models develop an inductive bias toward a "perfect fit." If you try on a 3XL shirt in a standard VTO app, the model usually shrinks the shirt to fit your body. This fails the most basic functional requirement of a try-on: helping you choose the right size.

Methodology: High-Fidelity Sim-to-Real

The authors address the data scarcity problem through a sophisticated synthetic pipeline:

- Physics-Driven Draping: Using GarmentCode, the team simulated over 1.1M 3D draping scenarios. Unlike simple geometric scaling, this captures how fabric bunches, stretches, and folds based on physical collisions between the garment and the body mesh.

- Geometry-Preserving Re-texturing: Synthetic 3D renders look like plastic. To fix this without losing the physical accuracy of the folds, the authors used Surface Normal Maps as a bridge. They fine-tuned FLUX.1-dev to generate photorealistic skin and fabric textures conditioned on these normals.

- Paired Data Generation: Because they control the simulation, they can generate "Ground Truth" pairs (the same person in the same pose wearing different shirts), a luxury real-world datasets never provide.
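
The summary does not say how the normal maps are produced. Renderers can usually output normals directly from the mesh. The sketch below is only a self-contained way to show what the conditioning signal contains. It assumes a per-pixel depth buffer is available, estimates unit normals with finite differences, and packs them into 8-bit RGB, the usual normal-map encoding. The helper names `depth_to_normals` and `normals_to_rgb` are hypothetical. Axis sign conventions (for example, whether +y points up) vary between renderers, and this sketch does not follow any specific one.

```python
import math
from typing import List, Tuple

Normal = Tuple[float, float, float]


def depth_to_normals(depth: List[List[float]]) -> List[List[Normal]]:
    """Estimate per-pixel unit normals from a depth map (hypothetical helper).

    Assumes depth[y][x] holds camera-space depth with unit pixel spacing.
    Interior pixels use central differences; border pixels use one-sided ones.
    """
    h, w = len(depth), len(depth[0])
    normals = []
    for y in range(h):
        row = []
        for x in range(w):
            x0, x1 = max(x - 1, 0), min(x + 1, w - 1)
            y0, y1 = max(y - 1, 0), min(y + 1, h - 1)
            dz_dx = (depth[y][x1] - depth[y][x0]) / max(x1 - x0, 1)
            dz_dy = (depth[y1][x] - depth[y0][x]) / max(y1 - y0, 1)
            # The normal of the surface z = f(x, y) is proportional to (-df/dx, -df/dy, 1).
            nx, ny, nz = -dz_dx, -dz_dy, 1.0
            length = math.sqrt(nx * nx + ny * ny + nz * nz)
            row.append((nx / length, ny / length, nz / length))
        normals.append(row)
    return normals


def normals_to_rgb(normals: List[List[Normal]]) -> List[List[Tuple[int, ...]]]:
    """Map each normal component from [-1, 1] to an 8-bit channel in [0, 255]."""
    return [[tuple(round((c + 1.0) * 0.5 * 255) for c in n) for n in row] for row in normals]


# Usage: a flat surface facing the camera, and a surface whose depth grows to the right.
flat = [[2.0] * 4 for _ in range(4)]
ramp = [[float(x) for x in range(4)] for _ in range(4)]
flat_rgb = normals_to_rgb(depth_to_normals(flat))
ramp_normals = depth_to_normals(ramp)
```

The flat surface encodes to the familiar light blue (128, 128, 255) of a camera-facing normal. Folds and bunching show up as variation in the red and green channels. That variation is the geometry a normal-conditioned generator is asked to keep while it repaints the textures.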

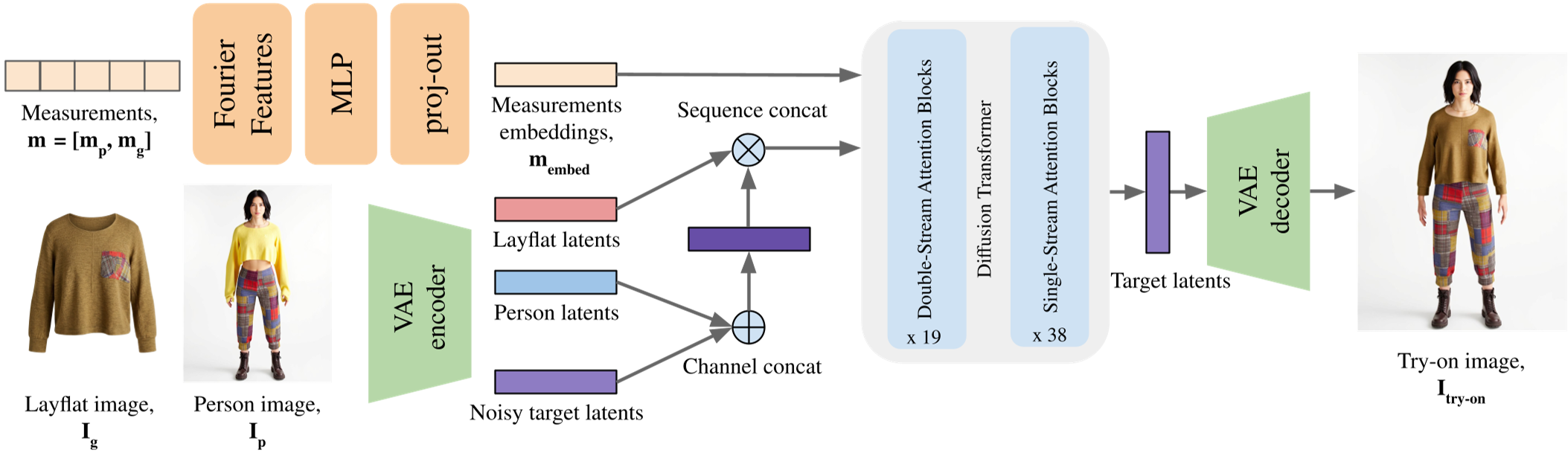

Figure 1: The Fit-VTO Architecture, showing the integration of the Measurement Encoder with the MMDiT backbone.

Figure 1: The Fit-VTO Architecture, showing the integration of the Measurement Encoder with the MMDiT backbone.

Architecture: Beyond Text Prompts

Standard models use CLIP or T5 text embeddings, but text is poor at representing precise quantities such as an "82 cm bust." FIT replaces these with a custom Measurement Encoder.

- Fourier Feature Embeddings: The model maps 7 specific dimensions (Bust, Waist, Height, etc.) into high-dimensional embeddings using Fourier frequencies.

- MMDiT Integration: These metric embeddings are injected into the Multi-modal Diffusion Transformer via cross-attention, allowing the model to "warp" the garment based on numbers, not just visual cues.
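
The summary does not give the encoder's frequencies, normalization, or projection sizes, so what follows is a minimal sketch under stated assumptions. Each measurement in centimeters is divided by a hypothetical 200 cm scale. It is then expanded into sin/cos features at frequencies 2^k·π and linearly projected into one token per measurement. Image tokens then attend to these measurement tokens through single-head cross-attention. The weights are random rather than learned, and keys and values reuse the measurement tokens directly with no K/V projections. All helper names are hypothetical. The usage example feeds only the three measurements the text names (bust, waist, height) rather than all seven.

```python
import math
import random
from typing import Dict, List

Vector = List[float]


def fourier_features(value: float, num_freqs: int = 6) -> Vector:
    """Map one scalar to [sin(2^k*pi*v), cos(2^k*pi*v)] for k = 0..num_freqs-1."""
    feats: Vector = []
    for k in range(num_freqs):
        angle = (2.0 ** k) * math.pi * value
        feats.extend([math.sin(angle), math.cos(angle)])
    return feats


def linear(x: Vector, weight: List[Vector]) -> Vector:
    """Compute y = W x, with W stored as a list of output rows."""
    return [sum(w_i * x_i for w_i, x_i in zip(row, x)) for row in weight]


def random_matrix(rows: int, cols: int, rng: random.Random) -> List[Vector]:
    """Random stand-in for a learned projection matrix."""
    return [[rng.gauss(0.0, 1.0 / math.sqrt(cols)) for _ in range(cols)] for _ in range(rows)]


def encode_measurements(measurements_cm: Dict[str, float], proj: List[Vector],
                        scale_cm: float = 200.0, num_freqs: int = 6) -> List[Vector]:
    """One token per measurement: normalize, Fourier-embed, then project (assumed design)."""
    return [linear(fourier_features(v / scale_cm, num_freqs), proj)
            for v in measurements_cm.values()]


def cross_attention(queries: List[Vector], context: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product attention; context tokens act as keys and values."""
    d = len(queries[0])
    out = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, c)) / math.sqrt(d) for c in context]
        m = max(scores)  # subtract the max for a numerically stable softmax
        exps = [math.exp(s - m) for s in scores]
        z = sum(exps)
        weights = [e / z for e in exps]
        out.append([sum(w * c[j] for w, c in zip(weights, context)) for j in range(d)])
    return out


# Usage: three measurement tokens conditioning four (random) image tokens.
rng = random.Random(0)
d_model, num_freqs = 8, 6
proj = random_matrix(d_model, 2 * num_freqs, rng)
tokens = encode_measurements({"bust": 82.0, "waist": 66.0, "height": 170.0}, proj)
image_tokens = [[rng.gauss(0.0, 1.0) for _ in range(d_model)] for _ in range(4)]
attended = cross_attention(image_tokens, tokens)
# Residual update, as is typical after an attention block.
updated = [[x + a for x, a in zip(img, att)] for img, att in zip(image_tokens, attended)]
```

The usual motivation for Fourier features is this. A raw normalized scalar gives the network a single low-frequency input. Sin/cos pairs at several frequencies instead spread small centimeter differences across many dimensions, which makes nearby values easier for downstream attention layers to tell apart.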

Experiments & Results: Real-World Generalization

The results are striking: FIT does more than swap textures; it adjusts the silhouette. In benchmarks against IDM-VTON and Any2AnyTryon, FIT was the only model that maintained size fidelity, as measured by Size-Aware IoU.
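
The summary does not define Size-Aware IoU. As a hedged sketch, assume it reduces to ordinary intersection-over-union between the predicted garment silhouette and the ground-truth silhouette that paired synthetic data makes available. The toy masks below are hypothetical. They show why a "shrink-to-fit" prediction scores poorly against an oversized ground truth.

```python
from typing import List

Mask = List[List[bool]]


def rect_mask(height: int, width: int, top: int, left: int, rect_h: int, rect_w: int) -> Mask:
    """Binary mask with a filled rectangle: a toy stand-in for a garment silhouette."""
    return [[top <= y < top + rect_h and left <= x < left + rect_w for x in range(width)]
            for y in range(height)]


def mask_iou(pred: Mask, target: Mask) -> float:
    """Intersection-over-union of two same-shaped binary masks."""
    if len(pred) != len(target) or any(len(p) != len(t) for p, t in zip(pred, target)):
        raise ValueError("masks must have the same shape")
    inter = union = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            inter += int(p and t)
            union += int(p or t)
    return inter / union if union else 1.0  # two empty masks count as a perfect match


# Ground truth: an oversized shirt covering a 6x6 region of an 8x8 canvas.
target = rect_mask(8, 8, top=1, left=1, rect_h=6, rect_w=6)
# A "shrink-to-fit" prediction: the same shirt squeezed into a 4x4 region inside it.
shrunk = rect_mask(8, 8, top=2, left=2, rect_h=4, rect_w=4)
shrunk_iou = mask_iou(shrunk, target)   # 16 / 36, about 0.44
perfect_iou = mask_iou(target, target)  # 1.0
```

The shrunken prediction can look plausible in isolation and still lose more than half its overlap score. That is the gap a size-aware metric is meant to expose.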

Figure 2: Qualitative results showing the model's ability to represent the same garment on different body sizes (XS to 3XL).

Figure 2: Qualitative results showing the model's ability to represent the same garment on different body sizes (XS to 3XL).

Specifically, the ablation studies show that while real-world data is necessary for realistic skin and hair, the synthetic FIT data is the only reason the model learns to respect garment dimensions. Without it (the "Ours-no-FIT" variant), the model reverts to the old habit of "shrinking to fit."

Critical Insight: The Future of E-Commerce

The transition from qualitative "style" transfer to quantitative "fit" transfer is a paradigm shift. The current work is limited to upper-body garments and static poses. Even so, it provides the first proof of concept that 12-billion-parameter diffusion models can be tamed by physics-based simulations.

Limitations to Watch:

- Metric Correlation: Increasing "width" sometimes also increases "length" unintentionally, because the two values are often correlated in human tailoring.

- Extreme Tightness: Physics engines still struggle to differentiate between "tight" and "skintight" (compression) without complex soft-body deformation.

Conclusion

FIT is more than a dataset; it's a benchmark for the next generation of "Size-Aware" AI. By combining physics simulation with the generative power of Flow-Matching models, this research moves us one step closer to a virtual fitting room that actually tells the truth about how a garment will look.