FlowScene is a novel tri-branch 3D indoor scene generation framework that utilizes Multimodal Graph Rectified Flow to produce style-consistent layouts, shapes, and textures. By representing scenes as multimodal graphs, it achieves SOTA performance in realism and controllability, significantly outperforming diffusion-based baselines.

TL;DR

FlowScene moves 3D indoor scene generation beyond simple object retrieval or isolated, per-object diffusion. It uses Multimodal Graph Rectified Flow to generate layouts, 3D shapes, and high-fidelity textures jointly. The result? Scenes that are not only architecturally plausible but also share a unified aesthetic "style," generated in roughly 85% less time than previous state-of-the-art diffusion models (about 6.8 s versus ~45 s for layout and shape).

Problem & Motivation: The Consistency Gap

Generating a 3D room isn't just about placing a bed and a chair; it's about ensuring the chair belongs in the same design universe as the bed. Existing methods usually fail in two ways:

- Retrieval-based methods (like Holodeck) pick existing meshes from a database. While the meshes look good, they often clash in scale, topology, and style.

- Generative graph-based models (like CommonScenes) offer control via edges (e.g., "left of"), but they struggle with high-fidelity textures and suffer from the typical "slow sampling" curse of diffusion models.

The authors' primary insight is that objects in a scene should "talk" to each other during the generation process so that their styles align.

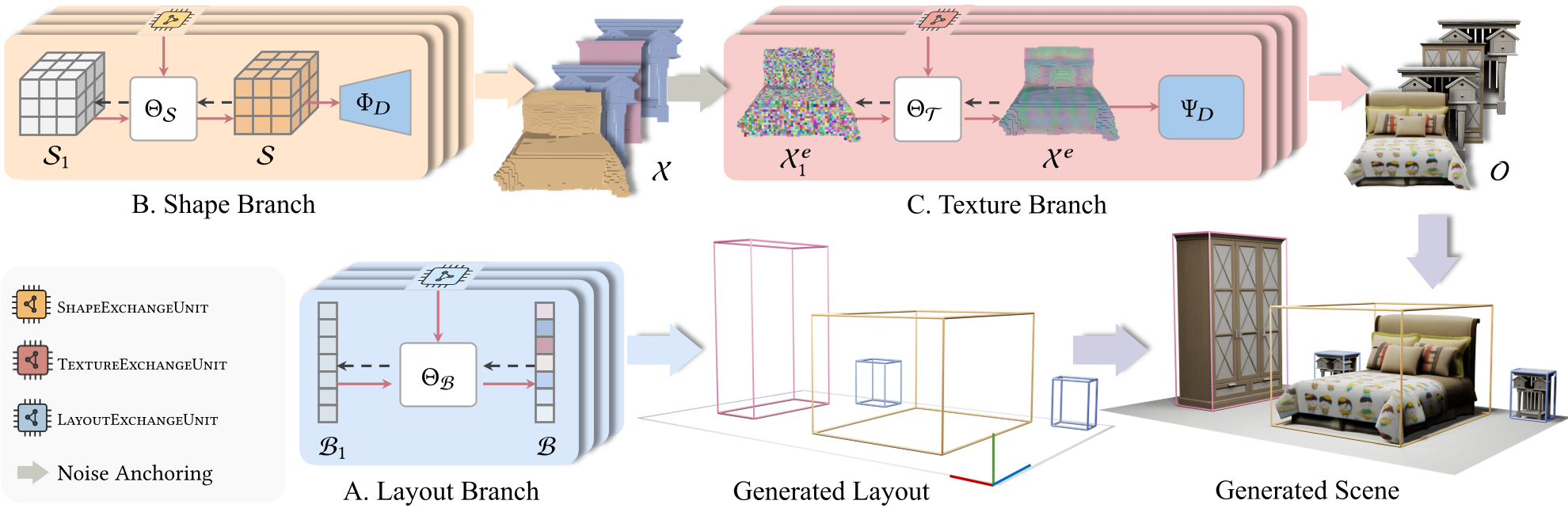

Methodology: The Tri-Branch Architecture

FlowScene treats scene generation as a coordinated dance between three specialized branches: Layout, Shape, and Texture.

1. Multimodal Graph Core

The scene is represented as a graph where nodes contain text (descriptions) or images (visual cues). A triplet-GCN (Graph Convolutional Network) acts as an InfoExchangeUnit, allowing features to flow along edges.
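To make the triplet-GCN idea concrete, here is a minimal pure-Python sketch of one message-passing round over (subject, predicate, object) triplets. It assumes node features are already embeddings of each node's text or image cue. The toy vectors, the fixed mixing weights that stand in for a learned MLP, and the names `mix` and `triplet_gcn_layer` are hypothetical, not FlowScene's code.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Vector = List[float]
Triplet = Tuple[str, Vector, str]  # (subject id, predicate embedding, object id)


def mix(a: Vector, b: Vector, c: Vector, w: Tuple[float, float, float]) -> Vector:
    """Weighted sum of three equal-length vectors (toy stand-in for a learned MLP)."""
    return [w[0] * x + w[1] * y + w[2] * z for x, y, z in zip(a, b, c)]


def triplet_gcn_layer(
    nodes: Dict[str, Vector], edges: List[Triplet]
) -> Tuple[Dict[str, Vector], List[Triplet]]:
    """One message-passing round: each triplet (s, p, o) is processed jointly,
    producing a message for s, a refined predicate, and a message for o."""
    incoming: Dict[str, List[Vector]] = defaultdict(list)
    new_edges: List[Triplet] = []
    for s, p, o in edges:
        hs, ho = nodes[s], nodes[o]
        # Hypothetical fixed weights; a real triplet-GCN learns this mapping.
        incoming[s].append(mix(hs, p, ho, (0.5, 0.25, 0.25)))
        new_p = mix(hs, p, ho, (0.25, 0.5, 0.25))
        incoming[o].append(mix(hs, p, ho, (0.25, 0.25, 0.5)))
        new_edges.append((s, new_p, o))

    updated: Dict[str, Vector] = {}
    for n, h in nodes.items():
        msgs = incoming.get(n)
        if not msgs:
            updated[n] = list(h)  # nodes without edges keep their features
        else:
            # Average-pool all messages arriving at this node.
            updated[n] = [sum(vals) / len(msgs) for vals in zip(*msgs)]
    return updated, new_edges


# Toy embeddings standing in for encoded text or image cues.
nodes = {"chair": [1.0, 0.0], "table": [0.0, 1.0], "lamp": [0.2, 0.2]}
edges = [("chair", [0.5, 0.5], "table")]  # e.g. a "same style as" predicate
updated, new_edges = triplet_gcn_layer(nodes, edges)
print(updated)
```

Each edge both updates its two endpoints and yields a refined predicate embedding; stacking several such layers lets information reach objects that are not directly connected.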

2. Rectified Flow Backbone

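Unlike traditional diffusion, whose sampling trajectories from noise to data are curved, Rectified Flow learns a velocity field that follows a straight-line trajectory between noise and data. Straight paths can be integrated accurately with only a few large ODE (Ordinary Differential Equation) steps, which makes sampling much simpler and faster.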
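Why straightness matters can be checked numerically. The sketch below assumes the common rectified-flow convention x_t = (1 - t)·x0 + t·x1, with noise x0 and data x1; the analytic velocity fields are stand-ins for a trained network, and all function names are illustrative.

```python
import math
from typing import Callable

Velocity = Callable[[float, float], float]  # v(x, t)


def interpolate(x0: float, x1: float, t: float) -> float:
    """Training input: the point at time t on the straight line from noise x0 to data x1."""
    return (1.0 - t) * x0 + t * x1


def target_velocity(x0: float, x1: float) -> float:
    """Regression target for the velocity model; constant along the whole line."""
    return x1 - x0


def euler_sample(velocity: Velocity, x0: float, steps: int) -> float:
    """Integrate dx/dt = v(x, t) from t = 0 to t = 1 with fixed-step Euler."""
    x, dt = x0, 1.0 / steps
    for i in range(steps):
        x += dt * velocity(x, i * dt)
    return x


DATA = 3.0  # toy "data distribution": a single point


def straight_velocity(x: float, t: float) -> float:
    """Analytic stand-in for a trained rectified flow: head straight for DATA."""
    return (DATA - x) / (1.0 - t)


def curved_velocity(x: float, t: float) -> float:
    """A curved trajectory for contrast: dx/dt = x, whose exact solution is x0 * e**t."""
    return x


print(euler_sample(straight_velocity, -1.0, 1))  # 3.0: one step is already exact
print(euler_sample(straight_velocity, -1.0, 50))
print(euler_sample(curved_velocity, 1.0, 1), math.e)  # 2.0 vs 2.718...
print(euler_sample(curved_velocity, 1.0, 100), math.e)
```

With the straight field a single Euler step lands on the data point, while the curved field still misses e by more than 0.01 after 100 steps. That gap is what lets rectified-flow samplers get away with far fewer integration steps.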

3. The InfoExchangeUnit

How do objects maintain style consistency? During each denoising (ODE integration) step, the InfoExchangeUnit takes the current noisy states of all objects and passes messages between them along the graph's edges. If Node A is a "wooden chair" and Node B is a "dining table" connected by a "same style as" edge, this exchange pushes their latent features toward similar materials and textures.
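The loop below sketches where such an exchange could sit inside a rectified-flow sampler. It is a toy under stated assumptions: each object's "network" simply predicts a fixed, text-conditioned target latent from its current state; the exchange is a plain neighbour average over "same style as" edges rather than a learned triplet-GCN; and `exchange`, `toy_velocity`, `sample`, and `alpha` are hypothetical names.

```python
import math
from typing import Dict, List, Tuple

Latent = List[float]


def exchange(preds: Dict[str, Latent], edges: List[Tuple[str, str]], alpha: float) -> Dict[str, Latent]:
    """Toy InfoExchangeUnit: blend each object's prediction with the mean prediction
    of the objects it shares a 'same style as' edge with. Isolated objects are unchanged."""
    nbrs: Dict[str, List[str]] = {n: [] for n in preds}
    for a, b in edges:
        nbrs[a].append(b)
        nbrs[b].append(a)
    out: Dict[str, Latent] = {}
    for n, p in preds.items():
        if not nbrs[n]:
            out[n] = list(p)
            continue
        k = len(nbrs[n])
        mean = [sum(preds[m][i] for m in nbrs[n]) / k for i in range(len(p))]
        out[n] = [(1 - alpha) * pi + alpha * mi for pi, mi in zip(p, mean)]
    return out


def toy_velocity(z: Latent, target: Latent, t: float) -> Latent:
    """Hypothetical per-object velocity model: straight line toward the object's own target."""
    return [(g - x) / (1.0 - t) for g, x in zip(target, z)]


def sample(noise: Dict[str, Latent], targets: Dict[str, Latent],
           edges: List[Tuple[str, str]], steps: int = 10, alpha: float = 0.25) -> Dict[str, Latent]:
    z = {n: list(v) for n, v in noise.items()}
    dt = 1.0 / steps
    for k in range(steps):
        t = k * dt
        # 1) Each object predicts its clean latent from its current noisy state.
        x_hat = {}
        for n in z:
            v = toy_velocity(z[n], targets[n], t)
            x_hat[n] = [x + (1.0 - t) * vi for x, vi in zip(z[n], v)]
        # 2) Message passing over the graph aligns those predictions.
        x_hat = exchange(x_hat, edges, alpha)
        # 3) Euler step along the straight line toward the aligned prediction.
        z = {n: [x + dt * (h - x) / (1.0 - t) for x, h in zip(z[n], x_hat[n])] for n in z}
    return z


noise = {"chair": [0.3, -0.2], "table": [-0.1, 0.4], "lamp": [0.0, 0.0]}
targets = {"chair": [1.0, 0.0], "table": [0.0, 1.0], "lamp": [0.5, 0.5]}
edges = [("chair", "table")]  # "same style as"
with_exchange = sample(noise, targets, edges, alpha=0.25)
without = sample(noise, targets, edges, alpha=0.0)
print(math.dist(with_exchange["chair"], with_exchange["table"]))
print(math.dist(without["chair"], without["table"]))
```

With `alpha=0.25` the chair and table finish at approximately [0.75, 0.25] and [0.25, 0.75] instead of their separate targets [1, 0] and [0, 1], so they end up closer together, while the unconnected lamp is unaffected.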

Experiments & Results: Speed Meets Realism

The performance gains are substantial across four dimensions: realism, controllability, style, and efficiency.

- Holistic Realism: In bedroom generation, FlowScene achieved an FID of 35.01, significantly lower (better) than MMGDreamer's 42.38.

- Object-Level Precision: For complex objects like nightstands, the Minimum Matching Distance (MMD) decreased by 43.9%, meaning the generated shapes are much closer to real-world distributions.

- Inference Speed: The move to Rectified Flow paid off. Generating a full layout and shape takes only 6.83 seconds, compared to ~45 seconds for previous diffusion-based methods.
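The headline numbers translate into relative terms as follows. This is a quick arithmetic check only; the ~45 s baseline is approximate, so the derived figures are too.

```python
def relative_reduction(baseline: float, new: float) -> float:
    """Fraction by which `new` is lower than `baseline`."""
    return (baseline - new) / baseline


fid_gain = relative_reduction(42.38, 35.01)  # bedroom FID: MMGDreamer vs FlowScene
time_saved = relative_reduction(45.0, 6.83)  # seconds: ~45 s baseline vs FlowScene
speedup = 45.0 / 6.83

print(f"FID reduction: {fid_gain:.1%}")  # FID reduction: 17.4%
print(f"Time saved: {time_saved:.1%}")  # Time saved: 84.8%
print(f"Speed-up: {speedup:.1f}x")  # Speed-up: 6.6x
```

So the 85% figure in the TL;DR refers to time saved, which corresponds to roughly a 6.6× speed-up.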

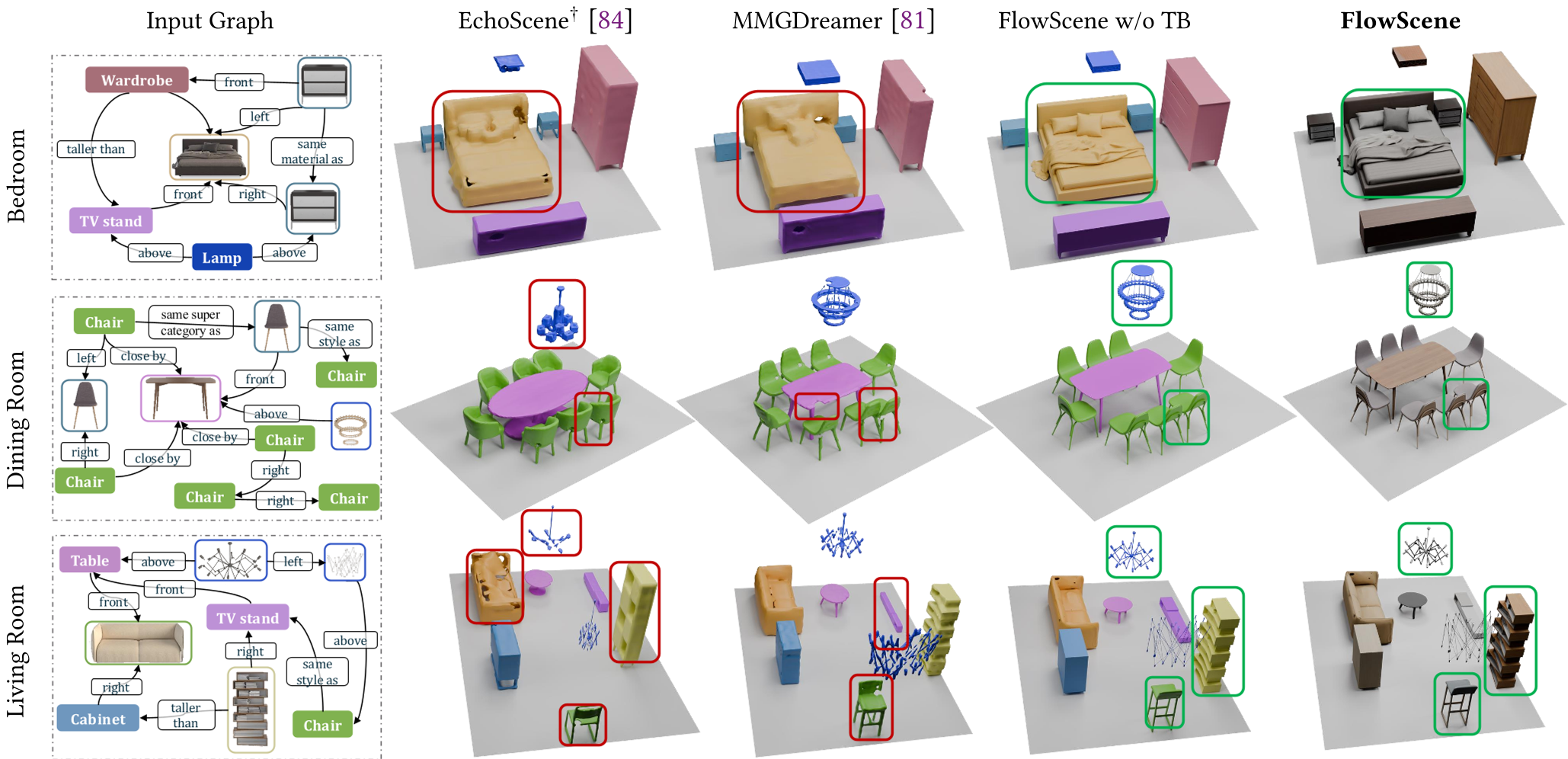

The figure above demonstrates how competitors (red boxes) often fail at texture consistency or object placement, whereas FlowScene (green boxes) maintains unified aesthetics.

Critical Analysis & Conclusion

The most impressive part of FlowScene is its robustness to modality ratios. Ablation studies showed that the model maintains correctness (SC and VQ scores) whether 10% or 90% of the input nodes have visual cues, indicating that the graph-based information exchange is doing the heavy lifting of "filling in the blanks."

Limitations:

- Data Dependency: Currently optimized for 3D-FRONT, its performance on messy, real-world outdoor data is unproven.

- Upstream Reliance: If the LLM/VLM used to build the graph makes a mistake (e.g., impossible spatial relations), FlowScene will faithfully generate a "broken" scene.

Conclusion: FlowScene proves that the "Rectified Flow + Graph" combination is a superior alternative to standard Diffusion for multi-object compositional tasks. It paves the way for interactive, real-time 3D interior design tools.