The paper introduces Geometric Latent Diffusion (GLD), a framework that repurposes the feature space of geometric foundation models (e.g., Depth Anything 3) as the latent space for multi-view novel view synthesis (NVS). GLD achieves state-of-the-art 3D consistency and image quality, outperforming VAE-based diffusion models while accelerating training convergence by 4.4x.

Executive Summary

TL;DR: Geometric Latent Diffusion (GLD) shifts the paradigm of Novel View Synthesis (NVS) by moving the diffusion process from a standard VAE space to the feature space of Geometric Foundation Models (like Depth Anything 3). By leveraging latents that already "understand" 3D structure, GLD achieves superior geometric consistency, 4.4x faster training convergence, and zero-shot 3D reconstruction capabilities—all without requiring massive text-to-image pretraining.

Context: In the landscape of generative AI, this work sits at the intersection of Visual Foundation Models and Diffusion Probabilistic Models. It is a "structural optimization" work that proves the latent space itself is the bottleneck for geometry-aware generation.

The Motivation: Why 2D Latents Fail 3D Tasks

Current state-of-the-art NVS methods usually fine-tune Stable Diffusion. However, Stable Diffusion lives in a VAE latent space optimized for texture and 2D semantics, not 3D spatial relationships.

The authors argue that this forces the diffusion model to do "double duty": it must learn to generate pixels while simultaneously trying to rediscover the laws of epipolar geometry. This leads to common failure modes like "flickering" textures or warped structures when moving between viewpoints.

The Insight: If we use a latent space from a model already trained for depth estimation and point matching, the diffusion process "inherits" a coordinate system that is naturally 3D-aware.

Methodology: The GLD Framework

1. Repurposing the Geometric Backbone

GLD utilizes Depth Anything 3 (DA3) as its core. Instead of a VAE, it uses the DA3 encoder to transform images into a multi-level feature hierarchy. The key discovery is the Boundary Layer Selection:

- Shallow layers (Level 0): High texture/color detail, low geometric consistency.

- Deep layers (Level 2-3): High geometric abstraction, loss of fine-grained photometric detail.

- The Sweet Spot (Level 1): The optimal boundary that provides enough spatial grounding for 3D consistency while retaining enough appearance info for high-fidelity decoding.

2. Cascaded Feature Synthesis

Synthesizing all four levels of a foundation model is expensive. GLD uses a "Propagate-and-Cascade" strategy:

- Direct Synthesis: A diffusion model generates Level 1 features.

- Propagation: Deeper features (Lv 2-3) are derived by simply passing Lv 1 through the frozen DA3 blocks.

- Cascaded Generation: A smaller diffusion model () generates the high-res Lv 0 features, conditioned on Lv 1 to ensure they don't drift apart.

Experiments: Breaking the "Pretraining" Dependency

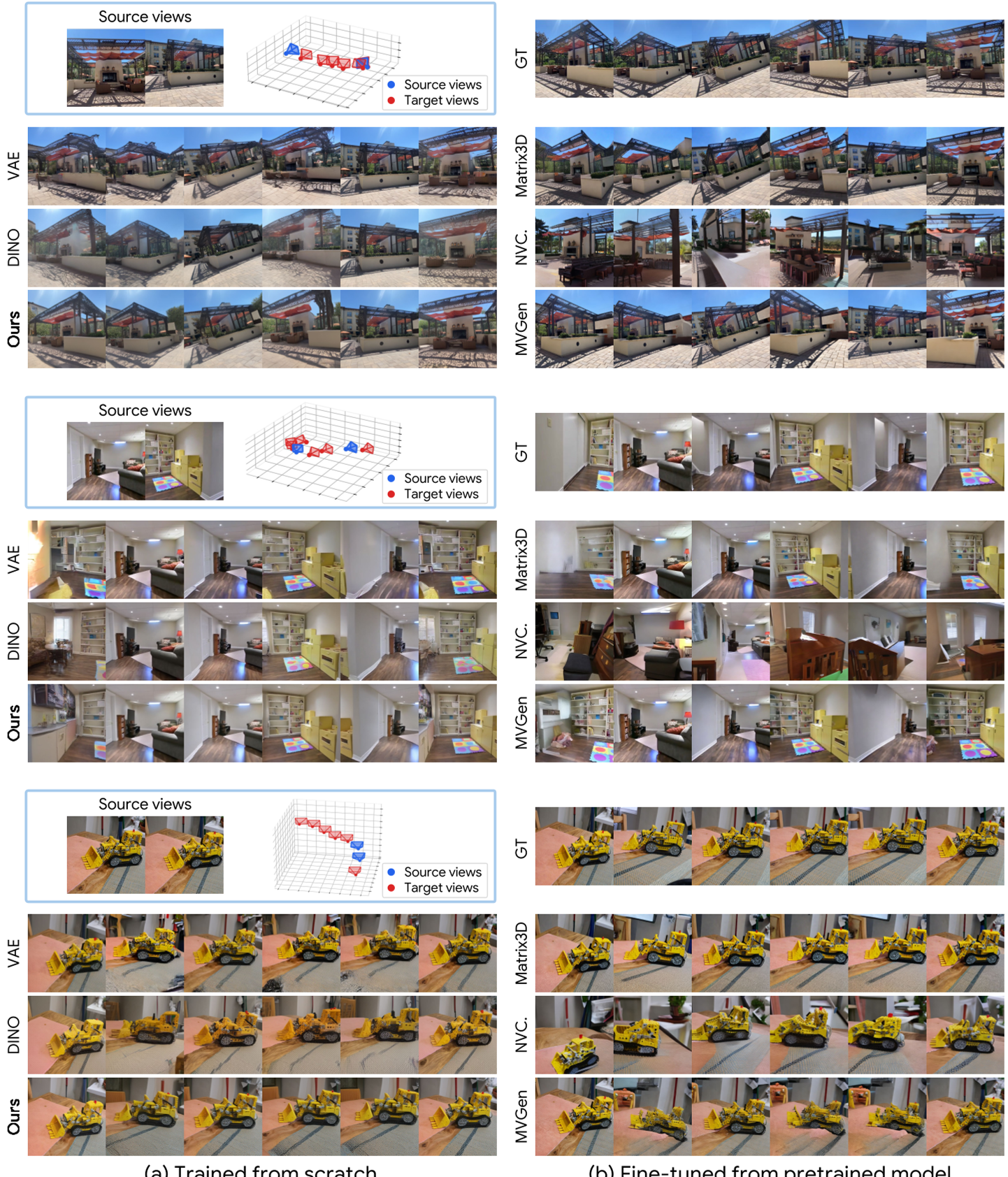

One of the most striking results is that GLD, trained from scratch on relatively small datasets (RealEstate10K, DL3DV), outperforms or matches models like MVGenMaster and CAT3D which rely on the massive priors of Stable Diffusion.

Quantitative Superiority

| Metric | VAE (Scratch) | GLD (Ours) | Improvement | | :--- | :--- | :--- | :--- | | PSNR (Higher is better) | 15.65 | 16.36 | +4.5% | | ATE (Lower is better) | 0.278 | 0.211 | -24% Error | | Training Speed | 1.0x | 4.4x | Much Faster |

The 3D metrics (ATE, RPE) show that GLD's camera adherence is significantly more precise, meaning the generated views actually "stay in place" relative to the requested camera movement.

Zero-Shot 3D Reconstruction

Because GLD generates features in the DA3 space, you can plug the generated latents into the original DA3 depth head. This means for every image you generate, you get a perfectly aligned depth map for free, allowing for instant 3D point cloud unprojection.

Visual evidence shows GLD maintaining sharp edges and correct perspective even where VAE-based models hallucinate or blur.

Visual evidence shows GLD maintaining sharp edges and correct perspective even where VAE-based models hallucinate or blur.

Critical Insights & Limitations

- The Power of Inductive Bias: This paper proves that choosing the right "language" (latent space) for a task can be more powerful than just throwing more data or larger T2I priors at the problem.

- Efficiency: The 4.4x training speedup suggests that the model spends less time learning "how 3D works" and more time learning "what the scene looks like."

- Limitations: The model still struggles with extreme occlusions—places where the foundation model itself hasn't seen enough data to provide a reliable prior. Furthermore, the two-stage sampling (Lv 1 then Lv 0) adds some inference latency.

Conclusion

Geometric Latent Diffusion is a compelling argument for Task-Specific Foundation Latents. As we move toward more specialized AI applications (Robotics, AR/VR, Med-Tech), the era of using a one-size-fits-all 2D VAE may be coming to an end. GLD paves the way for generative models that aren't just "painting" 3D scenes, but truly "constructing" them.