GSEM (Graph-based Self-Evolving Memory) is a novel framework for clinical decision-making agents that organizes prior experiences into a dual-layer memory graph. It achieves SOTA performance on MedR-Bench and MedAgentsBench, reaching up to 70.90% accuracy with DeepSeek-V3.2 by leveraging relational dependencies and online feedback-driven calibration.

TL;DR

Clinical decision-making is high-stakes and context-dependent. GSEM (Graph-based Self-Evolving Memory) moves beyond simple "flat" retrieval by organizing clinical experiences into a dual-layer graph. By modeling both the internal logic of each case and the relationships between cases, it achieves SOTA results on two medical benchmarks (MedR-Bench, MedAgentsBench) and does especially well on complex treatment planning, where flat retrieval falls short.

Problem & Motivation: The Failure of Flat Memory

While Large Language Models (LLMs) are powerful, they often struggle with the "narrow boundaries" of medicine. The authors identify two critical failure modes in current memory-augmented agents:

- Boundary Failure: An agent retrieves a past experience that looks similar but ignores a "contraindication" (a reason not to do something), leading to dangerous errors.

- Collaboration Failure: The agent retrieves multiple snippets that are individually true but collectively incoherent, failing to form a unified treatment plan.

The core insight of GSEM is that clinical experience is not a list of records; it is a web of relationships.

Methodology: The Dual-Layer Architecture

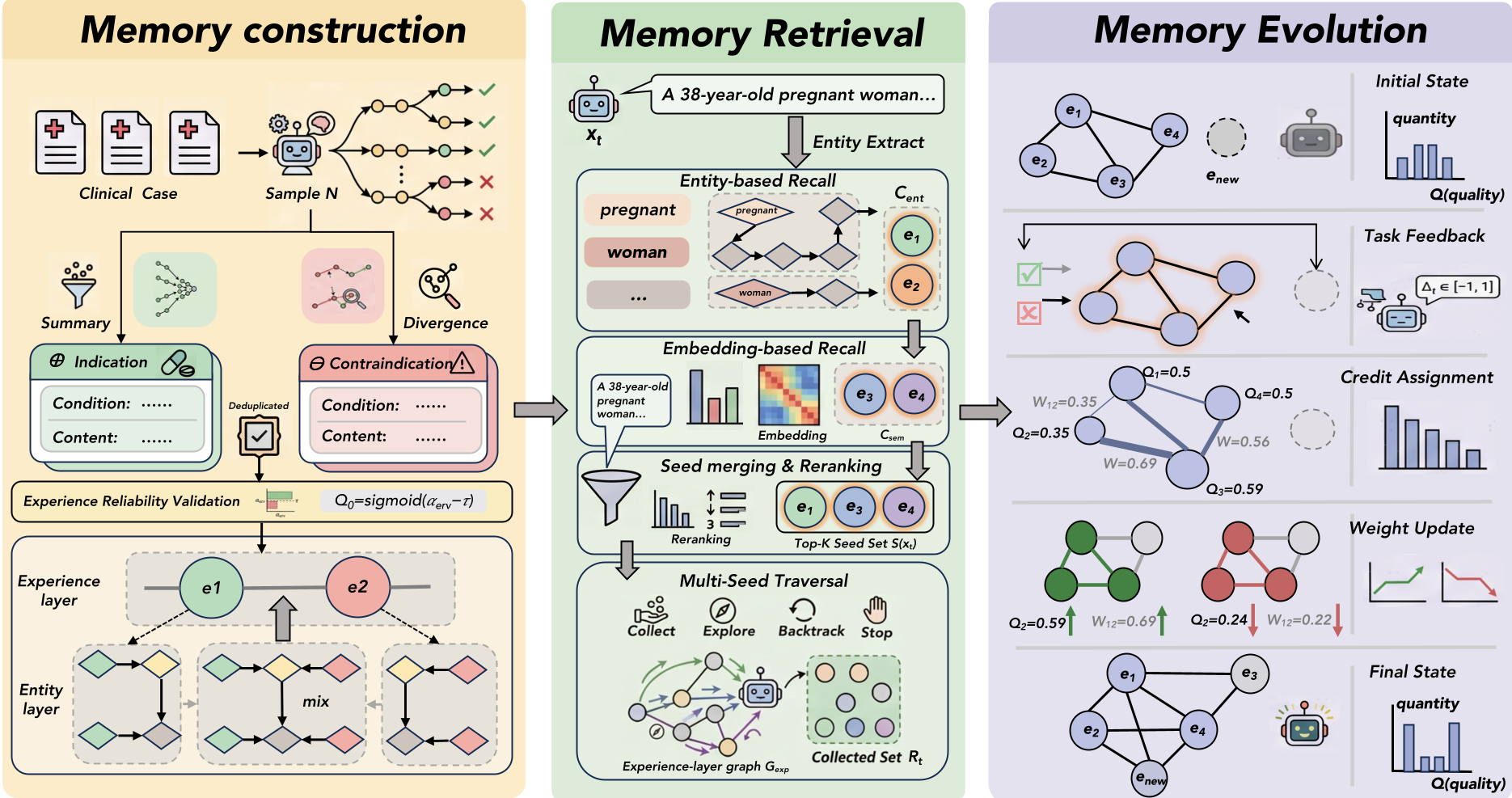

GSEM operates in three distinct stages: Construction, Retrieval, and Evolution.

1. The Dual-Layer Memory Graph

The framework doesn't just store text; it parses experiences into:

- Entity Layer: Maps out the "Condition $\to$ Action $\to$ Outcome" flow within a single experience.

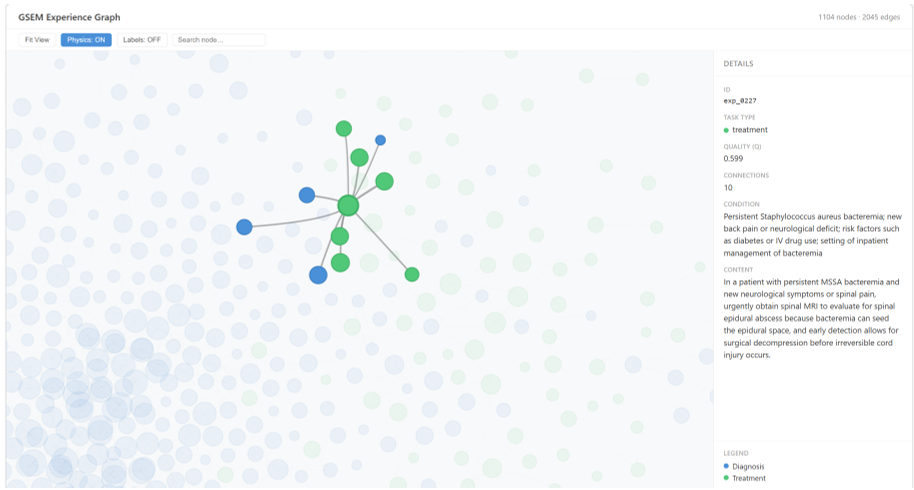

- Experience Layer: Captures how different experiences relate to one another using weights ($W$) and quality scores ($Q$).
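
The two layers can be pictured as a small data structure. The sketch below is illustrative only: the class names `Experience` and `MemoryGraph`, the undirected edges, and the 0.5 starting values for $Q$ and $W$ are assumptions made for this example, not details taken from the paper.

```python
from dataclasses import dataclass, field


@dataclass
class Experience:
    """One stored experience; the three text fields are its entity-layer chain."""
    exp_id: str
    condition: str
    action: str
    outcome: str
    quality: float = 0.5  # Q; the 0.5 starting value is an assumption


@dataclass
class MemoryGraph:
    """Experience layer: experiences as nodes, weighted links between them."""
    nodes: dict[str, Experience] = field(default_factory=dict)
    weights: dict[tuple[str, str], float] = field(default_factory=dict)  # W

    def add(self, exp: Experience) -> None:
        self.nodes[exp.exp_id] = exp

    def link(self, a: str, b: str, weight: float = 0.5) -> None:
        # Undirected edge (an assumption), stored under a sorted key.
        if a not in self.nodes or b not in self.nodes:
            raise KeyError("both experiences must be added before linking")
        self.weights[tuple(sorted((a, b)))] = weight

    def neighbors(self, exp_id: str) -> list[tuple[str, float]]:
        """Return (neighbor_id, W) pairs, strongest link first."""
        out = []
        for (a, b), w in self.weights.items():
            if exp_id == a:
                out.append((b, w))
            elif exp_id == b:
                out.append((a, w))
        return sorted(out, key=lambda pair: pair[1], reverse=True)


# Placeholder content; no real clinical data is implied.
graph = MemoryGraph()
graph.add(Experience("e1", "condition A", "action X", "outcome improved"))
graph.add(Experience("e2", "condition A with factor B", "action Y", "outcome improved"))
graph.link("e1", "e2", weight=0.8)
```

Keeping the Condition, Action, and Outcome fields on the node and the weights in a separate map mirrors the split between the entity layer and the experience layer.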

2. Applicability-Aware Retrieval

Instead of a simple vector search, GSEM uses Multi-Seed Traversal. It identifies "seed" experiences and then uses the LLM to navigate the graph, "collecting" related experiences or "exploring" neighbors based on learned transition weights. This ensures the retrieved context is both applicable and collaborative.
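
A rough sketch of how such a traversal could look follows. It is not the paper's algorithm: the function name `multi_seed_traversal`, the `decide` callback that stands in for the LLM call, the "collect"/"explore" return values, and the step budget are all assumptions made for illustration.

```python
from collections import deque
from typing import Callable


def multi_seed_traversal(
    seeds: list[str],
    edges: dict[str, dict[str, float]],
    decide: Callable[[str], str],
    max_steps: int = 10,
) -> list[str]:
    """Walk the experience layer from several seeds and return collected ids.

    decide(exp_id) is a stand-in for the LLM judgment. Assumed contract:
      "collect" -> keep this experience and look at its neighbors
      "explore" -> do not keep it, but still look at its neighbors
      anything else -> drop it and do not expand further
    """
    collected: list[str] = []
    visited: set[str] = set()
    frontier = deque(seeds)
    steps = 0
    while frontier and steps < max_steps:
        current = frontier.popleft()
        if current in visited:
            continue
        visited.add(current)
        steps += 1
        action = decide(current)
        if action == "collect":
            collected.append(current)
        if action in ("collect", "explore"):
            # Queue neighbors with the strongest transition weight first.
            ranked = sorted(
                edges.get(current, {}).items(),
                key=lambda pair: pair[1],
                reverse=True,
            )
            frontier.extend(n for n, _ in ranked if n not in visited)
    return collected


# Toy graph and a scripted decision function in place of a real LLM.
toy_edges = {"a": {"b": 0.9, "c": 0.2}, "b": {"d": 0.5}}
script = {"a": "collect", "b": "explore", "c": "collect", "d": "collect"}
context = multi_seed_traversal(["a"], toy_edges, lambda e: script.get(e, "skip"))
```

In a real system `decide` would prompt the model with the query and the candidate experience; the step budget is one simple way to bound the number of LLM calls.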

3. Self-Evolution (The "Learning" Loop)

Unlike static RAG, GSEM evolves. After each task, it receives feedback (correct/incorrect) and propagates this "credit" back to the nodes and edges it used:

- Node Quality ($Q$): Reliability of a specific medical strategy.

- Edge Weight ($W$): How well two strategies work together.
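
One simple way to picture this credit assignment is a moving-average update. This summary does not give the paper's actual rule, so the function `apply_feedback`, the learning rate `lr`, and the 0/1 targets below are assumptions chosen for illustration.

```python
from itertools import combinations


def apply_feedback(
    quality: dict[str, float],
    weights: dict[tuple[str, str], float],
    used_ids: list[str],
    correct: bool,
    lr: float = 0.1,
) -> None:
    """Nudge Q and W of the experiences used in a task toward 1 or 0.

    Assumed rule: value <- value + lr * (target - value), with target 1.0
    for correct feedback and 0.0 for incorrect feedback. For lr in [0, 1]
    the scores stay inside [0, 1].
    """
    target = 1.0 if correct else 0.0
    # Node quality Q: reliability of each experience that was used.
    for exp_id in used_ids:
        q = quality.get(exp_id, 0.5)
        quality[exp_id] = q + lr * (target - q)
    # Edge weight W: only existing edges between co-used experiences change.
    for pair in combinations(sorted(set(used_ids)), 2):
        if pair in weights:
            w = weights[pair]
            weights[pair] = w + lr * (target - w)


# Edge keys are sorted (id_a, id_b) tuples in this sketch.
Q = {"e1": 0.5, "e2": 0.5, "e3": 0.5}
W = {("e1", "e2"): 0.5}
apply_feedback(Q, W, used_ids=["e1", "e2"], correct=True, lr=0.2)
```

Under this rule, repeated feedback of the same sign moves a score steadily toward 0 or 1, while experiences that were not used keep their current values.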

Experiments & Results: Dominating the Benchmarks

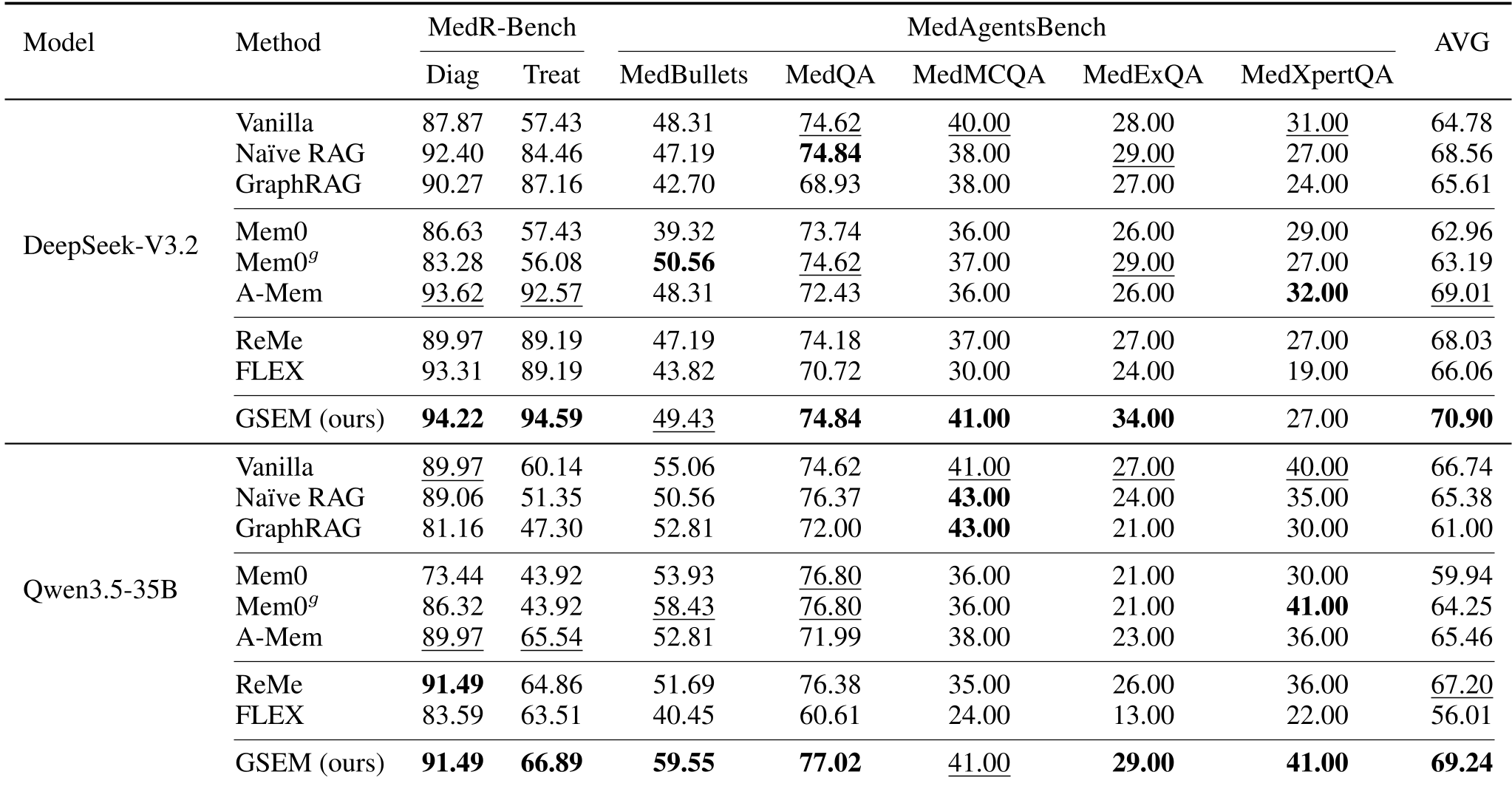

GSEM was tested against heavyweights like GraphRAG and Mem0.

- Accuracy Boost: On MedR-Bench Treatment tasks, GSEM reached 94.59% accuracy, 37.16 points above vanilla LLM inference (57.43%) and 10.13 points above standard Naïve RAG (84.46%).

- Consistency: Across diverse subsets like MedBullets and MedQA, GSEM maintained the highest average performance, which suggests the gains are robust rather than tied to a single dataset.

Ablation Insight

Analysis of the "Self-Evolution" (SE) mechanism showed that as the system processes more cases, its internal graph becomes more "polarized", clearly separating high-quality strategies from unreliable ones.

Critical Analysis & Conclusion

GSEM represents a shift from Retrieval-Augmented Generation to Experience-Augmented Reasoning. By treating memory as a living graph, it mimics how expert clinicians build intuition over years of practice: not just by remembering cases, but by understanding how those cases connect.

Limitations: The current system relies on LLM-guided traversal, which can be computationally expensive. Future iterations may need more lightweight graph traversal policies to scale to millions of experiences.

Takeaway: The future of AI in specialized fields isn't just bigger models, but better-structured memory systems that can "evolve" alongside the professional.