This paper introduces a Graph-RAG (Retrieval-Augmented Generation) framework that combines Knowledge Graphs (KGs) with Large Language Models (LLMs) to provide explainable AI (XAI) for machine learning in manufacturing. By extending the ML-Schema ontology to store domain-specific context alongside ML results, the framework produces structured, user-friendly explanations through an iterative, multi-turn retrieval mechanism.

TL;DR

In the complex world of manufacturing, knowing that a model predicted a failure isn't enough; engineers need to know why in the context of their specific machinery. This paper presents a novel Graph-RAG approach that links Knowledge Graphs (KG) with LLMs to transform cryptic ML outputs into structured, domain-aware natural language explanations. By treating the KG as a dynamic reference library, the system provides transparent insights that both developers and factory workers can actually use.

The "Context Gap" in Industrial AI

The Achilles' heel of modern Machine Learning in industry isn't just accuracy; it's interpretability. Current Explainable AI (XAI) techniques like SHAP or LIME often output numeric feature importances that look like "Feature 42 = 0.85." To a technician on the shop floor, a bare number attached to an anonymous feature index says nothing about which part of the machine is involved.

The authors identify two critical failures:

- Lack of Domain Context: ML models don't "know" what a robotic arm or a screw angle is; they only see tensors.

- LLM Hallucinations: While LLMs can speak fluently, they often invent facts when asked about specific, private industrial data.

Methodology: The Branch-and-Bound Traversal

Rather than forcing an LLM to learn an entire factory's documentation through fine-tuning (which is expensive and prone to "catastrophic forgetting"), the authors use Graph-RAG: the LLM stays unchanged and retrieves the facts it needs from the KG at question time.

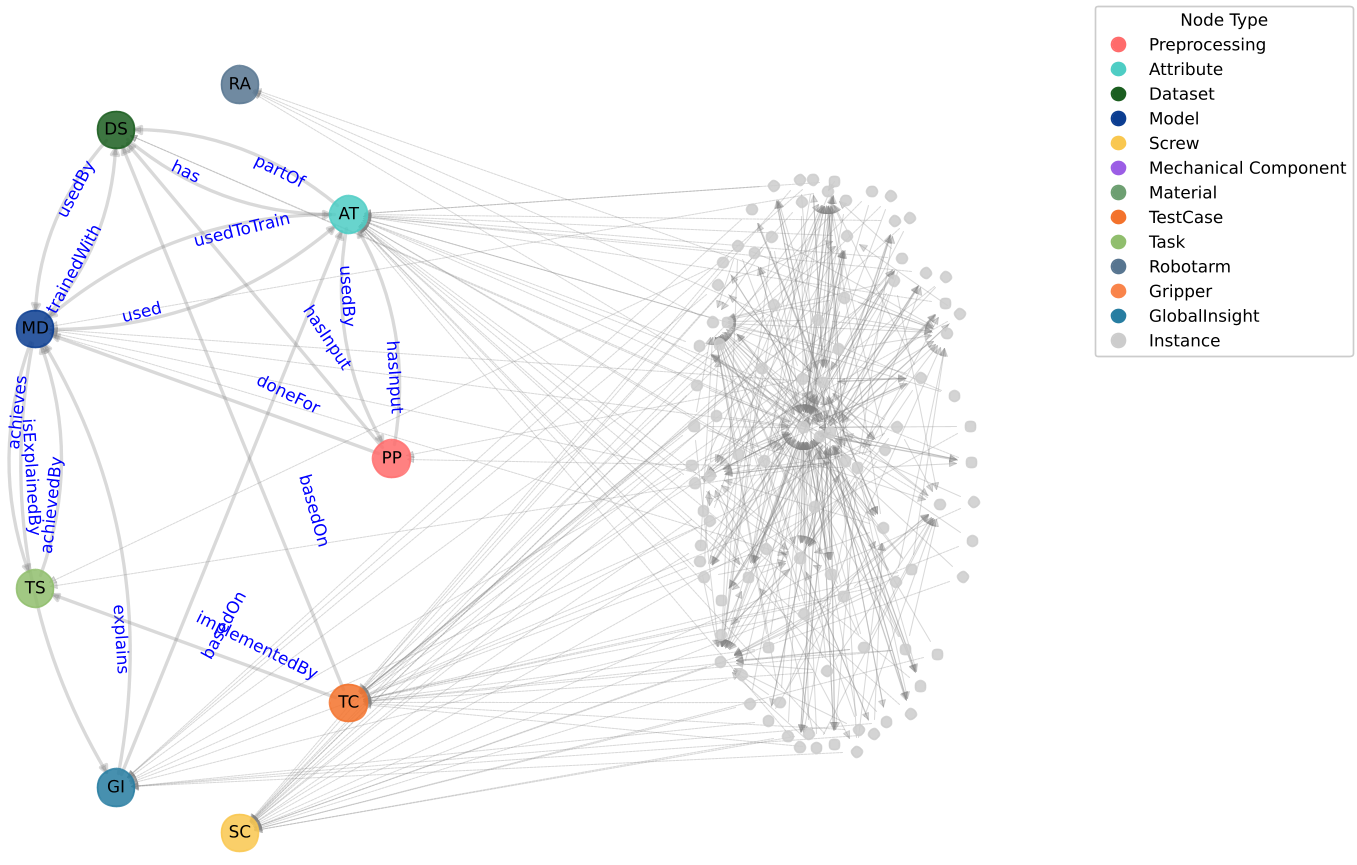

1. Extending ML-Schema

The researchers extended the standard ML-Schema ontology. This allows them to store not just the model's weights, but the entire lineage: the dataset, the specific ML task (e.g., predictive maintenance), and the resulting XAI explanations (Shapley values) all in one linked data structure.
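To make the "one linked data structure" idea concrete, here is a minimal sketch using plain Python tuples as triples. It is not the authors' schema: the `mls:` terms follow ML-Schema naming as I understand it, while every `ex:` term (including `ex:hasExplanation` and the value `0.85`) is a hypothetical placeholder invented for illustration.

```python
# Illustrative only: a tiny in-memory triple store, not the paper's ontology.
# "mls:" terms follow ML-Schema naming; "ex:" terms are hypothetical extensions.
from collections import defaultdict

TRIPLES = [
    ("ex:run1", "rdf:type", "mls:Run"),
    ("ex:run1", "mls:hasInput", "ex:screwDataset"),
    ("ex:run1", "mls:hasOutput", "ex:screwModel"),
    ("ex:run1", "mls:achieves", "ex:screwPlacementTask"),
    ("ex:screwDataset", "rdf:type", "mls:Dataset"),
    ("ex:screwModel", "rdf:type", "mls:Model"),
    ("ex:screwPlacementTask", "rdf:type", "mls:Task"),
    # Hypothetical extension: attach an XAI result to the model it explains.
    ("ex:screwModel", "ex:hasExplanation", "ex:shap1"),
    ("ex:shap1", "rdf:type", "ex:ShapleyExplanation"),
    ("ex:shap1", "ex:explainsFeature", "ex:screwAngle"),
    ("ex:shap1", "ex:hasValue", "0.85"),  # placeholder value, not a result
]


def build_index(triples):
    """Map each subject to its outgoing (predicate, object) edges."""
    index = defaultdict(list)
    for subject, predicate, obj in triples:
        index[subject].append((predicate, obj))
    return index


def lineage(index, start):
    """Collect every node reachable from `start` via outgoing edges."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        stack.extend(obj for _, obj in index.get(node, []))
    return seen


if __name__ == "__main__":
    index = build_index(TRIPLES)
    for node in sorted(lineage(index, "ex:run1")):
        print(node)
```

Starting from the run, a single traversal reaches the dataset, the task, the model, and the explanation attached to it, which is the property the extended ontology is meant to provide.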

2. Multi-turn Retrieval Logic

Instead of translating natural language into complex SPARQL queries (which often fail if the syntax is slightly off), the system uses an iterative "Branch-and-Bound" approach:

- Step 1 & 2: The LLM identifies relevant node classes (e.g., "Dataset") and starting nodes.

- Step 3: The LLM "walks" the graph. It looks at a node, asks for its neighbors, evaluates if that information is enough to answer the user, and either continues or stops.
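The walk in Step 3 can be sketched as a loop that expands a node, prunes edges judged irrelevant, and stops once the collected facts are enough. This is a rough sketch under stated assumptions, not the paper's implementation: the two LLM judgments are replaced by ordinary Python callbacks (`keep_edge`, `enough`), and the toy graph, node names, and `max_steps` limit are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Edge = Tuple[str, str]        # (predicate, neighbour)
Graph = Dict[str, List[Edge]]
Fact = Tuple[str, str, str]   # (node, predicate, neighbour)

# Hypothetical toy graph; names are placeholders, not the paper's KG.
GRAPH: Graph = {
    "Dataset:screws": [("usedBy", "Run:1")],
    "Run:1": [("produced", "Model:clf"), ("solves", "Task:placement")],
    "Model:clf": [("explainedBy", "Explanation:shap")],
    "Explanation:shap": [("topFeature", "Feature:screw_angle")],
}


def walk(
    graph: Graph,
    start: str,
    is_relevant: Callable[[str, str, str], bool],
    is_sufficient: Callable[[List[Fact]], bool],
    max_steps: int = 10,
) -> List[Fact]:
    """Iteratively expand nodes, keeping relevant edges, until facts suffice."""
    facts: List[Fact] = []
    frontier = [start]
    visited = set()
    steps = 0
    while frontier and steps < max_steps:
        node = frontier.pop(0)
        if node in visited:
            continue
        visited.add(node)
        steps += 1
        for predicate, neighbour in graph.get(node, []):
            if not is_relevant(node, predicate, neighbour):
                continue  # "bound": prune this edge and everything behind it
            facts.append((node, predicate, neighbour))
            frontier.append(neighbour)  # "branch": explore it later
        if is_sufficient(facts):
            break  # stand-in for the LLM deciding it can answer
    return facts


# Stand-ins for the two LLM judgments (assumptions for this sketch).
def keep_edge(node: str, predicate: str, neighbour: str) -> bool:
    return predicate != "solves"


def enough(facts: List[Fact]) -> bool:
    return any(predicate == "topFeature" for _, predicate, _ in facts)


if __name__ == "__main__":
    for fact in walk(GRAPH, "Dataset:screws", keep_edge, enough):
        print(fact)
```

Because each step only asks for a node's neighbours, no query string ever has to be generated, which is the failure mode the authors cite for SPARQL translation.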

Figure 1: The system architecture showing the relationship between ML-Schema classes and specific manufacturing instances.


Experiments: Real-World Robot Manipulation

The method was tested on a robotic manipulator task: predicting if a screw would be successfully placed based on geometry and arm attributes.

User Perception

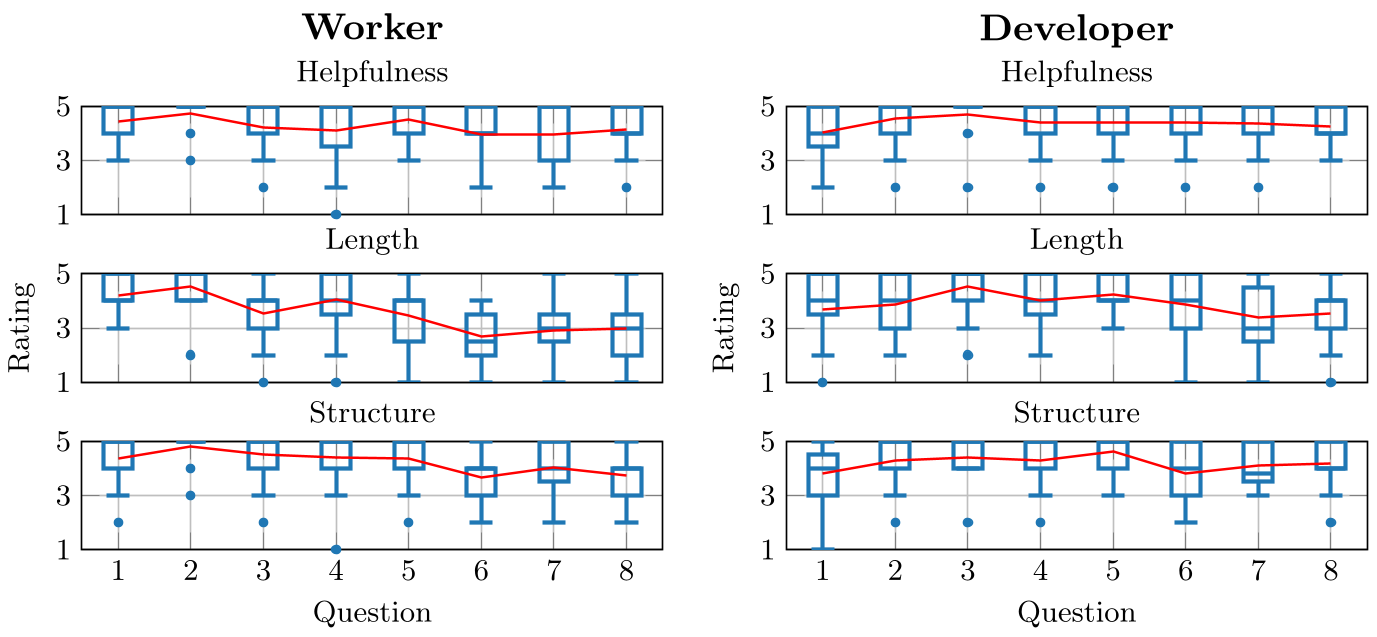

The study involved 20 AI professionals acting as "Developers" and "Workers."

- Clarity & Structure: Most users rated the system highly (4/5), noting that the structure of the explanation made the "black box" model feel transparent.

- Role Sensitivity: Interestingly, workers required more "definitions" of technical terms, while developers preferred conciseness—suggesting that future XAI must be persona-aware.

Figure 2: Distribution of user ratings across helpfulness, understandability, and structure.


Critical Insight: Why This Matters

This work represents a shift from agentic AI that simply "searches" to agents that "reason" over structured symbolic knowledge. Because it is grounded in a KG, the system is:

- Traceable: Every part of the explanation can be traced back to a specific triple in the KG.

- Dynamic: If the manufacturing process changes, you only update the KG—you don't need to retrain the LLM.
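Both properties can be illustrated with a few lines of Python. The sketch below is an assumption-laden toy, not the authors' system: the explanation text is produced by a string template rather than an LLM, and the entity names and torque values are invented placeholders. It shows each sentence carrying the triple it came from, and the output changing after a KG update with no model involved.

```python
from typing import List, Tuple

Triple = Tuple[str, str, str]

# Hypothetical facts; names and values are placeholders for illustration.
KG: List[Triple] = [
    ("gripper", "has_max_torque", "2 Nm"),
    ("gripper", "mounted_on", "arm A"),
]


def explain(kg: List[Triple], subject: str) -> List[Tuple[str, Triple]]:
    """Return (sentence, source_triple) pairs for every fact about `subject`."""
    return [
        (f"{s} {p.replace('_', ' ')} {o}.", (s, p, o))
        for (s, p, o) in kg
        if s == subject
    ]


def update(kg: List[Triple], subject: str, predicate: str, new_object: str) -> List[Triple]:
    """Return a copy of the KG with one fact's object replaced."""
    return [
        (s, p, new_object) if (s, p) == (subject, predicate) else (s, p, o)
        for (s, p, o) in kg
    ]


if __name__ == "__main__":
    for sentence, source in explain(KG, "gripper"):
        print(sentence, "<-", source)

    # The process changed: edit the graph, then ask again. Nothing is retrained.
    revised = update(KG, "gripper", "has_max_torque", "3 Nm")
    for sentence, source in explain(revised, "gripper"):
        print(sentence, "<-", source)
```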

Limitations & Future Work

The authors acknowledge that "interaction discipline" remains a challenge: the LLM sometimes overestimates its capabilities (e.g., claiming it can execute a command it can't). Future iterations will likely move toward smaller, local LLMs optimized for graph traversal to reduce latency and cost.

Conclusion

By grounding LLMs in the structured reality of Knowledge Graphs, we move closer to a "Digital Twin" that can not only simulate what's happening but explain why it's happening in plain English. For manufacturing, this is the bridge between experimental AI and reliable production-grade tools.