The paper introduces Natural-Language Agent Harnesses (NLAHs), a framework that externalizes the complex control logic of AI agents (reasoning loops, tool use, and state management) into portable, editable natural-language artifacts. These harnesses are executed by an Intelligent Harness Runtime (IHR), which the authors evaluate on coding and computer-use benchmarks such as SWE-bench Verified and OSWorld, reporting consistent, State-of-the-Art (SOTA) level results.

TL;DR

The performance of modern AI agents depends less on the "base model" and more on the harness: the stack of logic managing memory, tools, and multi-step reasoning. However, these harnesses are usually buried in messy Python code. This paper introduces Natural-Language Agent Harnesses (NLAHs) and an Intelligent Harness Runtime (IHR), turning agent logic into editable, portable natural-language files. By moving from "code-as-harness" to "text-as-harness," the researchers report a 55% performance boost on computer-use benchmarks while making agent behavior fully ablatable and transparent.

The Problem: The "Black Box" of Harness Engineering

In the current LLM landscape, we often compare "System A" to "System B," but we rarely know why one wins. Is it the prompt? The way it handles tool errors? Or the specific way it saves files?

Most agent systems scatter their logic across:

- Hard-coded controller scripts

- Hidden framework defaults (e.g., LangChain or AutoGPT internal logic)

- Implicit state assumptions

This makes "fair comparisons" impossible. The authors argue that we need a Pattern Layer—an explicit representation of the design patterns (like reflection, search, or verification) that can be studied as a scientific object.

Methodology: The NLAH + IHR Architecture

The researchers' core insight is that an LLM can not only perform a task but also interpret the rules of the orchestration itself.

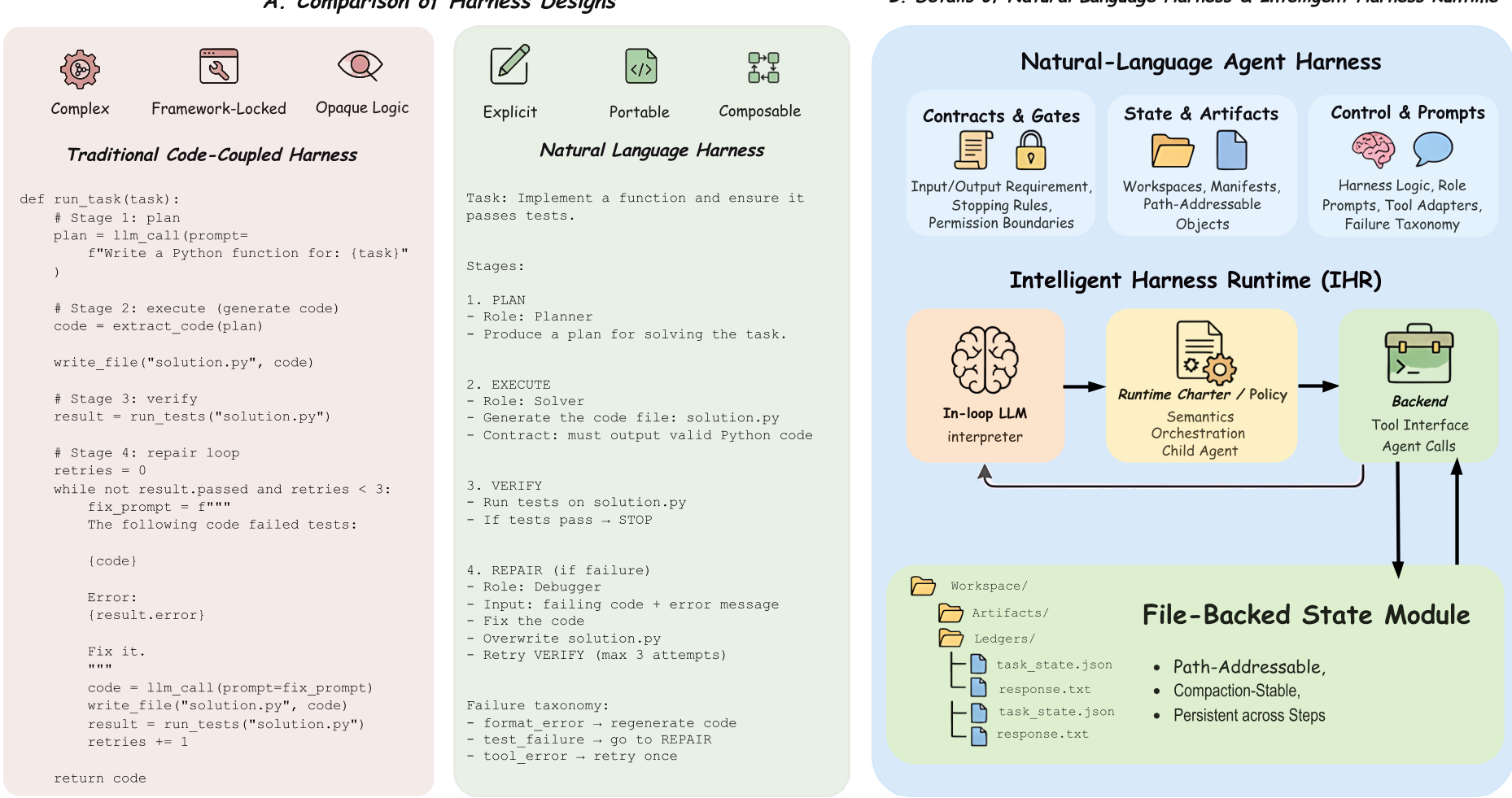

1. Natural-Language Agent Harnesses (NLAHs)

Instead of writing a complex while loop in Python to handle retries, an NLAH defines:

- Contracts: What must the output look like? (Validation gates)

- Roles: Who does what? (Solver vs. Verifier)

- State Semantics: How is data saved to disk so it survives a context reset?
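The three ingredients above can be pictured as a small data structure plus a validation gate. This is a minimal sketch, not the paper's format: the `Harness` class, the `passes_contract` helper, and the phrase-matching rule are all hypothetical, and a real NLAH would express contracts in free-form natural language rather than as required substrings.

```python
from dataclasses import dataclass, field


@dataclass
class Harness:
    """Hypothetical in-memory view of a natural-language harness."""
    roles: dict[str, str]            # role name -> natural-language duty
    contracts: dict[str, list[str]]  # role name -> phrases the output must contain
    state_files: list[str] = field(default_factory=list)  # files that persist state


def passes_contract(harness: Harness, role: str, output: str) -> bool:
    """Validation gate (assumed rule): every required phrase must appear."""
    return all(phrase in output for phrase in harness.contracts.get(role, []))


harness = Harness(
    roles={
        "solver": "Propose a patch for the failing test.",
        "verifier": "Run the tests and report PASS or FAIL.",
    },
    contracts={"solver": ["diff --git"], "verifier": ["RESULT:"]},
    state_files=["TASK.md", "STATE.md"],
)

print(passes_contract(harness, "verifier", "RESULT: PASS"))    # True
print(passes_contract(harness, "solver", "I think it works"))  # False
```

The point of the sketch is the separation of concerns: roles say who acts, contracts say what counts as an acceptable output, and the state files say where progress lives between steps.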

2. Intelligent Harness Runtime (IHR)

The IHR is the "Operating System" for the harness. It contains an in-loop LLM that reads the NLAH and decides which tool to call or which sub-agent to spawn.
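As a rough illustration of the runtime idea, the loop below asks a decision function for the next action at every step instead of hard-coding the sequence. Everything here is an assumption made for the sketch: `stub_llm` is a rule-based stand-in for the in-loop LLM (it ignores the harness text, which a real model would read), and `HARNESS_TEXT`, `TOOLS`, and `run` are hypothetical names.

```python
from typing import Callable

# Hypothetical harness text; a real NLAH would be much richer.
HARNESS_TEXT = """\
Role solver: propose a fix.
Role verifier: check the fix.
Stop when the verifier reports PASS.
"""


def stub_llm(harness_text: str, history: list[str]) -> str:
    """Stand-in for the in-loop LLM: picks the next action by fixed rules."""
    if not history:
        return "solve"
    if history[-1].startswith("solve"):
        return "verify"
    return "stop"


# Hypothetical tools; each returns a short log line.
TOOLS: dict[str, Callable[[], str]] = {
    "solve": lambda: "solve: patch drafted",
    "verify": lambda: "verify: PASS",
}


def run(harness_text: str, decide=stub_llm, max_steps: int = 10) -> list[str]:
    """Ask the decider for an action each step until it says stop."""
    history: list[str] = []
    for _ in range(max_steps):
        action = decide(harness_text, history)
        if action == "stop":
            break
        history.append(TOOLS[action]())
    return history


print(run(HARNESS_TEXT))  # ['solve: patch drafted', 'verify: PASS']
```

Swapping `stub_llm` for a model call would leave `run` unchanged, which is the property the article attributes to the IHR: the control flow lives in the harness text and the decider, not in the loop.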

Figure 1: The architecture of IHR executing an NLAH. Note the separation between the "Charter" (runtime policy) and "Harness Logic" (task-specific strategy).

Experiments: Proving the "Pattern Layer"

The authors tested their system on SWE-bench Verified (software engineering) and OSWorld (operating system use).

Key Insight 1: Behavior over Benchmarks

Interestingly, "Full IHR" (using the complete runtime + harness) didn't just marginally increase scores; it fundamentally changed how the agent worked. In the TRAE harness, 90% of all activity moved to delegated child agents, meaning the harness successfully managed a complex "manager-worker" hierarchy.

Key Insight 2: The Power of Module Ablation

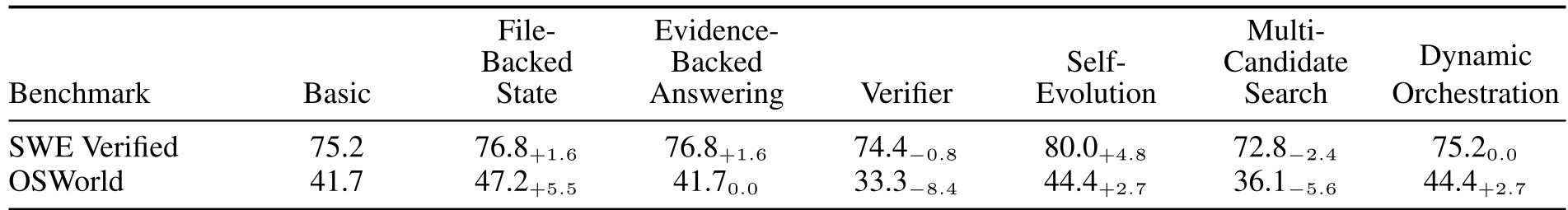

Because the harness is now modular, we can "plug and play" different strategies:

- Self-Evolution: +4.8% on SWE-bench. It forced the model to be more disciplined about its first attempt.

- File-Backed State: +5.5% on OSWorld. Externalizing memory to actual files (TASK.md, STATE.md) prevented the agent from "forgetting" its goal during long UI interactions.
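The File-Backed State idea can be sketched in a few lines. The file names TASK.md and STATE.md come from the description above; the `save_state` and `load_state` helpers and the one-note-per-line layout are assumptions for illustration, not the paper's actual file format.

```python
import tempfile
from pathlib import Path


def save_state(workdir: Path, task: str, progress: list[str]) -> None:
    """Write the goal and the progress notes to disk."""
    (workdir / "TASK.md").write_text(task + "\n", encoding="utf-8")
    (workdir / "STATE.md").write_text("\n".join(progress) + "\n", encoding="utf-8")


def load_state(workdir: Path) -> tuple[str, list[str]]:
    """Rebuild the goal and progress from disk, e.g. after a context reset."""
    task = (workdir / "TASK.md").read_text(encoding="utf-8").strip()
    lines = (workdir / "STATE.md").read_text(encoding="utf-8").splitlines()
    return task, [line for line in lines if line]  # drop empty lines


with tempfile.TemporaryDirectory() as tmp:
    workdir = Path(tmp)
    save_state(workdir, "Rename report.txt to final.txt", ["opened file manager"])
    # Simulate a context reset: nothing is kept in memory, only on disk.
    task, progress = load_state(workdir)

print(task)
print(progress)
```

Because the goal is re-read from disk rather than carried in the prompt, a long interaction that overflows the context window can still recover what it was trying to do.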

Table 1: The impact of different harness modules on performance across benchmarks. Note how Self-Evolution and File-Backed State offer consistent gains.
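One way to picture module ablation is to assemble the harness text from optional pieces and enumerate every on/off combination. This is a sketch under stated assumptions: the module texts, `BASE`, `build_harness`, and `ablation_grid` are invented for illustration, and the sketch only builds the variants; it does not run or score them.

```python
from itertools import product

# Hypothetical module texts; the names mirror the modules discussed above.
MODULES = {
    "self_evolution": "After each attempt, record what failed and revise the plan.",
    "file_backed_state": "Keep the goal in TASK.md and progress in STATE.md.",
}
BASE = "You are a coding agent. Solve the task step by step."


def build_harness(enabled: set[str]) -> str:
    """Assemble harness text from the base plus the enabled modules."""
    parts = [BASE] + [text for name, text in MODULES.items() if name in enabled]
    return "\n".join(parts)


def ablation_grid() -> list[set[str]]:
    """Every on/off combination of the modules."""
    names = list(MODULES)
    return [
        {name for name, on in zip(names, flags) if on}
        for flags in product([False, True], repeat=len(names))
    ]


for config in ablation_grid():
    print(sorted(config), "->", len(build_harness(config).splitlines()), "lines")
```

Each variant differs from the others only in which module paragraphs are present, so a score difference between two runs can be attributed to a named module rather than to an untracked code change.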

Deep Insight: Why Natural Language?

One might ask: "Isn't code more precise than natural language?"

The authors' counter-intuitive finding is that Natural Language carries high-level intent better. While code is great for the "how" (using a tool), natural language is superior for the "what" (defining a contract or a role). By using text, the agent can adapt its orchestration strategy dynamically, which is vital for open-ended tasks like fixing a repository bug it has never seen before.

Conclusion & Radical Analysis

NLAH is a step toward Harness Representation Science. Its results suggest that the "scaffold" matters as much as the "model."

Critical Limitation: The cost is high. Using an LLM to "orchestrate" at every step increases token usage significantly. However, as "inference-time scaling" becomes the new meta, these costs may be the necessary price for agents that can reliably solve multi-hour tasks without drifting off-track.

Future Outlook: We are moving toward a world where "Agent Developers" won't write code; they will design "Logic Modules" in natural language that are automatically optimized by faster, cheaper runtime models.