This paper introduces Multi-Dependency PIBT (MD-PIBT), a general framework for Multi-Agent Path Finding (MAPF) that treats agent planning as a search over dependency graphs. It extends the popular Priority Inheritance with Backtracking (PIBT) algorithm to handle multi-step planning where a single agent can conflict with multiple others, achieving state-of-the-art performance in complex, large-agent environments.

TL;DR

Planning for thousands of robots in a heartbeat is the "Holy Grail" of automated warehousing. While algorithms like PIBT have dominated this space due to their speed, they fail when a single large robot blocks multiple smaller ones. This paper introduces MD-PIBT, a framework that breaks the "one-agent-at-a-time" limitation by modeling agent conflicts as a dynamic Dependency Graph.

Problem & Motivation: The "One-Step" Bottleneck

Modern Multi-Agent Path Finding (MAPF) requires sub-second solutions for up to 10,000 agents. Priority Inheritance with Backtracking (PIBT) succeeds because it is rule-based: if Agent A bumps into Agent B, B inherits A's priority to move out of the way.

However, PIBT is myopic. It assumes:

- Single Dependency: A path can only conflict with one other agent at a time.

- Short Horizons: It primarily reasons about one-step actions.

When we introduce large agents (e.g., a heavy-duty pallet mover) or kinodynamic constraints (rotation, acceleration), this assumption collapses. A large agent might conflict with three small agents at once; PIBT simply doesn't know who to "push" first, leading to deadlocks.

Methodology: Thinking in Graphs, Not Rules

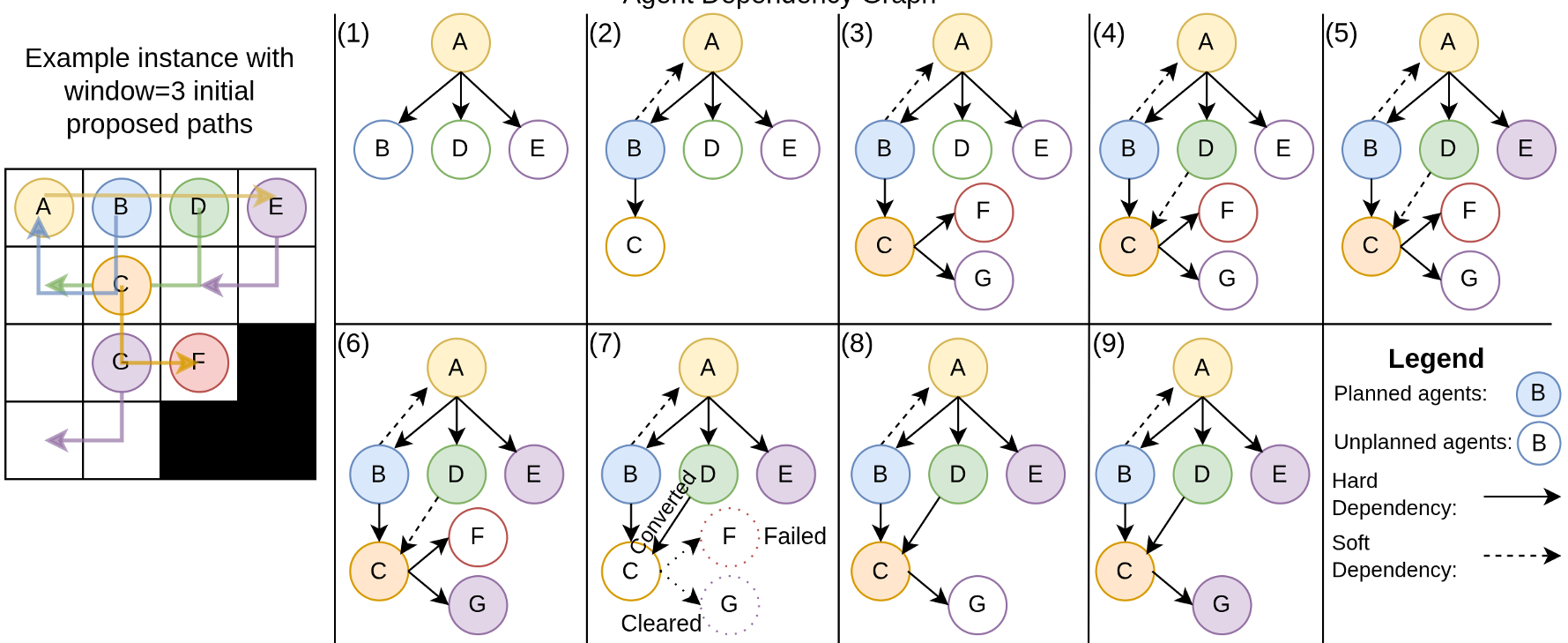

The core insight of MD-PIBT is the Agent Dependency Graph (AgDG). Instead of following a fixed list of rules, the algorithm dynamically builds a graph of who is blocking whom.

1. Hard vs. Soft Dependencies

- Hard Dependency ($a_i o a_j$): Agent $a_i$ wants a path that occupies the "safe zone" of unplanned Agent $a_j$. $a_j$ must find a new path.

- Soft Dependency ($a_i \dashrightarrow a_j$): $a_i$ conflicts with $a_j$, but $a_j$ is already planned. This serves as a "wait-and-see" flag in case $a_j$ needs to replan later.

2. Multi-Step Planning with $C$-Collisions

Unlike previous methods, MD-PIBT allows an agent to propose a path that conflicts with $C$ other agents. This allows for "aggressive" planning where a high-priority agent can force multiple low-priority agents to coordinate their escape simultaneously.

Fig 1: The AgDG in action. In step (1), Agent A creates hard dependencies for B, D, and E simultaneously. The algorithm then recursively resolves these based on the graph topology.

Fig 1: The AgDG in action. In step (1), Agent A creates hard dependencies for B, D, and E simultaneously. The algorithm then recursively resolves these based on the graph topology.

Experiments & Results

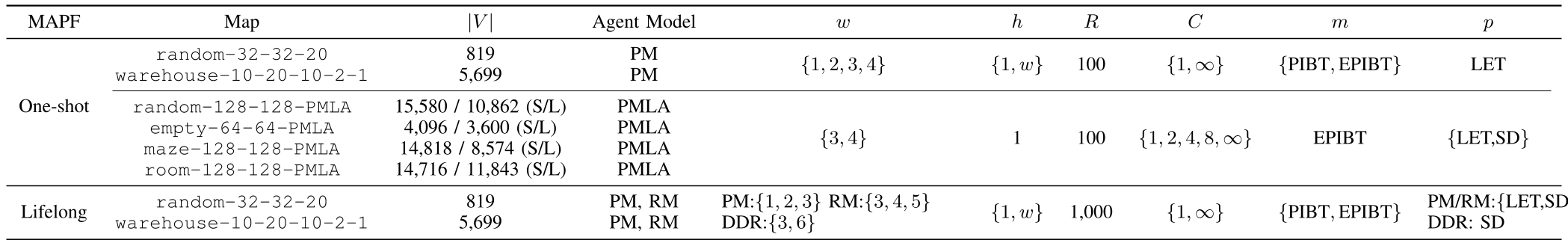

The authors evaluated MD-PIBT across four models: Pebble Motion (PM), Large Agents (PMLA), Rotation Motion (RM), and Differential Drive (DDR).

Solving the "Large Agent" Crisis

In environments where robots vary in size, standard EPIBT fails quickly as density increases. MD-PIBT, by setting $C > 1$, allows large robots to effectively clear paths.

- Success Rate: Significantly higher in maze and warehouse maps for large agents.

- Suboptimality: Lower sum-of-cost compared to EPIBT.

Table 1: Hyper-parameter search across different MAPF variants. MD-PIBT acts as a "super-set" that can replicate PIBT/EPIBT or unlock new capabilities.

Table 1: Hyper-parameter search across different MAPF variants. MD-PIBT acts as a "super-set" that can replicate PIBT/EPIBT or unlock new capabilities.

Scalability to 10,000 Robots

The algorithm maintains the "rule-based" speed advantage. Even with the overhead of graph manipulation, it remains orders of magnitude faster than complete search methods like CBS (Conflict-Based Search) while being more robust than simple PIBT.

Critical Analysis & Conclusion

Takeaway: MD-PIBT is a unifying framework. By generalizing "Priority Inheritance" into "Dependency Search," the authors have provided a tool that is agnostic to the underlying robot physics.

Limitations:

- Exponential Worst-Case: Theoretically, the dependencies could lead to exponential replanning calls (though the $R$ limit hyper-parameter effectively mitigates this in practice).

- Hyper-parameter Sensitivity: The choice of window size $w$, simulation window $h$, and collision limit $C$ is map-dependent, requiring some tuning for optimal performance.

Future Outlook: MD-PIBT could serve as a "safety shield" for reinforcement learning policies, where the RL policy proposes multi-step actions and MD-PIBT ensures they are collision-free via graph-based conflict resolution.