WildWorld is a massive action-conditioned world modeling dataset containing 108 million frames and 450+ semantically rich actions (attacks, skills, movement), curated from the AAA game Monster Hunter: Wilds. It introduces explicit state annotations (skeletons, HP, coordinates) and the WildBench benchmark to evaluate "Action Following" and "State Alignment" in generative world models.

TL;DR

WildWorld is a 108M-frame dataset derived from Monster Hunter: Wilds, designed to address the "state blindness" of current world models, which see pixels but not the variables that drive them. It provides 450+ actions and explicit state data (HP, skeletons, equipment). With that data, models can be trained to do more than "guess" the next pixel: they can capture the underlying game logic and the state transitions a true generative ARPG requires.

Problem & Motivation: The "Pixel-State" Entanglement

Current video world models (like Sora or early Genie) often suffer from a fundamental flaw: they treat the world as a sequence of pixels rather than a dynamical system.

In a typical dataset, the action "Shoot" is directly mapped to the visual effect of a muzzle flash. But what happens if the gun is out of ammo? Without access to the latent state (ammo count), a model will likely hallucinate a flash because it only knows the visual correlation. This lack of structured world dynamics leads to "drifting" (where the world becomes inconsistent over time) and a lack of true interactivity.

The authors argue that to build a generative game, the model must differentiate between:

- Actions: The control inputs.

- States: The underlying variables (HP, position, animation frame).

- Observations: The rendered RGB frames.
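A toy version of the ammo example makes the action/state/observation split concrete. This is a minimal sketch under stated assumptions. The names `State`, `step`, `render`, `pixel_only_guess`, and `rollout` are hypothetical. The state holds only ammo, x-position, and a "fired" flag, and a dictionary stands in for an RGB frame. `step` plays the role of s_{t+1} = f(s_t, a_t) and `render` the role of o_t = g(s_t).

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class State:
    # Hypothetical latent variables; a real game tracks far more.
    ammo: int
    x: float
    fired: bool = False  # did a shot go off on this tick?


def step(state: State, action: str) -> State:
    """Transition s_{t+1} = f(s_t, a_t): the action only matters through the state."""
    if action == "shoot" and state.ammo > 0:
        return replace(state, ammo=state.ammo - 1, fired=True)
    if action == "move_right":
        return replace(state, x=state.x + 1.0, fired=False)
    return replace(state, fired=False)  # e.g. "shoot" with an empty gun


def render(state: State) -> dict:
    """Observation o_t = g(s_t); a dict stands in for the rendered frame."""
    return {"muzzle_flash": state.fired, "x": state.x}


def pixel_only_guess(action: str) -> dict:
    """A model that only learned the visual correlation 'shoot -> flash'."""
    return {"muzzle_flash": action == "shoot"}


def rollout(state: State, actions: list[str]) -> tuple[list[bool], list[bool]]:
    """Compare true flashes (from state) with a pixel-only model's guesses."""
    true_flashes, guessed_flashes = [], []
    for action in actions:
        state = step(state, action)
        true_flashes.append(render(state)["muzzle_flash"])
        guessed_flashes.append(pixel_only_guess(action)["muzzle_flash"])
    return true_flashes, guessed_flashes


truth, guess = rollout(State(ammo=1, x=0.0), ["shoot", "shoot"])
print(truth, guess)  # [True, False] [True, True]
```

The second "shoot" exposes the gap. The state-aware path knows the magazine is empty and renders no flash. The correlation-only guess still hallucinates one.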

WildWorld: A AAA-Scale Lab for World Models

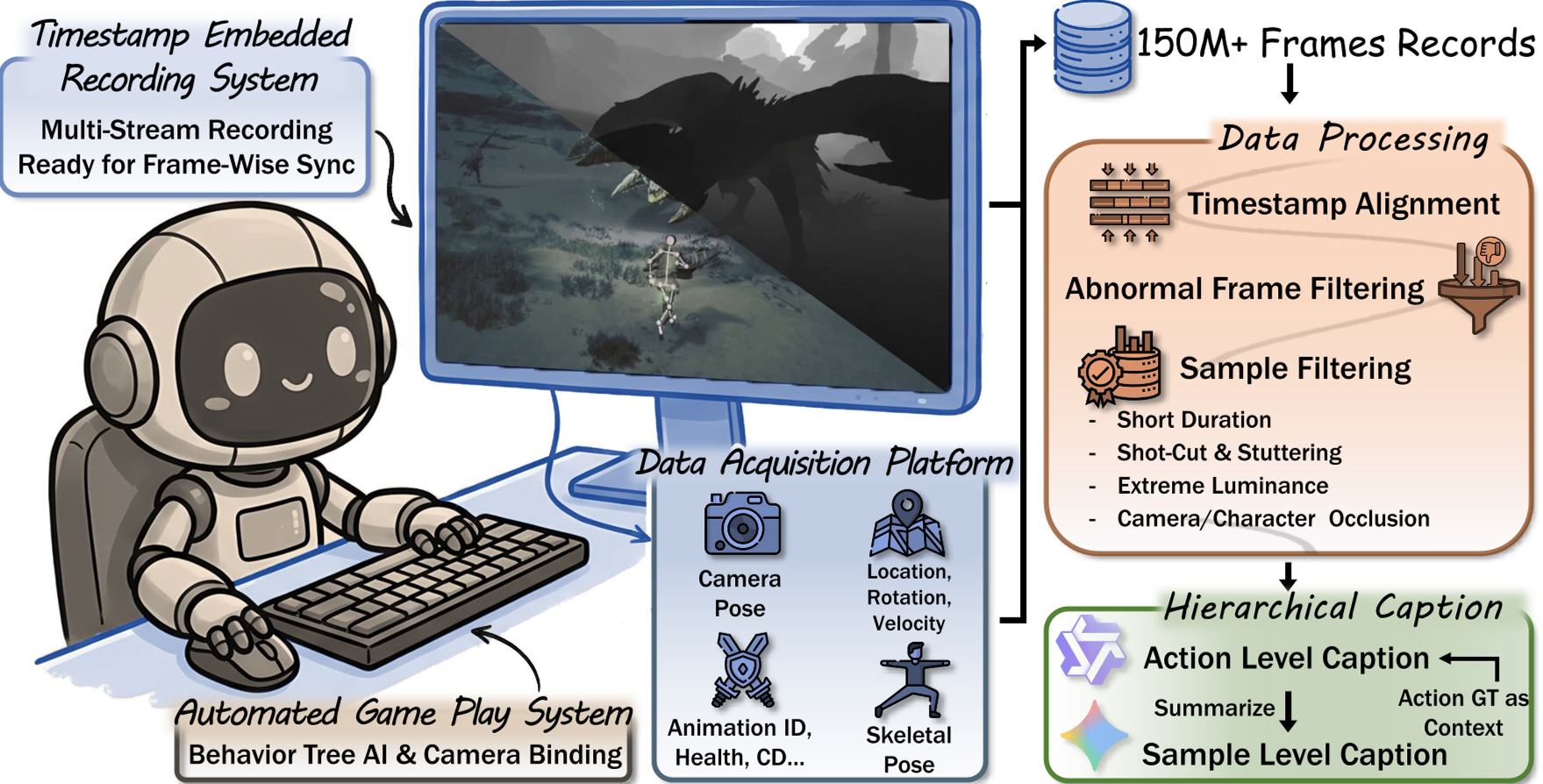

To bridge this gap, the researchers built a toolchain within Monster Hunter: Wilds to record synchronized data at the engine level.

1. The Dataset Composition

- Scale: 108 million frames and 5,960 unique action triplets.

- Modalities: RGB, depth, camera poses, 3D skeletons, and world states (attributes such as HP and stamina).

- Automation: Rule-based companion AI agents play the game autonomously, producing diverse, high-dimensional trajectories across 29 monster species and multiple weapon types.
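The sketch below shows how a per-frame record bundling these modalities might look. The schema is hypothetical: the class name `WildWorldFrame`, the field names, the array shapes, and the example state keys are assumptions for illustration, not the released data format. The `validate` method checks that the synchronized modalities agree with each other.

```python
from dataclasses import dataclass, field

import numpy as np


@dataclass
class WildWorldFrame:
    """Hypothetical per-frame record; names and shapes are illustrative only."""
    frame_idx: int
    action: str                 # e.g. "heavy_attack" (label names are assumptions)
    rgb: np.ndarray             # (H, W, 3) uint8 rendered frame
    depth: np.ndarray           # (H, W) depth map at the same resolution
    camera_pose: np.ndarray     # (4, 4) camera transform for this frame
    skeleton: np.ndarray        # (J, 3) 3D joint positions
    state: dict = field(default_factory=dict)  # e.g. {"hp": 0.8, "stamina": 0.5}

    def validate(self) -> None:
        """Raise ValueError if the modalities are not mutually consistent."""
        if self.rgb.ndim != 3 or self.rgb.shape[2] != 3 or self.rgb.dtype != np.uint8:
            raise ValueError("rgb must be (H, W, 3) uint8")
        h, w, _ = self.rgb.shape
        if self.depth.shape != (h, w):
            raise ValueError("depth must match the RGB resolution")
        if self.camera_pose.shape != (4, 4):
            raise ValueError("camera_pose must be a 4x4 matrix")
        if self.skeleton.ndim != 2 or self.skeleton.shape[1] != 3:
            raise ValueError("skeleton must be (J, 3)")


frame = WildWorldFrame(
    frame_idx=0,
    action="heavy_attack",
    rgb=np.zeros((4, 6, 3), dtype=np.uint8),
    depth=np.zeros((4, 6), dtype=np.float32),
    camera_pose=np.eye(4),
    skeleton=np.zeros((17, 3)),
    state={"hp": 0.8, "stamina": 0.5},
)
frame.validate()  # passes silently
```

Keeping the modalities in one record makes it hard to accidentally pair a frame with the wrong state, which matters when the whole point of the dataset is engine-level synchronization.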

Methodology: Injecting "State" into Diffusion

The core technical contribution is the StateCtrl architecture. Unlike traditional models that only take a text prompt or a previous frame, StateCtrl treats "State" as a first-class citizen:

- State Embedding: Discrete states (weapon type) and continuous states (coordinates/health) are mapped into a unified feature space.

- State-Aware DiT: These embeddings are injected into the intermediate layers of a Diffusion Transformer.

- Autoregressive Prediction (StateCtrl-AR): During inference, the model predicts the next state and then uses that predicted state to generate the next frame, mimicking a real game engine's update loop.
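The embedding and injection steps can be sketched in NumPy. The source says only that state embeddings are injected into intermediate DiT layers. The mechanism below is an assumption: it sums a weapon-type lookup with a linear projection of continuous values, then applies adaLN-style scale-and-shift modulation as in the original DiT. All names and sizes are also assumptions, including `NUM_WEAPONS`, `CONT_DIM`, `D_MODEL`, `embed_state`, and `inject`. Weights are random here; in a real model they would be learned.

```python
import numpy as np

rng = np.random.default_rng(0)

NUM_WEAPONS = 14   # hypothetical size of the discrete weapon-type vocabulary
CONT_DIM = 4       # hypothetical continuous state: x, y, z, hp (assumed normalized)
D_MODEL = 64       # hypothetical transformer width

# Parameters, randomly initialized for illustration.
weapon_table = rng.normal(0.0, 0.02, (NUM_WEAPONS, D_MODEL))
cont_proj = rng.normal(0.0, 0.02, (CONT_DIM, D_MODEL))
mod_proj = rng.normal(0.0, 0.02, (D_MODEL, 2 * D_MODEL))  # -> (scale, shift)


def embed_state(weapon_id: int, cont: np.ndarray) -> np.ndarray:
    """Map a discrete id and a continuous vector into one D_MODEL feature."""
    return weapon_table[weapon_id] + cont @ cont_proj


def layer_norm(x: np.ndarray, eps: float = 1e-6) -> np.ndarray:
    """LayerNorm without a learned affine; adaLN supplies scale/shift instead."""
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)


def inject(hidden: np.ndarray, state_emb: np.ndarray) -> np.ndarray:
    """adaLN-style modulation of (T, D_MODEL) tokens by a (D_MODEL,) state."""
    scale, shift = np.split(state_emb @ mod_proj, 2)
    return layer_norm(hidden) * (1.0 + scale) + shift


hidden = rng.normal(size=(16, D_MODEL))  # 16 video tokens from some DiT block
state_emb = embed_state(weapon_id=3, cont=np.array([0.1, -0.4, 0.0, 0.75]))
out = inject(hidden, state_emb)
print(out.shape)  # (16, 64)
```

Summing the two embeddings keeps one vector per timestep regardless of how many state variables exist. Concatenation followed by a projection is an equally plausible alternative.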

WildBench: How to Measure a World Model?

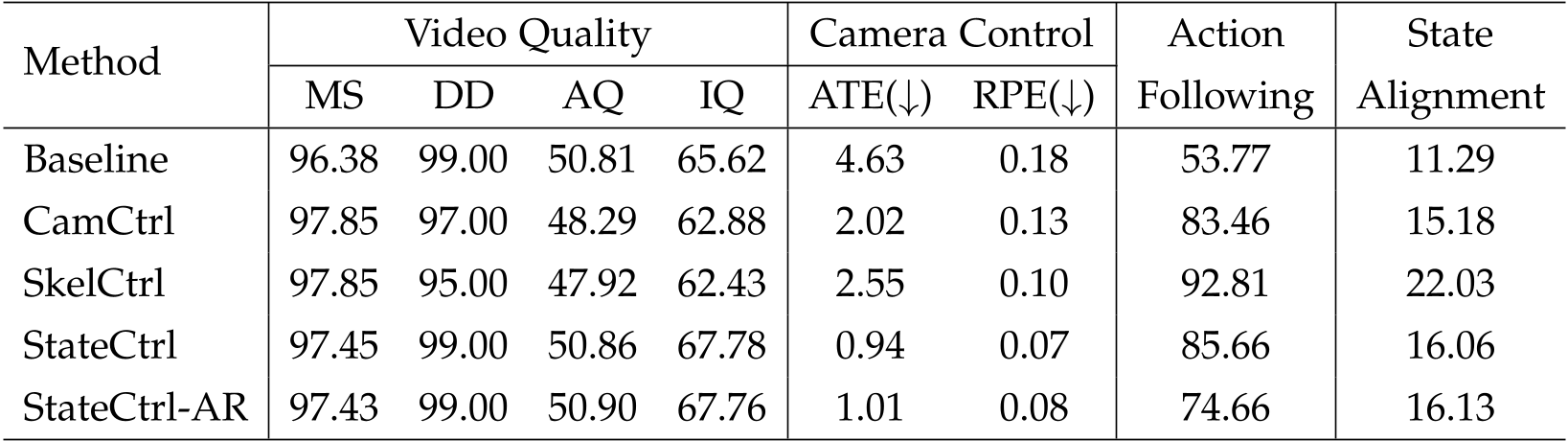

Standard metrics like FVD or PSNR measure visual quality, not whether the video obeys the commanded action or the underlying state. WildBench therefore introduces two additional metrics:

- Action Following: Does the generated character actually perform a "Heavy Attack" when told to? (Evaluated via VLM or human judgment.)

- State Alignment: Uses point tracking (TAPNext) to check whether the character's motion in the generated video matches the ground-truth 3D skeleton trajectory.
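One way to turn "does the motion match the skeleton?" into a number is to project the ground-truth 3D joints into each frame and count how often the tracked 2D points land nearby, in the style of a PCK (percentage of correct keypoints) score. This is a hypothetical stand-in, not WildBench's published formula. The functions `project` and `alignment_score`, the pinhole camera model, and the 10-pixel threshold are assumptions. The tracked points are taken as given arrays rather than produced by TAPNext.

```python
import numpy as np


def project(points_world: np.ndarray, world_to_cam: np.ndarray, K: np.ndarray) -> np.ndarray:
    """Pinhole projection of (N, 3) world points to (N, 2) pixel coordinates."""
    homo = np.hstack([points_world, np.ones((len(points_world), 1))])
    cam = (world_to_cam @ homo.T).T[:, :3]     # world -> camera frame
    uvw = (K @ cam.T).T                        # camera frame -> image plane
    return uvw[:, :2] / uvw[:, 2:3]            # perspective divide


def alignment_score(tracked_2d, joints_3d, world_to_cam, K, thresh_px=10.0) -> float:
    """Fraction of (frame, joint) pairs whose tracked point is within thresh_px
    of the projected ground-truth joint.

    tracked_2d: (T, J, 2); joints_3d: (T, J, 3); world_to_cam: (T, 4, 4).
    """
    hits = []
    for t in range(len(tracked_2d)):
        gt_2d = project(joints_3d[t], world_to_cam[t], K)
        err = np.linalg.norm(tracked_2d[t] - gt_2d, axis=-1)
        hits.append(err < thresh_px)
    return float(np.mean(hits))
```

A threshold-based score like this is robust to a few wildly wrong tracks. An average pixel error would instead let a single failure dominate.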

Key Results

| Method | Video Quality (MS) | Action Following | State Alignment |
| :--- | :--- | :--- | :--- |
| Baseline | 96.38 | 53.77 | 11.29 |
| SkelCtrl | 97.85 | 92.81 | 22.03 |
| StateCtrl | 97.45 | 85.66 | 16.06 |

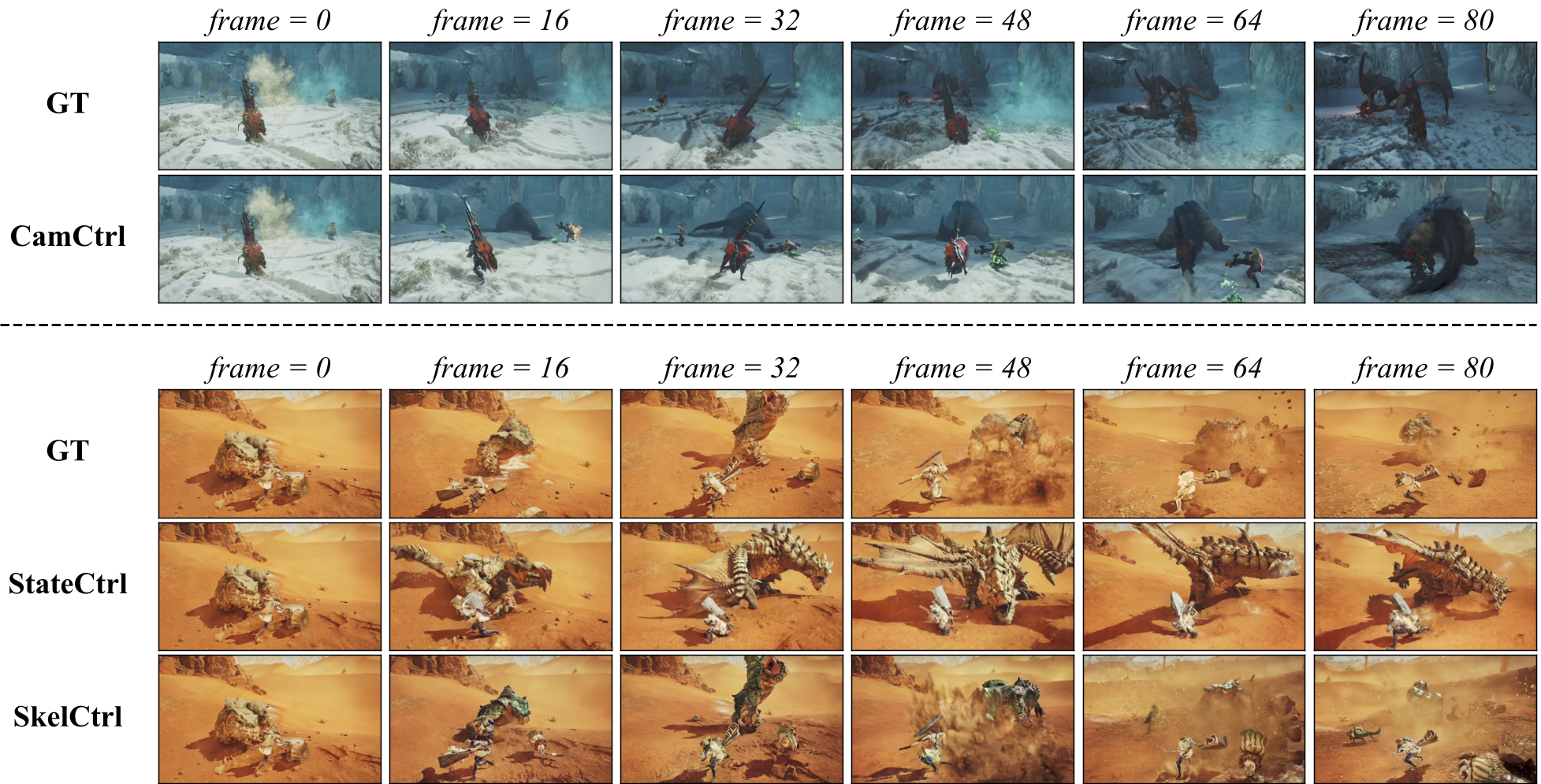

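The results suggest a trade-off. Skeleton-based control (SkelCtrl) scores highest on Action Following (92.81) and State Alignment (22.03), likely because the skeleton gives the model a strong visual "grid" to follow. State-based control (StateCtrl) trails on both. It is reported to preserve higher aesthetic quality (AQ), an image-quality score not listed in the table above, plausibly because abstract state leaves the model more freedom in how it renders pixels. On the MS column shown, SkelCtrl (97.85) is slightly ahead of StateCtrl (97.45).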
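A few lines of arithmetic show each method's gain over the baseline. The numbers are copied from the table; the dictionary keys are just labels for its columns.

```python
# Scores copied from the results table.
results = {
    "Baseline":  {"MS": 96.38, "action_following": 53.77, "state_alignment": 11.29},
    "SkelCtrl":  {"MS": 97.85, "action_following": 92.81, "state_alignment": 22.03},
    "StateCtrl": {"MS": 97.45, "action_following": 85.66, "state_alignment": 16.06},
}


def gains_over(baseline: str, table: dict) -> dict:
    """Absolute point gain of every other method over the baseline, per metric."""
    base = table[baseline]
    return {
        method: {metric: round(score - base[metric], 2) for metric, score in scores.items()}
        for method, scores in table.items()
        if method != baseline
    }


for method, deltas in gains_over("Baseline", results).items():
    print(method, deltas)
# SkelCtrl {'MS': 1.47, 'action_following': 39.04, 'state_alignment': 10.74}
# StateCtrl {'MS': 1.07, 'action_following': 31.89, 'state_alignment': 4.77}
```

By these numbers, StateCtrl recovers about 82% of SkelCtrl's Action Following gain (31.89 vs. 39.04 points). It recovers only about 44% of SkelCtrl's State Alignment gain (4.77 vs. 10.74 points), which is consistent with the trade-off described above.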

Critical Analysis & Conclusion

WildWorld represents a shift toward "AI-native games." The authors show that conditioning on explicit state (or skeleton) annotations substantially improves action following and state alignment over a baseline. In doing so, they have laid the groundwork for generative environments that aren't just "dreaming" videos but are beginning to simulate 3D game logic.

Limitations:

- The best State Alignment score (22.03, for SkelCtrl) is still relatively low. Even with a 108M-frame dataset, following exact 3D trajectories in a 2D diffusion process remains a major challenge.

- Autoregressive state prediction (StateCtrl-AR) suffers from error accumulation, a classic "compounding error" problem in RL and time-series forecasting.
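The compounding-error problem can be seen in a toy rollout. The dynamics and the slightly biased "learned" predictor below are hypothetical, as are the function names. The point is the contrast between two modes. Under teacher forcing, each prediction starts from the ground-truth state. Under autoregressive rollout, each prediction starts from the model's own previous output, as in StateCtrl-AR.

```python
import numpy as np


def true_step(s: np.ndarray) -> np.ndarray:
    """Ground-truth dynamics: the character moves +1 along x per tick."""
    return s + np.array([1.0, 0.0])


def learned_step(s: np.ndarray) -> np.ndarray:
    """Hypothetical learned predictor with a small systematic drift in y."""
    return s + np.array([1.0, 0.02])


def rollout_errors(s0: np.ndarray, steps: int, teacher_forcing: bool) -> list[float]:
    """Per-step distance between ground-truth and predicted states."""
    gt, pred = [s0], [s0]
    for _ in range(steps):
        gt.append(true_step(gt[-1]))
        # Teacher forcing restarts from the true previous state;
        # autoregressive mode feeds back the model's own prediction.
        src = gt[-2] if teacher_forcing else pred[-1]
        pred.append(learned_step(src))
        # In a StateCtrl-AR-style loop, pred[-1] would now condition the next frame.
    return [float(np.linalg.norm(g - p)) for g, p in zip(gt, pred)]


start = np.zeros(2)
print(rollout_errors(start, 10, teacher_forcing=True)[-1])   # stays about 0.02
print(rollout_errors(start, 10, teacher_forcing=False)[-1])  # grows to about 0.2
```

Real models drift in less tidy ways. Still, the flat teacher-forced error versus the linearly growing autoregressive error is the same qualitative gap.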

Future Outlook: The next step is likely a move beyond "capturing" game data to "closed-loop" generation. In that setting, a generative world model replaces the game engine entirely, controlled only by a player's controller inputs and a latent state buffer.