The paper introduces Bearing-UAV, a vision-only navigation framework that jointly regresses a UAV's absolute position and heading by matching aerial views with adjacent satellite tiles. It achieves state-of-the-art (SOTA) performance in GNSS-denied environments and introduces the Bearing-UAV-90K multi-city benchmark.

Executive Summary

TL;DR: Bearing-UAV is a vision-only navigation system that abandons the traditional "Matching-to-Tile" (M2T) retrieval paradigm in favor of a joint position-and-heading regression network. By integrating features from four adjacent satellite tiles through cross-attention, it reports a localization error of 8.61 m and a mean heading error of 12.9°, which is enough for UAVs to follow winding urban routes without GNSS.

Background: Most UAV localization methods treat the problem as a retrieval task: find the "best-matching" satellite image in a database. Bearing-UAV reframes it as a regression problem, marking a significant step toward end-to-end autonomous flight.

Problem & Motivation: The "Glass Ceiling" of Tile Matching

Existing Cross-View Geo-Localization (CVGL) methods suffer from an inherent trade-off. Higher accuracy requires a denser grid of satellite tiles, and halving the tile spacing quadruples the number of tiles, so storage and matching cost grow quadratically.

Moreover, they ignore heading. A UAV that knows where it is but not which way it is facing cannot navigate effectively; it can only "hover" or "drift." Current datasets also assume perfectly aligned, north-facing images, an assumption that falls apart in the wild, where UAVs rotate arbitrarily and encounter viewpoint parallax.
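
To make the density trade-off concrete, here is a minimal sketch that counts the tiles needed to cover a square area on a regular grid. The 2 km area and the spacings are illustrative assumptions, not values from the paper, and `tile_count` is a hypothetical helper.

```python
import math

def tile_count(area_side_m: float, tile_spacing_m: float) -> int:
    """Number of tiles needed to cover a square area on a regular grid."""
    per_side = math.ceil(area_side_m / tile_spacing_m)
    return per_side * per_side

# Hypothetical 2 km x 2 km area; spacings are illustrative only.
for spacing in (100.0, 50.0, 25.0):
    print(f"{spacing:.0f} m spacing -> {tile_count(2000.0, spacing)} tiles")
```

Each halving of the spacing multiplies the tile count by four (400, 1600, 6400 tiles here), which is the quadratic growth that a retrieval-only pipeline has to pay for finer resolution.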

Methodology: Beyond Discrete Grids

The core of Bearing-UAV lies in its ability to treat the satellite map as a continuous space rather than a set of discrete tiles.

1. Global-Local Unity Feature (GLUF)

Instead of relying on a plain CNN backbone, the authors use a clustering-based module that aggregates local descriptors into semi-global representations. The goal is that even if only 30% of a UAV's view overlaps with a satellite tile, the model can still find geometric correspondences.
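
The summary does not spell out how GLUF clusters descriptors, so the following is only a generic illustration of clustering-based aggregation: soft-assignment pooling of local descriptors onto a fixed set of centers. The function name, the softmax-over-distance assignment, and the `temperature` parameter are assumptions for this sketch, not the authors' design.

```python
import math

def soft_cluster_pool(descriptors, centers, temperature=1.0):
    """Pool N local descriptors into K cluster-level vectors via soft assignment."""
    dim = len(centers[0])
    sums = [[0.0] * dim for _ in centers]
    weights = [0.0] * len(centers)
    for d in descriptors:
        # Squared distance to each center, turned into softmax weights
        # (a closer center receives a larger weight).
        dists = [sum((a - b) ** 2 for a, b in zip(d, c)) for c in centers]
        m = min(dists)
        exps = [math.exp(-(x - m) / temperature) for x in dists]
        z = sum(exps)
        for k, e in enumerate(exps):
            w = e / z
            weights[k] += w
            for j in range(dim):
                sums[k][j] += w * d[j]
    # Weighted mean per cluster; clusters that received no mass stay at zero.
    return [[s / w if w > 1e-12 else 0.0 for s in row]
            for row, w in zip(sums, weights)]

# Toy 2-D descriptors: two near the first center, one near the second.
pooled = soft_cluster_pool([[0.0, 0.0], [0.2, 0.0], [10.0, 10.0]],
                           [[0.0, 0.0], [10.0, 10.0]])
```

Because each pooled vector summarizes one region of descriptor space, two views that share only some of their content can still agree on the clusters they do share, which is the intuition behind using semi-global features under partial overlap.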

2. Relative Coordinate Encoder (RCE)

The model does not rely on image content alone; it also encodes spatial layout. By encoding the relative coordinates of four adjacent tiles, the network learns the "spatial context" of the UAV's current position within a 2x2 block.
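
As a rough illustration of how relative tile coordinates could enter a cross-attention fusion step, the sketch below adds a small sinusoidal encoding of each tile's offset to its feature vector and lets a single UAV query attend over the four tiles. The offset layout, the 4-dimensional encoding, and both function names are assumptions made for this example; the paper's actual RCE and attention layers may differ.

```python
import math

# Relative centers of the four tiles in a 2x2 block, in block units.
# This layout and the encoding below are illustrative assumptions.
TILE_OFFSETS = [(-0.5, 0.5), (0.5, 0.5), (-0.5, -0.5), (0.5, -0.5)]

def encode_offset(dx, dy):
    """Tiny sinusoidal encoding of a relative (dx, dy) offset (4 values)."""
    return [math.sin(math.pi * dx), math.cos(math.pi * dx),
            math.sin(math.pi * dy), math.cos(math.pi * dy)]

def cross_attend(query, tile_feats):
    """Single-head scaled dot-product attention of one query over four tiles.

    `query` and each entry of `tile_feats` must be 4-dimensional so that
    the positional encoding can be added element-wise.
    """
    keys = [[f + p for f, p in zip(feat, encode_offset(*off))]
            for feat, off in zip(tile_feats, TILE_OFFSETS)]
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    weights = [e / z for e in exps]
    # Keys double as values here to keep the sketch short.
    fused = [sum(w * key[j] for w, key in zip(weights, keys))
             for j in range(len(query))]
    return weights, fused

weights, fused = cross_attend([1.0, 0.0, 1.0, 0.0], [[0.0] * 4 for _ in range(4)])
```

In a real network the query, keys, and values would come from learned projections of image features, and the fused vector would feed regression heads for position and heading.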

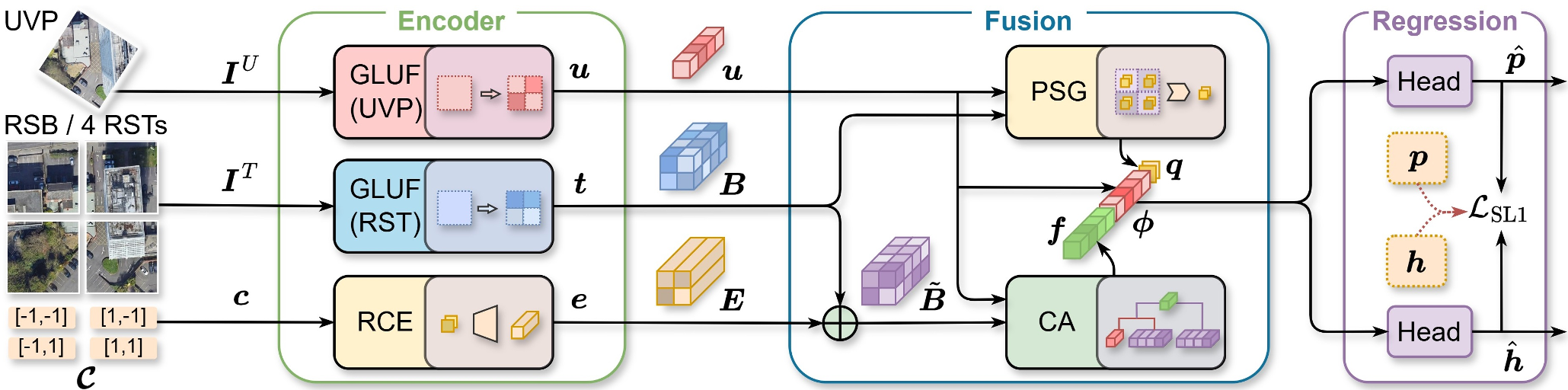

Figure 1: The Bearing-UAV pipeline. A single UAV view is fused with four neighboring satellite tiles to predict absolute coordinates and a heading vector.

Experiments: Real-World Ground Truth

The authors introduced Bearing-UAV-90K, a benchmark featuring 90,000 image pairs across four cities with diverse terrains (mountains, rivers, and high-rise buildings).

SOTA Comparison

In head-to-head tests against standard baselines, such as those from the University-1652 and SUES-200 benchmarks:

- Localization Error: Reduced from roughly 30 m to 8.61 m.

- Heading Error: Achieved a Mean Heading Error (MHE) of 12.9°.

- Navigation Success: While baselines failed early due to "heading drift" or "local loops," Bearing-UAV successfully completed 500m+ winding routes.
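
For readers who want to reproduce metrics of this kind, here is a minimal sketch of how a mean localization error and a mean heading error are commonly computed. The exact definitions used in the paper are not given in this summary, so the wrap-around handling and the function names below are assumptions.

```python
import math

def heading_error_deg(pred_deg, true_deg):
    """Smallest absolute angular difference in degrees, in [0, 180]."""
    diff = (pred_deg - true_deg + 180.0) % 360.0 - 180.0
    return abs(diff)

def mean_heading_error(preds_deg, truths_deg):
    """Average wrapped heading error over paired predictions and truths."""
    errors = [heading_error_deg(p, t) for p, t in zip(preds_deg, truths_deg)]
    return sum(errors) / len(errors)

def mean_localization_error(pred_xy, true_xy):
    """Average Euclidean distance (same units as the inputs, e.g. meters)."""
    errors = [math.dist(p, t) for p, t in zip(pred_xy, true_xy)]
    return sum(errors) / len(errors)
```

The wrap-around matters: a prediction of 350° against a true heading of 10° is 20° off, not 340°, and a naive subtraction would inflate the reported heading error.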

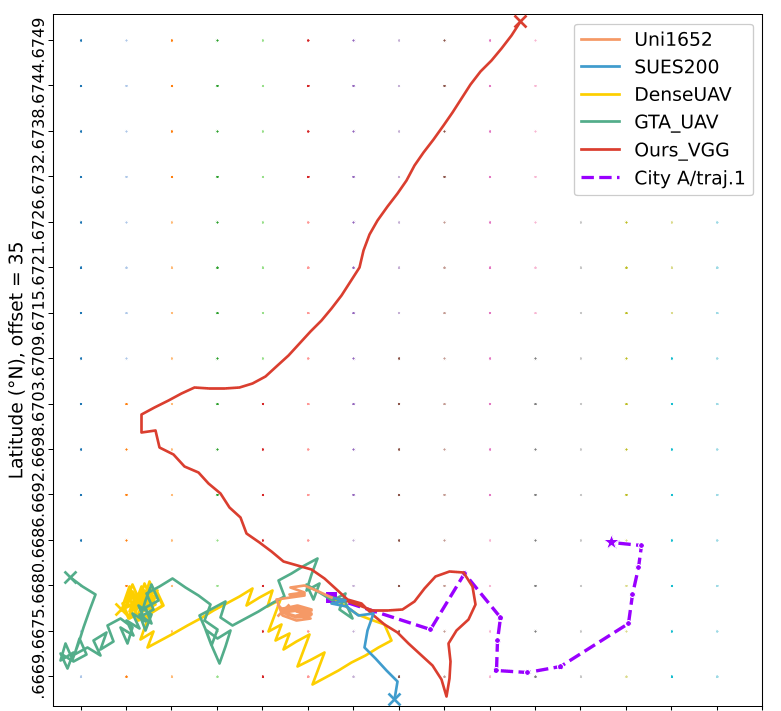

Figure 2: Performance comparison on complex routes. Note how Bearing-UAV (red) remains stable while others (blue, orange) diverge.

Critical Analysis & Takeaways

The key insight here is the use of regression over retrieval. By regressing the position as a continuous value, the model "interpolates" between satellite tiles, so its accuracy is no longer capped by the tile spacing of the onboard map.
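
A small sketch can show why a continuous output is not bound to the tile grid. It assumes the network regresses a normalized offset (u, v) inside the 2x2 block, which is one plausible parameterization and not necessarily the paper's; `block_to_absolute` and `nearest_tile_center` are hypothetical helpers.

```python
def block_to_absolute(u, v, block_origin_xy, tile_size_m):
    """Map a normalized offset (u, v) in [0, 1]^2 inside a 2x2 block to map meters."""
    block_side = 2.0 * tile_size_m
    x0, y0 = block_origin_xy
    return (x0 + u * block_side, y0 + v * block_side)

def nearest_tile_center(u, v, block_origin_xy, tile_size_m):
    """What a retrieval-style method would return: the closest tile's center."""
    x0, y0 = block_origin_xy
    col = 0 if u < 0.5 else 1
    row = 0 if v < 0.5 else 1
    return (x0 + (col + 0.5) * tile_size_m, y0 + (row + 0.5) * tile_size_m)

# Illustrative values only: 100 m tiles, block origin at (1000 m, 2000 m).
regressed = block_to_absolute(0.375, 0.125, (1000.0, 2000.0), 100.0)
retrieved = nearest_tile_center(0.375, 0.125, (1000.0, 2000.0), 100.0)
```

The retrieval answer can only be one of four tile centers, so its error is bounded below by the distance to the nearest center, while the regressed answer can land anywhere inside the block.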

Limitations:

- Zero-Shot Generalization: The model is highly effective on the cities it was trained on, but its "cross-city" transferability (e.g., training in New York and flying in Tokyo) remains a challenge for future work.

- Temporal Stability: The current model processes frames independently; incorporating a temporal filter (like a Kalman Filter or LSTM) could further smooth the navigation.
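
To illustrate the temporal filtering idea from the last bullet, here is a minimal scalar Kalman filter with a random-walk motion model, applied to one coordinate axis. It is a sketch of the suggested extension, not part of Bearing-UAV, and the noise variances are hypothetical placeholders that would need tuning on real flight data.

```python
class ScalarKalman:
    """1-D Kalman filter with a random-walk motion model."""

    def __init__(self, x0, p0=100.0, process_var=1.0, meas_var=75.0):
        self.x = x0           # state estimate (e.g. x-position in meters)
        self.p = p0           # estimate variance
        self.q = process_var  # hypothetical process noise variance
        self.r = meas_var     # hypothetical measurement noise variance

    def update(self, z):
        """Fuse one per-frame measurement z and return the smoothed estimate."""
        self.p += self.q                 # predict: uncertainty grows
        k = self.p / (self.p + self.r)   # Kalman gain
        self.x += k * (z - self.x)       # correct toward the measurement
        self.p *= (1.0 - k)              # uncertainty shrinks after the update
        return self.x

# Noisy per-frame x-estimates (made-up numbers) smoothed frame by frame.
kf = ScalarKalman(x0=0.0)
smoothed = [kf.update(z) for z in (10.0, -10.0, 10.0, -10.0)]
```

Heading would need extra care because angles wrap at 360°; one common option is to filter the sine and cosine components separately and recombine them with `atan2`.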

Conclusion: Bearing-UAV indicates that purely vision-based navigation is viable for long-range UAV missions. By bridging the gap between aerial oblique views and orthorectified satellite imagery, it provides a robust fallback for GNSS-denied environments.