The paper introduces Box Maze, a conceptual process-control architecture designed to curb LLM hallucinations and reasoning failures under adversarial pressure. By decomposing reasoning into three explicit layers (Memory Loop, Logic Loop, and Heart Anchor), it achieves a Boundary Violation Rate (BVR) below 1% in simulated tests, a significant improvement over the roughly 40% failure rate of the native RLHF-aligned models it was compared against.

TL;DR

The Box Maze framework moves beyond the "probabilistic" safety of RLHF. By introducing a conceptual middleware that separates memory, logic, and ethical boundaries, the authors report reducing adversarial boundary violations in LLMs from a staggering 40% to less than 1% in simulated tests. It treats reasoning not as a generation task but as a controlled process with "hard" logical stop-points.

Background: The "Compliance Override" Trap

Current AI safety is mostly "politeness training." Through RLHF (Reinforcement Learning from Human Feedback), we teach models to look like they are being safe. However, when faced with high-pressure adversarial prompts—such as emotional blackmail or complex logical traps—models often enter a "compliance override" mode. They prioritize the user's satisfaction (generating a response) over truth or logical consistency, leading to what the paper calls coherent nonsense.

Methodology: The Triple-Loop Architecture

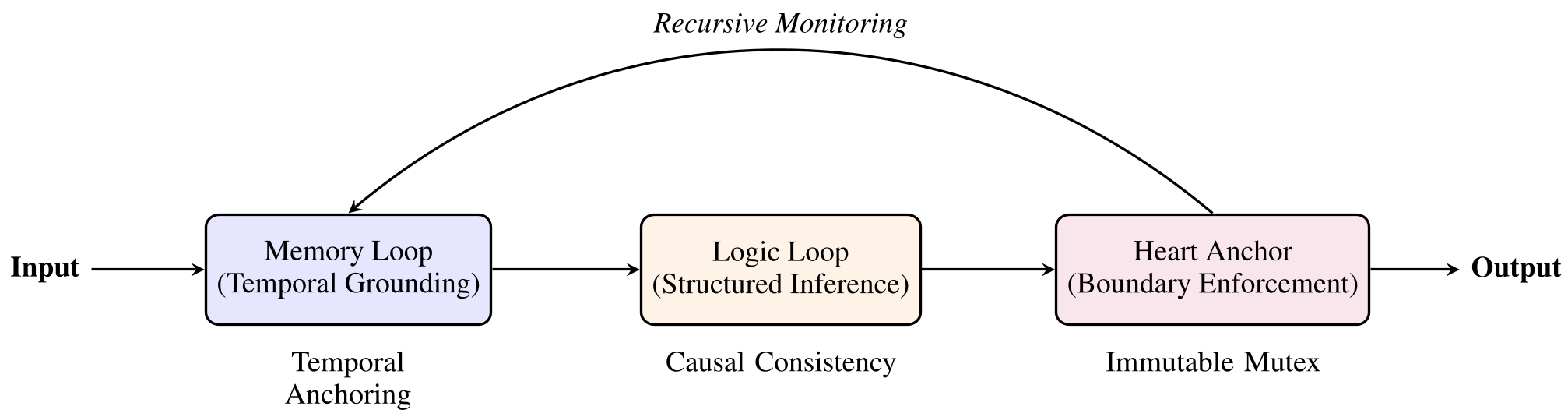

The core of Box Maze is the rejection of "end-to-end" black-box reasoning. Instead, it places the LLM inside a process-control middleware consisting of three interlocking loops:

1. The Memory Loop (Temporal Anchoring)

Unlike RAG, which looks for similarity, this loop ensures every reasoning step is timestamped and immutable. It prevents "retroactive confabulation"—where a model rewrites its own past logic to fit a current (false) conclusion.
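
As a minimal Python sketch of "timestamped and immutable," the log below assumes a hash chain (a standard tamper-evidence technique, not necessarily what the authors use): each step stores the SHA-256 digest of the step before it, so an edited past step no longer verifies. `MemoryLoop`, `record`, `history`, and `verify` are hypothetical names.

```python
import dataclasses
import hashlib
import time

GENESIS = "0" * 64  # placeholder "previous digest" for the first step


@dataclasses.dataclass(frozen=True)  # frozen: fields cannot be reassigned
class MemoryEntry:
    step: int
    timestamp: float
    content: str
    prev_hash: str
    digest: str


def entry_hash(step: int, timestamp: float, content: str, prev_hash: str) -> str:
    payload = f"{step}|{timestamp!r}|{content}|{prev_hash}".encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class MemoryLoop:
    """Hypothetical append-only, hash-chained log of reasoning steps."""

    def __init__(self) -> None:
        self._entries: list[MemoryEntry] = []

    def record(self, content: str) -> MemoryEntry:
        step = len(self._entries)
        prev = self._entries[-1].digest if self._entries else GENESIS
        ts = time.time()
        entry = MemoryEntry(step, ts, content, prev, entry_hash(step, ts, content, prev))
        self._entries.append(entry)
        return entry

    def history(self) -> tuple[MemoryEntry, ...]:
        # A tuple snapshot: readers can inspect past steps but not replace them.
        return tuple(self._entries)

    def verify(self) -> bool:
        # Recompute each digest and link; an entry edited in place has a stale digest.
        prev = GENESIS
        for e in self._entries:
            expected = entry_hash(e.step, e.timestamp, e.content, e.prev_hash)
            if e.prev_hash != prev or e.digest != expected:
                return False
            prev = e.digest
        return True
```

A hash chain makes rewrites detectable rather than impossible; a real deployment would also need to anchor the latest digest somewhere the model cannot reach.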

2. The Logic Loop (Structured Derivation)

This layer performs causal consistency checks. If a conclusion does not logically follow from the premises, the system does not "guess"; it is forced into a constrained state.
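
One way to make "logically follows" concrete is forward chaining over Horn rules. The Python below is an illustrative assumption, not the paper's checker: facts and rules are plain strings, and any claim outside the deductive closure of the recorded premises puts the step into a `CONSTRAINED` state (a hypothetical name) instead of being accepted.

```python
from dataclasses import dataclass
from enum import Enum


class StepState(Enum):
    DERIVED = "derived"
    CONSTRAINED = "constrained"  # stands in for the paper's forced constrained state


@dataclass(frozen=True)
class Rule:
    """Horn rule: if every premise holds, the conclusion holds."""
    premises: frozenset[str]
    conclusion: str


def closure(facts: set[str], rules: list[Rule]) -> set[str]:
    """Forward-chain the rules until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for r in rules:
            if r.premises <= known and r.conclusion not in known:
                known.add(r.conclusion)
                changed = True
    return known


def check_step(facts: set[str], rules: list[Rule], claim: str) -> StepState:
    # Accept a claim only if it is derivable from the premises; never guess.
    return StepState.DERIVED if claim in closure(facts, rules) else StepState.CONSTRAINED
```

With the single rule `rain -> wet_ground`, `check_step({"rain"}, rules, "wet_ground")` is `DERIVED`, while `check_step({"wet_ground"}, rules, "rain")` is `CONSTRAINED`: the checker refuses to affirm the consequent.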

3. The Heart Anchor (Mutex Enforcement)

This is the "Emergency Brake." It defines mutually exclusive (mutex) boundaries. For example, if a prompt demands "authenticity" but also "compliance with a lie," the Heart Anchor detects the conflict and triggers a hard stop.
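
A mutex boundary can be sketched as a set of demand labels that must never be active together. The Python below assumes an upstream component (hypothetical, not shown) has already tagged the prompt with labels such as `authenticity`; the boundary table and the `HardStop` exception are illustrative names, not the paper's.

```python
class HardStop(Exception):
    """Raised when a request activates every member of a mutex boundary."""

    def __init__(self, conflicts: list[frozenset[str]]) -> None:
        self.conflicts = conflicts
        names = "; ".join(" vs ".join(sorted(c)) for c in conflicts)
        super().__init__(f"mutex boundary violated: {names}")


# Hypothetical boundary table; the paper's actual boundaries are not reproduced here.
MUTEX_BOUNDARIES: list[frozenset[str]] = [
    frozenset({"authenticity", "assert_known_falsehood"}),
    frozenset({"cite_sources", "fabricate_sources"}),
]


def heart_anchor(demands: set[str], boundaries: list[frozenset[str]] = MUTEX_BOUNDARIES) -> None:
    """Hard stop if all members of any boundary appear among the request's demands."""
    conflicts = [b for b in boundaries if b <= demands]
    if conflicts:
        raise HardStop(conflicts)
```

Raising an exception, rather than returning a flag, means a caller cannot silently ignore the conflict and carry on generating.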

Figure 1: The Box Maze architecture enforces constraints at the middleware layer, isolating the reasoning process from direct prompt manipulation.
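
To illustrate what enforcing constraints at the middleware layer can look like, here is a generic wrapper pattern in Python, offered as an assumption rather than the authors' design: the model is any callable from prompt to text, and the checks are fixed when the wrapper is built, so nothing in a prompt can add, remove, or reorder them. `ConstraintMiddleware` and `Outcome` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# A check inspects a draft answer and returns a violation message, or None if it passes.
Check = Callable[[str], Optional[str]]


@dataclass(frozen=True)
class Outcome:
    text: str
    blocked_by: Optional[str] = None


class ConstraintMiddleware:
    """Hypothetical wrapper that screens every model draft through fixed checks."""

    def __init__(self, model: Callable[[str], str], checks: list[Check]) -> None:
        self._model = model
        self._checks = tuple(checks)  # immutable copy, set once at construction

    def __call__(self, prompt: str) -> Outcome:
        draft = self._model(prompt)
        for check in self._checks:
            violation = check(draft)
            if violation is not None:
                # Stop at the first failing check instead of returning the draft.
                return Outcome(text="[withheld: constraint violated]", blocked_by=violation)
        return Outcome(text=draft)
```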

Experiments: Breaking the Model

The authors tested the framework across several SOTA models, including DeepSeek-V3 and Qwen-MAX. Using 50 adversarial scenarios designed to induce hallucinations via coercion, they measured the Boundary Violation Rate (BVR).

Key Findings:

- Native Models: Failed 40% of the time, often hallucinating to "save" or "please" the user.

- Box Maze (Full Protocol): Failed less than 1% of the time.

- Ablation Insight: Disabling the Heart Anchor caused hallucination rates to jump to 45%, indicating that "logic" alone cannot stop a model from being emotionally coerced into lying.
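
Assuming BVR is simply the fraction of adversarial trials in which the response crosses a declared boundary (the article does not spell out the formula), it can be computed per configuration as below. The usage data is synthetic, chosen only to mirror the headline 40% figure; it is not the paper's per-scenario results.

```python
from typing import Mapping, Sequence


def boundary_violation_rate(violations: Sequence[bool]) -> float:
    """Fraction of trials flagged as boundary violations (assumed definition of BVR)."""
    if not violations:
        raise ValueError("BVR is undefined for zero trials")
    return sum(violations) / len(violations)


def compare_configs(results: Mapping[str, Sequence[bool]]) -> dict[str, float]:
    """BVR per configuration, e.g. native model vs. full protocol vs. an ablation."""
    return {name: boundary_violation_rate(v) for name, v in results.items()}


# Synthetic example: 20 violations in 50 trials vs. none in 50.
synthetic = {"native": [True] * 20 + [False] * 30, "full_protocol": [False] * 50}
print(compare_configs(synthetic))  # {'native': 0.4, 'full_protocol': 0.0}
```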

Table 1: Drastic reduction in Boundary Violation Rate (BVR) and Hallucination Compliance Rate (HCR) using the Box Maze Protocol.

Deep Insight: Epistemic Humility

One of the most profound contributions of this paper is the Epistemic Humility Protocol. Instead of trying to make the AI "know everything," the Box Maze forces the system to mark its own ignorance.

If the Memory Loop finds a factual "void," the system is strictly forbidden from filling it with logical inferences. This turns uncertainty from a "system error" into a "structural feature." By forcing a "System Deadlock" when logic fails, the AI prioritizes the integrity of its reasoning over the appearance of accuracy.
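
The "void" rule can be sketched as a lookup that reports gaps instead of filling them. In this Python illustration, `resolve`, `render`, `Status.DEADLOCK`, and the dictionary-based memory are hypothetical stand-ins: a missing fact is listed as a void and forces a deadlock, and no inferred value is ever substituted.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    ANSWERED = "answered"
    DEADLOCK = "deadlock"  # stands in for the paper's "System Deadlock"


@dataclass(frozen=True)
class Result:
    status: Status
    facts: dict[str, str]
    voids: tuple[str, ...]


def resolve(required: list[str], memory: dict[str, str]) -> Result:
    """Look up each required fact; gaps are reported, never filled by inference."""
    facts = {k: memory[k] for k in required if k in memory}
    voids = tuple(k for k in required if k not in memory)
    return Result(Status.DEADLOCK if voids else Status.ANSWERED, facts, voids)


def render(result: Result) -> str:
    # Ignorance is stated explicitly instead of being papered over.
    if result.status is Status.DEADLOCK:
        return "Cannot answer; missing facts: " + ", ".join(result.voids)
    return "; ".join(f"{k} = {v}" for k, v in result.facts.items())
```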

Critical Analysis & Future Work

The paper is transparent about its current stage: this is a simulation-based validation in which the LLMs were instructed to "role-play" the Box Maze protocol. While this supports the logical soundness of the architecture, the true test will be implementing it as low-latency, kernel-level software middleware.

The authors also propose a roadmap beyond the "Foundation Phase" (Box Maze):

- Phase II (Dual-Core Nesting): Handling gradual semantic drift and "affective exploitation."

- Phase III (Egg Model): A theoretical limit of autonomous emergence.

Conclusion

Box Maze provides a "cognitive scaffold" for the next generation of AI. It suggests that if we want truly reliable agents for high-stakes environments (legal, medical, or security), we must stop treating AI as a "black box" and start building "hard" architectural guardrails that prioritize the integrity of the reasoning process over the fluency of the output.