The paper introduces a confidence-based framework for high-fidelity mesh extraction from 3D Gaussian Splatting (3DGS). By incorporating self-supervised learnable confidence values and variance-reducing losses, the method achieves state-of-the-art results for unbounded mesh reconstruction (e.g., F1-score of 0.521 on Tanks & Temples) within a competitive 20-minute optimization window.

TL;DR

3D Gaussian Splatting (3DGS) is fast, but extracting a clean mesh from it is notoriously difficult because of "view-dependent geometry": the model invents fake shapes to represent shiny reflections. This paper introduces the CoMe framework, which uses self-supervised confidence values and variance-reduction losses to force Gaussians to represent actual surfaces rather than optical illusions. The result is a SOTA mesh extraction pipeline that works on unbounded scenes in under 20 minutes.

Background: The Price of Speed

While 3DGS revolutionized Novel View Synthesis (NVS), its explicit nature is a double-edged sword for geometry. Because Gaussians are optimized primarily to minimize photometric error ($L_1 + \text{D-SSIM}$), the system often cheats: it creates a "cloud" of semi-transparent Gaussians to mimic complex lighting. When you try to extract a mesh, you get a "hairy" or "noisy" surface.

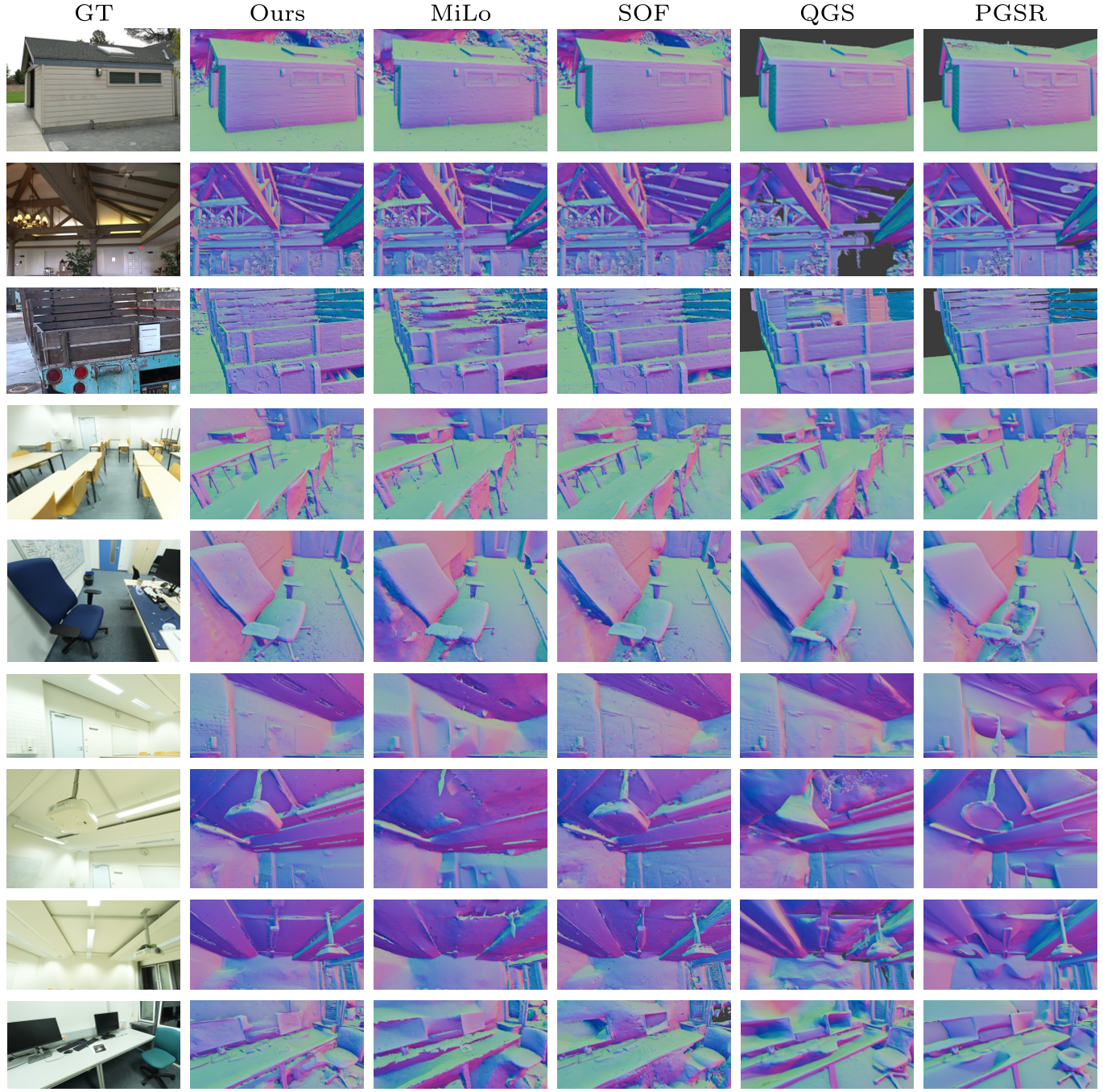

Current solutions like MiLo or PGSR try to fix this with heavy multi-view constraints or external depth priors (like Omnidata), but these add massive computational overhead and often over-smooth fine details.

The Logic of Confidence: Why it Works

The core insight of this paper is that not all pixels are equally "trustworthy" for geometry.

1. Self-Supervised Confidence

The authors endow each Gaussian with a learnable confidence value $\gamma_i$. During optimization, the photometric loss is weighted:

$$\mathcal{L}_{conf} = \mathcal{L}_{rgb} \cdot \hat{C} - \beta \cdot \log \hat{C}$$

If a region is geometrically ambiguous (like a mirror or a blurry edge), the model can "admit" uncertainty by lowering $\hat{C}$, thus preventing these regions from driving wild geometric updates or over-densifying (cloning too many Gaussians).
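To see why the $-\beta \log \hat{C}$ term matters, treat $\hat{C}$ as a free per-pixel value: setting the derivative of the loss with respect to $\hat{C}$ to zero gives $\hat{C}^* = \beta / \mathcal{L}_{rgb}$. Confidence therefore falls as photometric error rises, while the log term rules out the trivial solution $\hat{C} = 0$. The NumPy sketch below checks this numerically. It is an illustration, not the authors' code: the function names, the value of `beta`, and the error values are hypothetical, and it assumes $\hat{C}$ is an unconstrained per-pixel quantity.

```python
import numpy as np

def confidence_weighted_loss(rgb_err, conf, beta=0.1, eps=1e-6):
    """Mean over pixels of L_rgb * C - beta * log(C)."""
    conf = np.clip(conf, eps, None)  # keep log() finite
    return float(np.mean(rgb_err * conf - beta * np.log(conf)))

def optimal_confidence(rgb_err, beta=0.1, eps=1e-6):
    """Per-pixel minimizer of the loss above: C* = beta / L_rgb."""
    return beta / np.maximum(rgb_err, eps)

# Hypothetical per-pixel photometric errors: low, medium, high.
rgb_err = np.array([0.02, 0.10, 0.50])
conf_star = optimal_confidence(rgb_err, beta=0.1)

# Any perturbation away from C* should increase the loss.
loss_at_optimum = confidence_weighted_loss(rgb_err, conf_star)
loss_higher_conf = confidence_weighted_loss(rgb_err, conf_star * 1.1)
loss_lower_conf = confidence_weighted_loss(rgb_err, conf_star * 0.9)
```

In this unconstrained form the optimum can exceed 1 for very small errors; whether the paper bounds $\hat{C}$ is not stated here, so a real implementation may squash or clamp it.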

2. Variance Reduction: Forcing Unity

To stop Gaussians from having wildly different colors/normals along a single ray, the authors introduce Color and Normal Variance Losses.

- Color Variance: Minimizes the difference between an individual Gaussian’s color and the blended pixel color.

- Normal Variance: Forces the normals of individual Gaussians to align with the blended surface normal.

This effectively turns a "cloud" of Gaussians into a singular, well-defined surface layer.
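The two variance terms can be sketched for a single ray as follows. This is a rough reading of the description above, not the paper's exact formulation: it assumes standard front-to-back alpha-compositing weights, normalizes the blended color by the total weight, and measures normal disagreement as one minus cosine similarity. All function names are hypothetical.

```python
import numpy as np

def blend_weights(alphas):
    """Front-to-back compositing weights: w_i = alpha_i * prod_{j<i} (1 - alpha_j)."""
    transmittance = np.concatenate(([1.0], np.cumprod(1.0 - alphas[:-1])))
    return alphas * transmittance

def color_variance(alphas, colors):
    """Weighted squared distance of each Gaussian's color to the blended color."""
    w = blend_weights(alphas)
    pixel = (w[:, None] * colors).sum(axis=0) / w.sum()  # assumption: normalized blend
    return float((w * ((colors - pixel) ** 2).sum(axis=1)).sum())

def normal_variance(alphas, normals):
    """Weighted (1 - cosine) between each Gaussian's normal and the blended normal."""
    w = blend_weights(alphas)
    blended = (w[:, None] * normals).sum(axis=0)
    blended = blended / (np.linalg.norm(blended) + 1e-8)
    return float((w * (1.0 - normals @ blended)).sum())

alphas = np.array([0.5, 0.5, 0.5])

# A ray whose Gaussians agree: both variances are (numerically) zero.
same_colors = np.tile([0.8, 0.2, 0.1], (3, 1))
same_normals = np.tile([0.0, 0.0, 1.0], (3, 1))

# A "cloud" ray whose Gaussians disagree: both variances are positive.
mixed_colors = np.array([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]])
mixed_normals = np.array([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]])
```

Minimizing either term pushes the Gaussians along a ray toward agreeing with the blended result, which is the "single surface layer" behavior described above.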

Methodology: Decoupled Appearance

Real-world captures have varying exposures and lighting. Previous methods used "Appearance Embeddings," but these often corrupted the structural gradient. The authors propose Decoupled D-SSIM:

- They apply the appearance compensation only to the luminance term of the SSIM loss.

- The contrast and structure terms remain tied to the original rendering.

This ensures the appearance model fixes lighting without "fixing" (masking) bad geometry.
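A simplified sketch of the decoupling idea, under stated assumptions: SSIM factors into a luminance term and a combined contrast-structure term, so the appearance-compensated image can feed only the former. For brevity the statistics here are computed over the whole image rather than over local Gaussian windows, and the loss is taken as $1 - \text{SSIM}$. The function names and test images are hypothetical and are not taken from the paper.

```python
import numpy as np

C1, C2 = 0.01 ** 2, 0.03 ** 2  # standard SSIM constants for images in [0, 1]

def luminance_term(x, y):
    """SSIM luminance comparison, based on the means only."""
    mx, my = x.mean(), y.mean()
    return (2 * mx * my + C1) / (mx ** 2 + my ** 2 + C1)

def contrast_structure_term(x, y):
    """SSIM contrast-structure comparison, based on variances and covariance."""
    cov = ((x - x.mean()) * (y - y.mean())).mean()
    return (2 * cov + C2) / (x.var() + y.var() + C2)

def decoupled_dssim(raw, compensated, target):
    """Luminance from the compensated render, contrast/structure from the raw render."""
    ssim = luminance_term(compensated, target) * contrast_structure_term(raw, target)
    return 1.0 - ssim

rng = np.random.default_rng(0)
target = 0.3 + 0.6 * rng.random((16, 16))

# Case 1: the raw render is only darker; compensation restores the brightness.
raw_dark = target - 0.2
loss_exposure_only = decoupled_dssim(raw_dark, raw_dark + 0.2, target)

# Case 2: the raw render has wrong structure; even a "perfect" compensated
# image cannot hide it, because the structure term still sees the raw render.
raw_wrong = target[::-1]
loss_bad_geometry = decoupled_dssim(raw_wrong, target, target)
```

In the first case the loss is essentially zero, while in the second it stays high: the appearance model can explain away an exposure shift but not a structural error.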

Experimental Results

The method was tested on Tanks & Temples and ScanNet++.

- Tanks & Temples: Reached an F1-score of 0.521, which is 0.036 higher than the previous SOTA (MiLo at 0.485).

- Efficiency: Optimization and extraction take ~18 minutes, whereas comparable high-quality methods take 40-60 minutes.

As seen in the figure above, the proposed method (last column) captures the intricate details of foliage and machinery that other methods either over-smooth or fail to reconstruct.

Critical Analysis & Conclusion

The Takeaway: This paper shows that we don't necessarily need large external priors (like depth networks) to get good meshes. By better managing the intrinsic uncertainty of the splatting process, we can get cleaner surfaces faster.

Limitations: Like all 3DGS methods, it still requires a moderately dense set of input views. In very sparsely captured scenes or heavily occluded regions, it may still produce holes. Furthermore, the $\beta$ parameter (confidence penalty) requires some tuning, though the authors provide a robust default.

Future Outlook: The confidence-steered densification mechanism could become a standard part of the 3DGS optimization pipeline, potentially replacing the current heuristic densification (splitting and cloning based solely on gradient magnitude).