The paper introduces CounterScene, a framework for safety-critical closed-loop scenario generation in autonomous driving using generative BEV world models. It leverages structured counterfactual reasoning to identify and intervene on causally critical agents, achieving state-of-the-art results in both trajectory realism and adversarial effectiveness.

TL;DR

CounterScene is a novel framework that endows generative BEV world models with the ability to reason about "what-if" scenarios. By identifying the specific agent whose cautious behavior maintains a scene's safety (the causal variable) and surgically removing its spatiotemporal safety margins during a diffusion-based generation process, it creates highly realistic yet dangerous driving scenarios for testing autonomous vehicles.

Field Positioning: This work moves beyond simple adversarial perturbations (SOTA "falsification") towards a "Causal Generative Evaluation" paradigm.

The "Why": Beyond Brutal Collisions

In the world of autonomous driving (AD) evaluation, we face a paradox: natural driving data is too safe to be useful for stress-testing, but synthetically "forced" collisions often look like "teleporting" cars or physics-defying maneuvers.

Existing methods like STRIVE or CTG++ often fail because they lack Causal Logic: they don't model why a scene is safe. Is it because the ego vehicle is yielding? Or is it because a specific pedestrian decided to wait? If you perturb the wrong agent, the world model struggles to maintain social coherence, leading to the dreaded "Realism-Adversarial Trade-off."

Methodology: The Who, How, and What-if

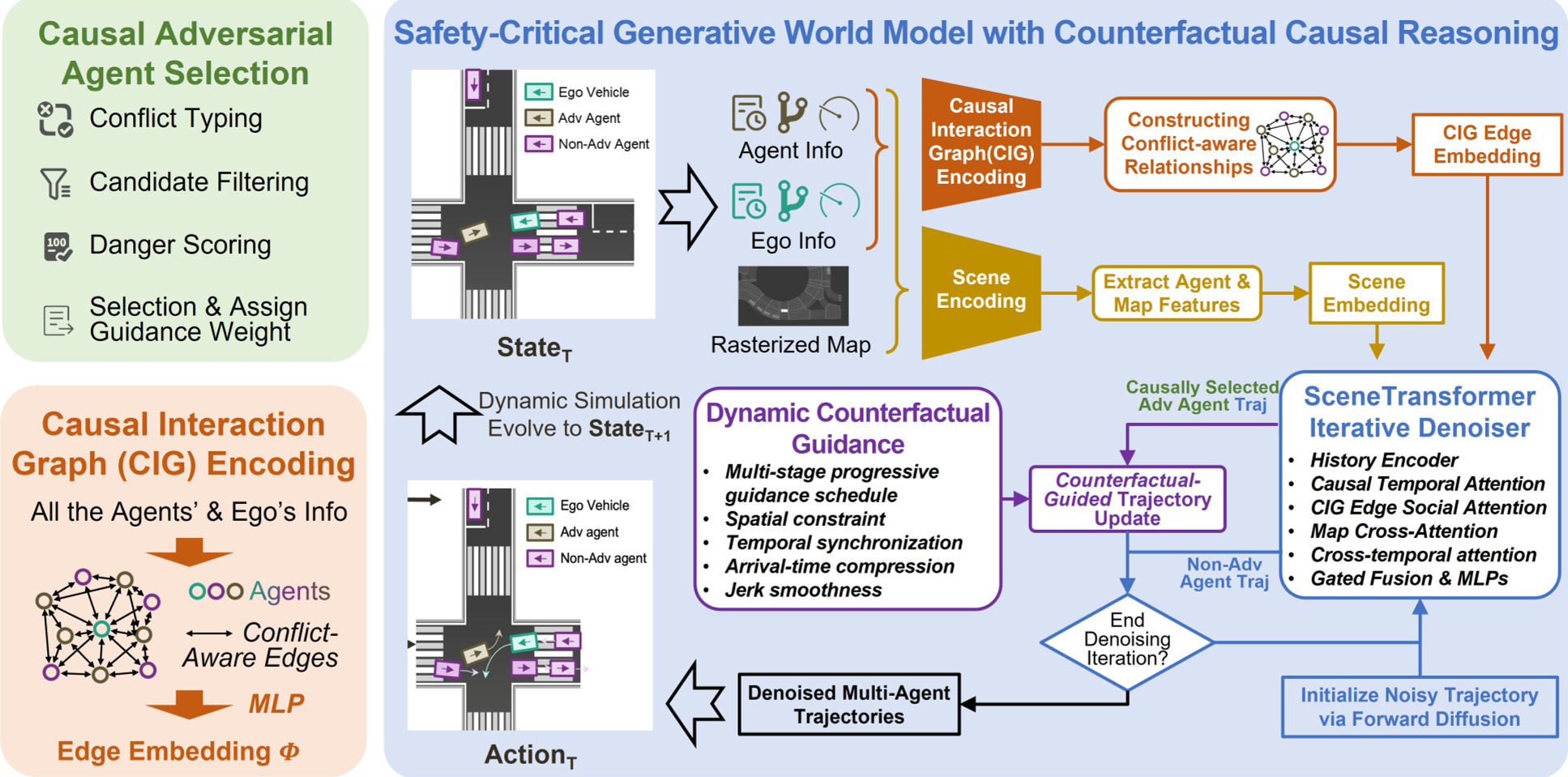

CounterScene transitions from correlation to causation through a structured pipeline:

1. Identifying "The Who" (Causal Agent Selection)

Instead of picking the closest car, CounterScene uses Conflict Analysis. It classifies each interaction as an "Intersection" or "Following" type and calculates a Danger Score. The agent with the highest score is the one whose behavior is actively suppressing the most latent risk.
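To make the selection step concrete, here is a minimal sketch of conflict-based scoring. It is not the paper's formula: the `Agent` class, the 30-degree heading tolerance, the constant-speed straight-line motion model, and the `1 / (1 + gap)` score are all assumptions chosen for illustration. The idea it shows is that a small time gap at the conflict point means the agent is holding back a lot of latent risk, regardless of how far away it currently is.

```python
import math
from dataclasses import dataclass

@dataclass
class Agent:
    name: str
    x: float
    y: float
    heading: float  # radians, 0 = +x axis
    speed: float    # m/s, assumed constant (illustrative simplification)

def classify_interaction(ego, other, parallel_tol=math.radians(30)):
    """'following' if headings are nearly parallel, otherwise 'intersection'."""
    diff = abs((ego.heading - other.heading + math.pi) % (2 * math.pi) - math.pi)
    return "following" if diff < parallel_tol else "intersection"

def time_gap(ego, other):
    """Seconds separating the two agents at their conflict point, or None if no conflict."""
    rx, ry = other.x - ego.x, other.y - ego.y
    ex, ey = math.cos(ego.heading), math.sin(ego.heading)
    ox, oy = math.cos(other.heading), math.sin(other.heading)
    if classify_interaction(ego, other) == "following":
        along = rx * ex + ry * ey            # signed distance of `other` ahead of ego
        follower = ego if along > 0 else other
        return abs(along) / follower.speed if follower.speed > 0 else None
    cross = ex * oy - ey * ox
    if abs(cross) < 1e-9 or ego.speed <= 0 or other.speed <= 0:
        return None                          # head-on geometry or a stopped agent
    s = (rx * oy - ry * ox) / cross          # ego's distance to the crossing point
    u = (rx * ey - ry * ex) / cross          # other's distance to the crossing point
    if s < 0 or u < 0:
        return None                          # paths cross behind one of the agents
    return abs(s / ego.speed - u / other.speed)

def danger_score(ego, other):
    """Hypothetical score: higher when the time gap at the conflict point is small."""
    gap = time_gap(ego, other)
    return 0.0 if gap is None else 1.0 / (1.0 + gap)

def most_critical(ego, others):
    return max(others, key=lambda a: danger_score(ego, a))

def closest(ego, others):
    return min(others, key=lambda a: math.hypot(a.x - ego.x, a.y - ego.y))

ego = Agent("ego", 0.0, 0.0, 0.0, 10.0)
waiting_car = Agent("waiting_car", 50.0, -30.0, math.pi / 2, 5.0)  # reaches crossing 1 s after ego
nearby_car = Agent("nearby_car", 10.0, -40.0, math.pi / 2, 2.0)    # closer, but 19 s after ego
others = [waiting_car, nearby_car]
```

In this toy scene, `closest(ego, others)` returns the nearby car, while `most_critical(ego, others)` returns the car that is farther away but only one second from a conflict, which is the distinction the proximity-versus-causal comparison rests on.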

2. Modeling "The How" (Causal Interaction Graph)

The backbone is a SceneTransformer augmented with a Causal Interaction Graph (CIG). This directed graph ensures that if we intervene on the critical agent, the behavioral changes propagate realistically to others.
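As a rough illustration of why the graph is directed, the sketch below stores influence edges as "influencer to influenced" and walks them breadth-first from the intervened agent. The edge list and agent names are hypothetical, and this is only a reachability check: in the actual model the propagation is learned inside the transformer rather than computed by graph search.

```python
from collections import deque

def affected_agents(edges, source):
    """Agents reachable from `source` along directed influence edges, in BFS order."""
    order, seen, queue = [], {source}, deque([source])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order

# Hypothetical scene: each key influences the agents in its list.
edges = {
    "critical_agent": ["ego", "follower"],
    "ego": ["rear_car"],
    "bystander": ["critical_agent"],
}

downstream = affected_agents(edges, "critical_agent")
```

Here `downstream` contains the ego, the follower, and the car behind the ego, but not the bystander: the bystander influences the critical agent, not the other way around, so an intervention on the critical agent should leave it unchanged.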

Figure: The CounterScene framework and its Causal Interaction Graph.


3. Executing "The What-if" (Counterfactual Guidance)

The core intervention happens during the Diffusion Reverse Process. The model applies two types of guidance:

- Spatial Guidance: Drawing the agent toward the conflict point.

- Temporal Guidance: Deleting the "time-gap" (safety margin). By ensuring both agents arrive at the same place at the same time, a collision emerges naturally from the dynamics.
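The two guidance terms can be sketched as a cost on the critical agent's waypoints, with one gradient step standing in for the nudge a guided sampler applies at each reverse step. This is a simplified stand-in, not the paper's objective: the quadratic costs, the weights, and the names `guidance_gradient` and `guided_step` are assumptions. The spatial term pulls the agent's nearest waypoint toward the conflict point; the temporal term pulls the waypoint at the ego's arrival step `k_ego` toward it, which shrinks the time gap.

```python
def guidance_gradient(traj, conflict, k_ego, w_space=1.0, w_time=1.0):
    """Gradient of a hypothetical guidance cost with respect to each (x, y) waypoint.

    Spatial term:  squared distance from the nearest waypoint to the conflict point.
    Temporal term: squared distance from the waypoint at step k_ego (when the ego
                   arrives) to the conflict point.
    """
    cx, cy = conflict
    grad = [[0.0, 0.0] for _ in traj]
    k_near = min(range(len(traj)),
                 key=lambda k: (traj[k][0] - cx) ** 2 + (traj[k][1] - cy) ** 2)
    for k, w in ((k_near, w_space), (k_ego, w_time)):
        grad[k][0] += 2.0 * w * (traj[k][0] - cx)
        grad[k][1] += 2.0 * w * (traj[k][1] - cy)
    return grad

def guided_step(traj, conflict, k_ego, step=0.1, **weights):
    """One gradient-descent nudge on the trajectory, as applied inside a reverse step."""
    grad = guidance_gradient(traj, conflict, k_ego, **weights)
    return [(x - step * gx, y - step * gy) for (x, y), (gx, gy) in zip(traj, grad)]

# Agent approaching the conflict point (0, 0) from the south, one waypoint per step.
traj = [(0.0, -10.0), (0.0, -7.0), (0.0, -4.0), (0.0, -1.0)]
nudged = guided_step(traj, conflict=(0.0, 0.0), k_ego=2)
```

After one step, the waypoint at `k_ego` moves from 4 m to 3.2 m short of the conflict point and the nearest waypoint moves from 1 m to 0.8 m, while untouched waypoints stay put. In a real sampler the denoiser would smooth the trajectory between nudges, so the agent speeds up coherently instead of having isolated waypoints displaced.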

Experimental Mastery

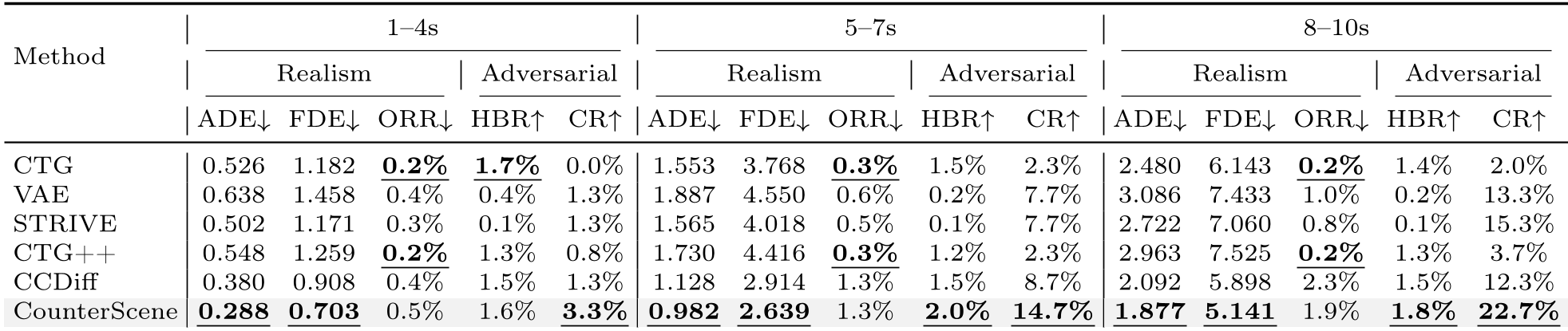

The results on nuScenes are striking because the advantage actually widens over time. In long-horizon simulations (8-10s), CounterScene doesn't just crash more; it crashes more realistically.

Table: Comparison across different horizons. Note the significant jump in CR (Collision Rate) for CounterScene at 8-10s.


Zero-Shot Generalization: From nuScenes to nuPlan

Remarkably, a model trained only on nuScenes (Boston/Singapore) transferred to nuPlan (Las Vegas/Pittsburgh) without any retraining. Why? Because Causal Physics is Invariant. While driving styles change, the physical requirement for a collision, occupying the same space at the same time, is universal.
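The "same space at the same time" condition is easy to state in code. The sketch below is an illustrative check, not part of CounterScene: it assumes both trajectories are sampled at the same timesteps and approximates each vehicle by a disc, with `radius` as a made-up contact threshold.

```python
import math

def first_collision_step(traj_a, traj_b, radius=2.0):
    """Index of the first shared timestep where the agents are within `radius` metres."""
    for k, ((ax, ay), (bx, by)) in enumerate(zip(traj_a, traj_b)):
        if math.hypot(ax - bx, ay - by) < radius:
            return k
    return None

ego_path = [(x, 0.0) for x in (0.0, 5.0, 10.0, 15.0, 20.0)]

# Both cross the point (10, 0), but only the first does so when the ego is there.
on_time = [(10.0, y) for y in (-10.0, -5.0, 0.0, 5.0, 10.0)]
late = [(10.0, y) for y in (-20.0, -15.0, -10.0, -5.0, 0.0)]

hit = first_collision_step(ego_path, on_time)
miss = first_collision_step(ego_path, late)
```

Both crossing paths pass through the same point on the ego's route, yet only the synchronized one collides. Nothing in the check depends on the city or the local driving style, which is the invariance the zero-shot result relies on.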

Critical Insight: Who to Perturb Matters

A key ablation study showed that choosing the "Wrong Agent" (proximity-based selection) degrades realism 44% more than picking the causal agent. If you try to force a car 50 meters away to cause a crash, the diffusion model has to "warp" the trajectory to make it happen, destroying the scene's plausibility.

Conclusion

CounterScene proves that we don't need "weird" data to find "weak" spots in AD planners. By understanding the causal interaction structure of traffic, we can generate a "Counterfactual World" where one small, realistic change in a neighbor's behavior leads to a critical safety test. This points toward a future where generative world models serve as the ultimate, interpretable "digital twins" for safety validation.

Limitations: Currently, the framework focuses on single-variable interventions. Real-world edge cases often involve multi-agent causal chains, which remain a frontier for future work.