The paper presents a near-linear time $O(n \log n)$ algorithm for 4-coloring planar graphs, significantly improving upon the $O(n^2)$ complexity established in 1996. The core methodology, termed "Linear Reducibility," identifies a linear number of non-touching reducible configurations or non-crossing obstructing cycles concurrently.

TL;DR

For nearly 30 years, the best algorithm to 4-color a planar graph took quadratic time ($O(n^2)$). A team of researchers has finally broken this barrier, proposing an $O(n \log n)$ algorithm. Their secret? Finding a way to perform linearly many reductions simultaneously by discovering reducible configurations even in the "flat" (zero-curvature) parts of the graph.

The Curvature Bottleneck

The Four Color Theorem (4CT) is famously a "computer-aided" proof. The classic approach uses discharging rules—a way of moving "combinatorial curvature" around a graph until a point of positive curvature is found. This point reveals a reducible configuration (a small subgraph that can be removed, colored, and re-inserted).

However, traditional proofs only guaranteed the existence of one such point. In computational terms:

- Remove 1 configuration (constant size).

- Recurse on the remaining $n-1$ vertices.

- Cost: $O(n)$ per step $ imes$ $n$ steps = $O(n^2)$.

Current SOTA methods were stuck here because they couldn't see reductions in "flat" parts of the graph where the curvature didn't cluster.

The "Flat" Insight: Finding Reductions Everywhere

The paper’s major technical leap is proving that planar triangulations contain linearly many pairwise non-touching reducible configurations.

The authors moved beyond the "local neighborhood" view. They demonstrated that even in large, flat triangulations (like the hexagonal tiling of a plane), reducible configurations are unavoidable if you look at a large enough radius (up to distance 12-14 from a vertex).

Methodology: The D-Reducibility Scale

To handle this at scale, the authors used:

- A Massive Library: 8,202 D-reducible configurations (compared to ~600 in the 1990s).

- Pseudo-Configurations: A category-theoretic approach to modeling graph embeddings that allows a computer to "batch-check" whether a neighborhood is blocked by a known reducible pattern.

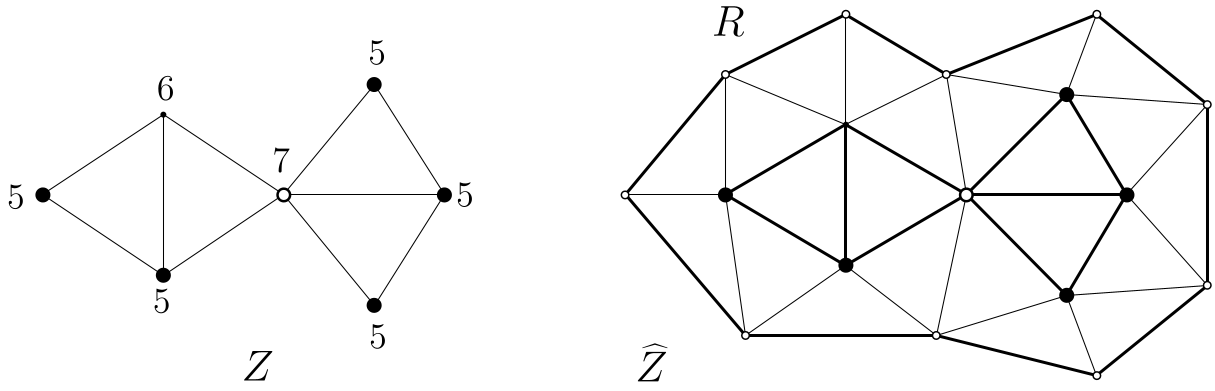

Figure 1: Illustration of a D-reducible configuration used for the induction step.

Figure 1: Illustration of a D-reducible configuration used for the induction step.

From Randomness to Determinism

The algorithm initially uses a randomized approach to apply Kempe Changes (swapping color pairs in a connected component). They prove that a random Kempe change has a constant probability of improving the "reducibility level" of a ring.

By applying the method of conditional expectations, they derandomize this process. This allows them to:

- Remove $\Omega(n)$ vertices.

- Find a valid coloring for the remainder.

- Re-insert the vertices and fix the colors using $O(\log n)$ global Kempe change passes.

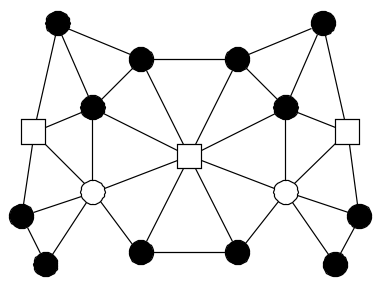

Figure 2: The exceptional 'X' configuration discovered during 'flat' case analysis.

Figure 2: The exceptional 'X' configuration discovered during 'flat' case analysis.

Experimental Validation: 256 Cores and GitHub

The proof is "pragmatic." Because the human-readable part of the proof must account for thousands of neighborhoods, the authors provided C++ code to verify the structure. On a 256-core machine, the verification of all 2,100+ "flat" neighbor cases took only a few hours.

| Metric | Robertson et al. (1996) | This Work (2026) | | :--- | :--- | :--- | | Time Complexity | $O(n^2)$ | $O(n \log n)$ | | Configurations | ~633 | 8,202 | | Reduction Type | Single (Additive) | Batch (Constant Factor) |

Critical Insight & Future Outlook

The move from $O(n^2)$ to $O(n \log n)$ is a watershed moment for topological algorithms. The authors suggest that the final frontier—$O(n)$ Linear Time—is possible. The current bottleneck is the "simulation" of Kempe changes across the whole graph.

If a data structure can be designed to simulate these changes in $O(n / \log n)$ time, the 4-coloring problem will officially enter the realm of perfectly efficient algorithms.

Note: This blog post is a technical summary of the arXiv paper "The Four Color Theorem with Linearly Many Reducible Configurations and Near-Linear Time Coloring."