Hitem3D 2.0 is a novel framework for high-fidelity 3D texture generation that integrates 2D multi-view generative priors with native 3D representations. It overcomes consistency and alignment issues by combining a 3D position-aware multi-view synthesis pipeline with a sparse voxel-based native 3D texture diffusion model.

TL;DR

Generating high-quality, consistent textures for 3D meshes has long been a tug-of-war between Visual Detail (2D methods) and Spatial Coherence (3D methods). Hitem3D 2.0 ends this conflict by proposing a multi-view guided native 3D texture generation framework. It uses 2D diffusion models to "hallucinate" consistent views and then projects those views into a native 3D sparse voxel space for a final, seamless synthesis.

The Core Conflict: 2D Projection vs. 3D Native Generation

Why is 3D texturing so hard?

- Multi-view Reprojection (The 2D Way): Methods like TEXTure or Text2Tex paint a 3D object view-by-view. However, since the AI doesn't "see" in 3D, the front and back of a model rarely match perfectly, leading to visible seams and "ghosting" artifacts.

- Native 3D Generation (The 3D Way): Methods like Trellis or NaTex generate textures directly on voxels or point clouds. While spatially perfect, they are limited by the scarcity of high-quality 3D datasets, often leading to blurry or generic results.

Hitem3D 2.0 acts as a bridge, leveraging the massive prior knowledge of 2D image models while maintaining the structural integrity of 3D representations.

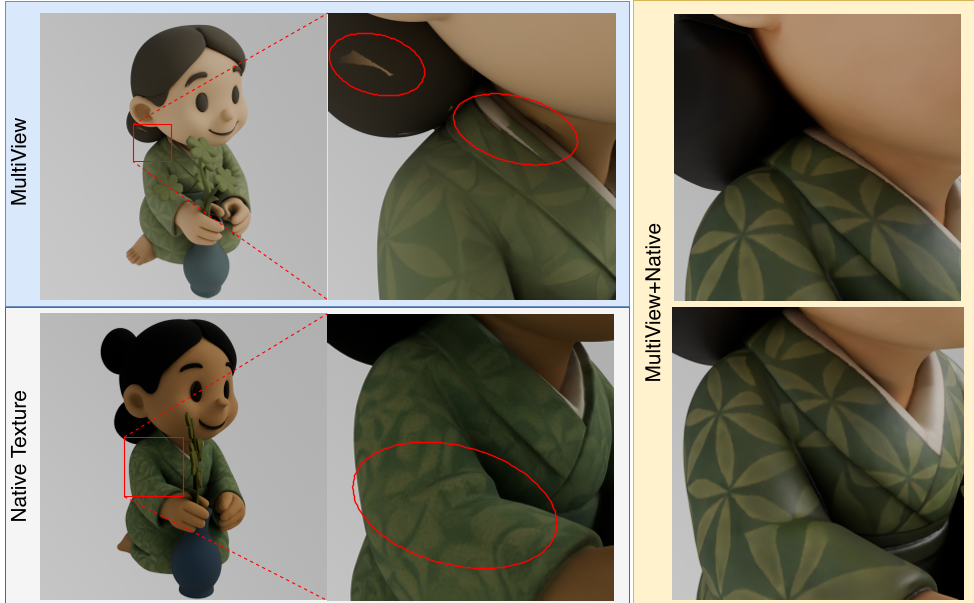

Figure 1: Comparison between reprojection-based, native, and the Hitem3D 2.0 hybrid approach.

Figure 1: Comparison between reprojection-based, native, and the Hitem3D 2.0 hybrid approach.

Methodology: A Two-Stage Masterpiece

1. 3D Position-Aware Multi-View Synthesis

The first goal is to generate 2D reference views that actually align. The authors implemented a 4-stage pipeline:

- Domain Adapter: Syncs the model to the "rendered" look of 3D data.

- Geometry ControlNet: Uses normal maps to ensure the texture fits the shape.

- 3D RoPE (Rotary Positional Encoding): This is the secret sauce. By injecting 3D coordinates into the 2D attention layers, the model learns that a pixel in the "front view" corresponds directly to a pixel in the "side view."

- Delight LoRA: Removes baked-in shadows, essential for professional-grade 3D assets.

2. Native 3D Texture DiT

Once the consistent views are generated, they are fed into a Diffusion Transformer (DiT) that operates on Sparse Voxels.

- The framework uses a Dual-Branch VAE to decouple geometry and texture, ensuring that "painting" the object doesn't accidentally deform the mesh.

- Cross-attention layers align the 2D multi-view features with the 3D voxel space, filling in hidden/occluded regions with plausible details.

Figure 2: The Hitem3D 2.0 architecture showing the flow from geometry-aligned multiviews to the native 3D representation.

Figure 2: The Hitem3D 2.0 architecture showing the flow from geometry-aligned multiviews to the native 3D representation.

Experimental Excellence

Testing Hitem3D 2.0 against commercial engines reveals a massive leap in Texture Fidelity. The Ablation Study (Fig 7) shows that without the MultiView Module or Delight LoRA, the models suffer from inconsistent colors and muddy artifacts.

Figure 3: Ablation results proving the necessity of each component—Geometry ControlNet for alignment and Delight LoRA for lighting uniformity.

Figure 3: Ablation results proving the necessity of each component—Geometry ControlNet for alignment and Delight LoRA for lighting uniformity.

Critical Insight: Why This Matters

The most impressive part of Hitem3D 2.0 isn't just the pixels—it's the spatial alignment. By using 3D RoPE (3D Rotary Positional Encoding) within a 2D diffusion backbone, the authors have effectively taught a 2D model how to "think" in 3D.

Limitations: The dependence on a pre-trained image editing model means the quality is capped by the 2D teacher. Extremely complex topologies (like intricate jewelry or hyper-porous structures) might still challenge the sparse voxel resolution.

Conclusion

Hitem3D 2.0 sets a new SOTA by proving that 3D texturing shouldn't choose between 2D and 3D. By centering the generation in a Native 3D Voxel Space while being guided by Geometry-Aware 2D Priors, it achieves production-ready results that are visually stunning and topologically sound.