The paper introduces a "Local Bernstein Theory" to extend classical "global" results on exponential-type functions to holomorphic functions within rectangular regions. This theoretical framework is applied to solve longstanding conjectures by Erdős and Turán, establishing sharp lower bounds for Lebesgue constants of Lagrange interpolation on subintervals.

TL;DR

Terence Tao introduces Local Bernstein Theory, a framework that brings the sharp precision of classical entire function theory to local rectangular domains in the complex plane. This mathematical "microscope" is used to prove sharp lower bounds for the Lebesgue constant in Lagrange interpolation, finally answering questions posed by Erdős and Turán over 60 years ago.

Context: The Global vs. Local Gap

Classical Bernstein theory provides powerful inequalities (like the Bernstein and Boas inequalities) for entire functions of exponential type. These functions are "ideal" mathematical objects—smooth, predictable, and globally defined.
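
For reference, the classical Bernstein inequality can be stated as follows. This is the standard textbook formulation, with $\sigma$ denoting the exponential type; the notation is not taken from the paper. If $f$ is an entire function of exponential type $\sigma$ that is bounded on the real line, then $$\sup_{x \in \mathbb{R}} |f'(x)| \leq \sigma \sup_{x \in \mathbb{R}} |f(x)|.$$ The sinusoid $f(x) = \sin(\sigma x)$ attains equality, which is why sinusoids serve as the extremal model.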

However, practical approximation theory deals with high-degree polynomials on finite intervals (like $[-1, 1]$). While these polynomials should behave like sinusoids locally, they grow much more slowly than exponential functions at infinity. This discrepancy created a technical barrier: how do you apply global Bernstein tools to a localized problem?
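
The Chebyshev polynomial is a standard illustration of this tension (offered here as an example, not as a construction from the paper). On the interval it is exactly a sinusoid in the angular variable, $$T_n(\cos\theta) = \cos(n\theta),$$ yet away from the interval it grows only polynomially, $T_n(z) \sim 2^{n-1} z^n$ as $|z| \to \infty$, whereas $\cos(\sigma z)$ grows like $\tfrac{1}{2} e^{\sigma |\operatorname{Im} z|}$ off the real axis.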

The "Local" Insight

The core contribution of this paper is Theorem 1.2, which provides a bridge. By constraining a holomorphic function within a thin rectangle $R^+(I, y_0)$, Tao proves that if the function is real-valued on the bottom edge and bounded exponentially on the top, it must obey Bernstein-type derivative bounds within the interior.

Key Methodology: The Blended Function

To handle the "microscopic" scale (where roots are extremely close together), Tao utilizes a blended function $G(z)$.

- It shares the same roots as the polynomial $P(z)$ in a tiny micro-region.

- It transitions into a pure sinusoid at larger scales.

By applying a Weighted Residue Theorem, the author proves that if the Lebesgue constant were too small, it would violate the fundamental relationship between a function's value at a point and the residues at its poles, which here sit at the roots.

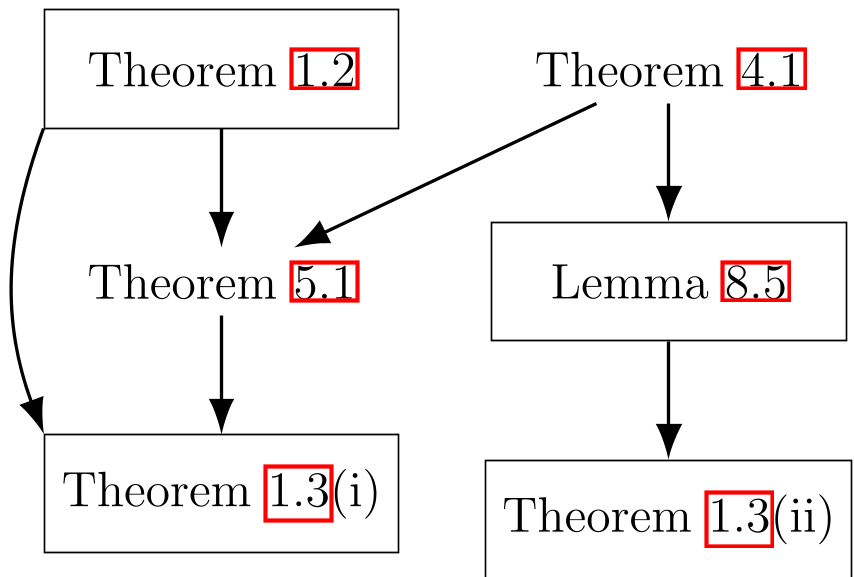

The complex web of dependencies: Local Bernstein theory (middle) acts as the engine to drive the main Lebesgue constant results from basic harmonic analysis.

Experiments & Results: Cracking the Conjectures

The paper focuses on the Lebesgue function $\lambda(x)$, which measures the stability of polynomial interpolation.
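
For concreteness, the standard definition is recalled here, in notation that is an assumption of this summary rather than the paper's. For nodes $x_1 < \dots < x_n$ in $[-1,1]$, $$\ell_k(x) = \prod_{j \neq k} \frac{x - x_j}{x_k - x_j}, \qquad \lambda(x) = \sum_{k=1}^{n} |\ell_k(x)|.$$ If the data values are perturbed by at most $\varepsilon$, the interpolant at $x$ moves by at most $\varepsilon \lambda(x)$. The Lebesgue constant is $\sup_{x \in [-1,1]} \lambda(x)$.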

1. The Sup-Norm Bound

Erdős conjectured that even on a tiny subinterval $I$, the maximum instability must grow at least like $\frac{2}{\pi} \log n$. Tao proves: $$\sup_{x \in I} \lambda(x) \geq \frac{2}{\pi} \log n - O(1)$$ This matches the leading term of the global bound from the 1960s, so large values of $\lambda$ cannot be confined to one part of the interval: every fixed subinterval contains points where interpolation is badly conditioned.

2. The Integral Bound

Addressing the "average" stability, the paper establishes: $$\int_I \lambda(x)\,dx \geq \frac{4|I|}{\pi^2} \log n - o(\log n)$$ This confirms that the Chebyshev nodes are not just a good choice; to leading order, they are asymptotically the best possible choice for minimizing the average of the Lebesgue function.
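
Both bounds can be explored numerically. The following is a minimal sketch, not code from the paper: it assumes $n$ Chebyshev nodes (the zeros of $T_n$), the subinterval $I = [-0.5, 0.5]$, and a plain trapezoid rule. The helper names `chebyshev_nodes`, `lebesgue_function`, and `sup_and_mean` are hypothetical. It compares the sampled supremum and mean of $\lambda$ on $I$ with the leading terms $\frac{2}{\pi} \log n$ and $\frac{4}{\pi^2} \log n$.

```python
import math


def chebyshev_nodes(n):
    """Zeros of the Chebyshev polynomial T_n on [-1, 1] (assumed node choice)."""
    return [math.cos((2 * k - 1) * math.pi / (2 * n)) for k in range(1, n + 1)]


def lebesgue_function(nodes, x):
    """lambda(x) = sum_k |ell_k(x)|, with ell_k the Lagrange basis on `nodes`."""
    total = 0.0
    for k, xk in enumerate(nodes):
        ell_k = 1.0
        for j, xj in enumerate(nodes):
            if j != k:
                ell_k *= (x - xj) / (xk - xj)
        total += abs(ell_k)
    return total


def sup_and_mean(nodes, a, b, samples=1001):
    """Sampled sup and trapezoid-rule mean of lambda on [a, b]."""
    h = (b - a) / (samples - 1)
    values = [lebesgue_function(nodes, a + i * h) for i in range(samples)]
    integral = h * (sum(values) - 0.5 * (values[0] + values[-1]))
    return max(values), integral / (b - a)


n = 20
nodes = chebyshev_nodes(n)
sup_I, mean_I = sup_and_mean(nodes, -0.5, 0.5)

# Leading terms of the two lower bounds (the O(1) and o(log n) errors are ignored).
sup_leading = (2 / math.pi) * math.log(n)
mean_leading = (4 / math.pi ** 2) * math.log(n)

print(f"sup  over I: {sup_I:.4f}   (2/pi) log n   = {sup_leading:.4f}")
print(f"mean over I: {mean_I:.4f}   (4/pi^2) log n = {mean_leading:.4f}")
```

Because the theorems are asymptotic, a single value of $n$ only illustrates the trend. Replacing `chebyshev_nodes` with another node family (for example equispaced points) shows how quickly the Lebesgue function can grow when the nodes are poorly chosen.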

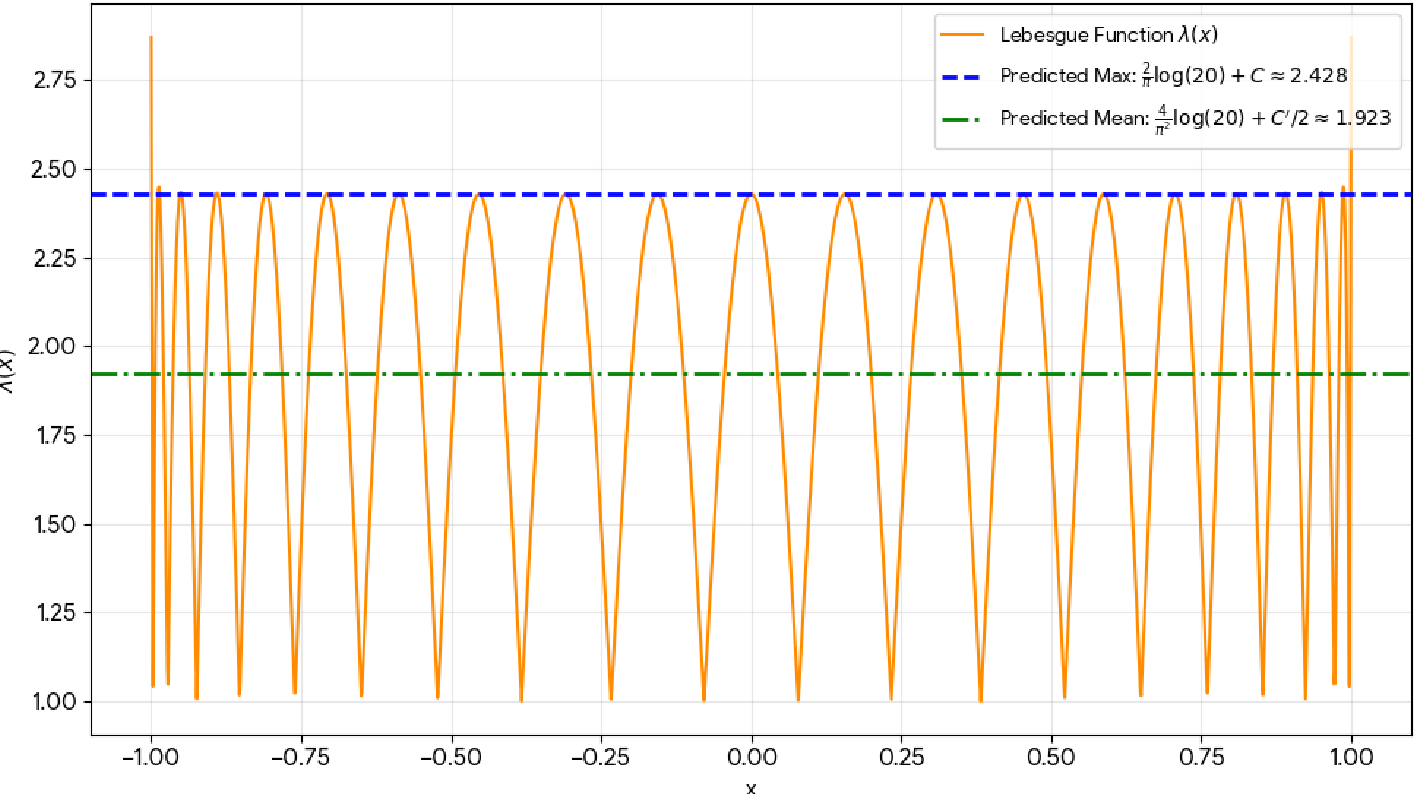

The Lebesgue function $\lambda(x)$ for $n=20$. The theory accurately predicts the peak values and the mean (indicated by the red and green dashed lines) across the interval.

Scientific Impact & AI Collaboration

Interestingly, Tao notes the use of AI tools like ChatGPT DeepResearch and Claude to identify obscure references and AlphaEvolve to discover proofs for the trigonometric toy models. This represents a modern shift in mathematical research: using LLMs to suggest "lemmas" which the human then rigorously verifies and integrates.

Conclusion

This paper is a masterclass in localization. By proving that high-degree polynomials are locally "Bernstein-like," Tao provides a general toolset that likely extends beyond interpolation theory into any field where complex oscillations on a real interval must be controlled.

Limitations

The error term in the integral bound is $o(\log n)$; sharpening it to $O(1)$ remains a target for future research.