This paper proposes a theoretical framework interpreting the generative process in diffusion models as out-of-equilibrium phase transitions. By utilizing analytically tractable patch models and Ginzburg-Landau field theory, the author demonstrates that architectural constraints like locality and translation equivariance transform simple memorization into the emergence of coherent, large-scale spatial patterns via "softening" Fourier modes.

TL;DR

Diffusion models don't just "denoise" linearly; they undergo a spontaneous symmetry-breaking phase transition. A new paper from Radboud University argues that architectural constraints (like convolutions) turn simple memorization into collective spatial modes. By identifying a "critical window" where the model is most "soft" and susceptible, we can control image generation with surgical precision using targeted guidance pulses.

Background: Beyond Simple Curve Fitting

In the standard view, sampling from a diffusion model is noisy gradient ascent on a log-density surface. But if you watch a diffusion model work, the image doesn't emerge uniformly. It starts as a vague "blob" and then, at a specific noise level, the structure suddenly "crystallizes."

The author, Luca Ambrogioni, posits that this isn't just an observation: it's physics. Specifically, it's an instance of an out-of-equilibrium phase transition, similar to how magnetic domains form in a cooling metal or how structure emerged in the early universe (the Kibble-Zurek mechanism).

The Core Insight: Architectural Constraints as Pattern Seeders

Why does a model generate a new cat instead of just repeating a training image? The paper argues that locality and translation equivariance (the hallmarks of ConvNets) are the keys.

- Memorization vs. Generalization: In an unconstrained model, the "instability" is a simple choice between training points (a Pitchfork Bifurcation).

- Spatial Extension: In a ConvNet, the interaction is local. This transforms a single choice into a softening of Fourier modes. Suddenly, the model isn't choosing between Image A or Image B; it's allowing many spatial frequencies to grow simultaneously, forming a "pattern" instead of a "memory."
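The pitchfork in the unconstrained case can be made concrete with a toy example that is an assumption of this summary, not a model taken from the paper: a "dataset" of two training points at $\pm 1$, corrupted by Gaussian noise of standard deviation `sigma`. The exact score is then $(\tanh(x/\sigma^2) - x)/\sigma^2$, and the origin switches from stable to unstable at $\sigma = 1$. A minimal sketch (all function names are hypothetical):

```python
import numpy as np

def two_point_score(x, sigma):
    """Exact score of the mixture 0.5*N(+1, sigma^2) + 0.5*N(-1, sigma^2)."""
    return (np.tanh(x / sigma**2) - x) / sigma**2

def score_slope_at_origin(sigma):
    """d(score)/dx at x = 0; a negative value means x = 0 is a stable fixed point."""
    return (1.0 / sigma**2 - 1.0) / sigma**2

def positive_branch(sigma, n_iter=500):
    """Solve x = tanh(x / sigma^2) by fixed-point iteration, starting from x = 1.

    Converges to ~0 above the bifurcation (sigma > 1) and to the positive
    symmetry-broken fixed point below it (sigma < 1).
    """
    x = 1.0
    for _ in range(n_iter):
        x = np.tanh(x / sigma**2)
    return float(x)

# One noise level above the bifurcation, one below it.
for sigma in (1.5, 0.5):
    print(sigma, score_slope_at_origin(sigma), positive_branch(sigma))
```

At high noise the only fixed point is the symmetric one at zero; at low noise the iteration lands near one of the two training points, which is the "choice between memories" described above.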

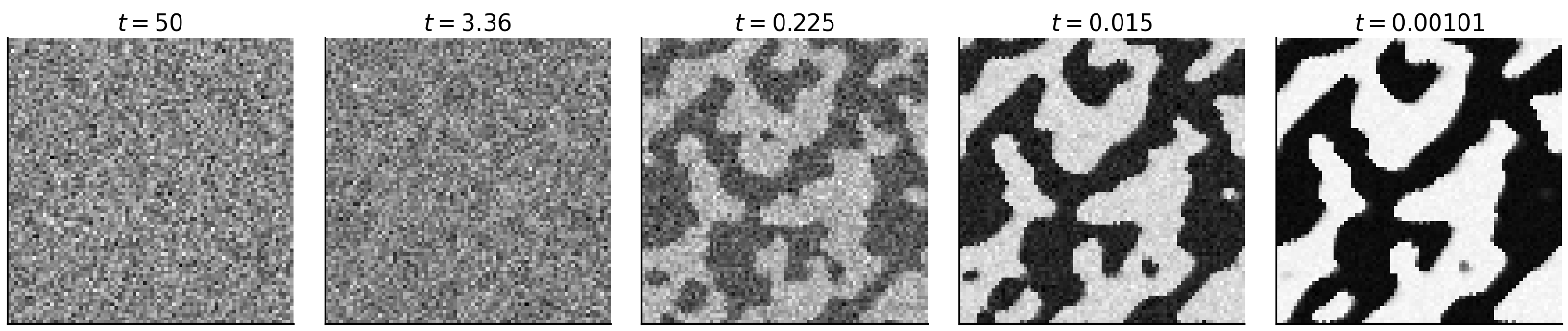

Figure 1: Snapshots of a reverse diffusion trajectory showing the transition from noise to coherent spatial domains.

Methodology: The Ginzburg-Landau Connection

Ambrogioni uses a Patch Score Model to show that beneath the neural network lies a Hamiltonian structure. By coarse-graining the lattice, he derives an effective field theory of the Ginzburg-Landau type:

$$ \mathcal{H}[\phi; t] = \int d^d r \left[ \frac{1}{2} r(t) \phi^2 + \frac{1}{2} \kappa(t) (\nabla \phi)^2 + \frac{u(t)}{4} \phi^4 \right] $$

When the coefficient $r(t)$ changes sign from positive to negative, the symmetric noise state $\phi = 0$ becomes unstable. This is the Critical Point. Near it, the "Correlation Length" ($\xi$) grows sharply (diverging in the idealized infinite-system limit), meaning distant pixels suddenly start talking to each other to decide what the image will become.
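The role of $r(t)$ can be illustrated under two assumptions that are not from the paper: purely relaxational dynamics $\partial_s \phi = -\delta \mathcal{H} / \delta \phi$, and a periodic 1-D lattice. Linearizing around $\phi = 0$ gives each Fourier mode with wavenumber $k$ the growth rate $-(r + \kappa k^2)$, and the standard mean-field correlation length on the symmetric side is $\xi = \sqrt{\kappa / r}$. A rough sketch with hypothetical function names:

```python
import numpy as np

def correlation_length(r, kappa):
    """Mean-field correlation length xi = sqrt(kappa / r) on the symmetric side (r > 0)."""
    if r <= 0:
        raise ValueError("defined here only on the symmetric side, r > 0")
    return float(np.sqrt(kappa / r))

def unstable_wavenumbers(r, kappa, n_sites):
    """Wavenumbers of a periodic 1-D lattice whose linear growth rate is positive.

    Assumes relaxational dynamics, so the growth rate of mode k is -(r + kappa * k**2).
    """
    k = 2.0 * np.pi * np.fft.fftfreq(n_sites)  # radians per lattice site
    growth_rate = -(r + kappa * k**2)
    return k[growth_rate > 0]

# xi grows as r approaches zero from above ...
for r in (1.0, 0.1, 0.01):
    print("r =", r, "xi =", correlation_length(r, kappa=1.0))

# ... and once r is negative, a band of long-wavelength modes softens.
for r in (-0.05, -0.2):
    print("r =", r, "unstable modes:", len(unstable_wavenumbers(r, 1.0, 64)))
```

In this picture no mode can grow while $r > 0$; once $r$ turns negative, every mode with $k^2 < -r/\kappa$ grows at once, and the band widens as $r$ decreases.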

Experimental Evidence: Targeted Guidance

The most striking evidence for this theory is the Guidance Pulse experiment. If the theory is right, there is a specific time ($t_c$) at which the model is most "undecided" (maximal susceptibility), so a small push applied then should have the largest effect on the final image.

The author tested this by applying Classifier-Free Guidance (CFG) only as a short pulse:

- Random Pulse: CFG applied at a random noise level.

- Critical Pulse: CFG applied only when the correlation length peaks.
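The pulse idea can be sketched as a guidance scale that is switched on only inside a time window. This is an illustration, not the paper's implementation: the function names, the window shape, the `half_width` parameter, and the "off" scale used outside the window are all assumptions. The combination rule is the usual CFG formula.

```python
import numpy as np

def cfg_combine(eps_uncond, eps_cond, scale):
    """Classifier-free guidance: eps_uncond + scale * (eps_cond - eps_uncond)."""
    return eps_uncond + scale * (eps_cond - eps_uncond)

def pulsed_scale(t, t_center, half_width, scale_on, scale_off=1.0):
    """Guidance scale that equals scale_on only for |t - t_center| <= half_width.

    scale_off is the value used outside the pulse (an assumption of this sketch).
    """
    return scale_on if abs(t - t_center) <= half_width else scale_off

# Hypothetical usage inside a sampling loop, with placeholder predictions.
eps_uncond = np.zeros(4)
eps_cond = np.ones(4)
for t in (0.9, 0.5, 0.1):
    w = pulsed_scale(t, t_center=0.5, half_width=0.05, scale_on=7.5)
    print(t, w, cfg_combine(eps_uncond, eps_cond, w))
```

A "critical pulse" would set `t_center` to an estimate of $t_c$, while a "random pulse" would draw `t_center` at random from the noise schedule.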

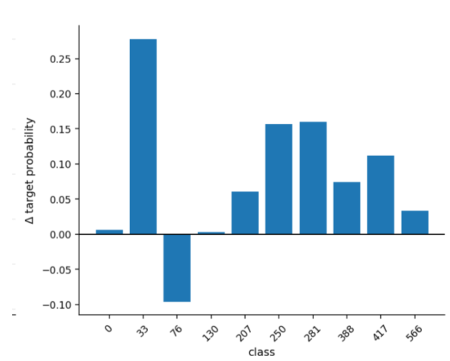

Figure 2: Applying guidance at the "critical time" results in significantly higher class alignment (DINOv2 scores) than random pulses.

The results were clear: pulses at the critical time had significantly more leverage over the final content than pulses at random times. This suggests that "more guidance" isn't always better; "better-timed guidance" is what actually matters.

Critical Analysis & Takeaways

This work elevates diffusion theory from "black-box optimization" to "statistical mechanics." It provides a diagnostic toolkit (mode spectra, correlation length proxies) that researchers can use to:

- Optimize Sampling: Spend more compute resources inside the critical window.

- Improve Control: Use the peak susceptibility to apply fine-grained style or content control.

- Understand Generalization: See how architectural choices directly influence the "universality class" of the patterns generated.
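As one illustration of what a correlation length proxy could look like, the sketch below estimates it from a single 2-D sample using the FFT-based circular autocorrelation (Wiener-Khinchin theorem). The $1/e$ threshold and the function name are assumptions of this summary; the paper may define its proxies differently.

```python
import numpy as np

def correlation_length_proxy(field):
    """First horizontal lag (in pixels) where the normalized autocorrelation drops below 1/e.

    Uses the circular autocorrelation obtained from the power spectrum.
    Returns the maximum lag examined if the threshold is never crossed.
    """
    x = field - field.mean()
    power = np.abs(np.fft.fft2(x)) ** 2
    acf = np.fft.ifft2(power).real                 # circular autocorrelation
    profile = acf[0, : field.shape[1] // 2] / acf[0, 0]
    below = np.nonzero(profile < np.exp(-1.0))[0]
    return int(below[0]) if below.size else int(profile.size)

# Compare white noise with a smoothed copy of the same noise.
rng = np.random.default_rng(0)
noise = rng.standard_normal((128, 128))
k = 2.0 * np.pi * np.fft.fftfreq(128)
k2 = k[None, :] ** 2 + k[:, None] ** 2
smooth = np.fft.ifft2(np.fft.fft2(noise) * np.exp(-0.5 * k2 * 4.0**2)).real
print(correlation_length_proxy(noise), correlation_length_proxy(smooth))
```

Tracking such a proxy on intermediate samples along the reverse trajectory is one plausible way to locate the critical window empirically.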

Limitations: The notion of a "thermodynamic limit" for neural networks is not yet sharply defined, and for non-convolutional models (like pure Transformers/ViT), the locality argument might need to be replaced with "Attention-induced" long-range glassy dynamics.

Future Outlook

This paper sets the stage for a new generation of "Physics-Aware Samplers" that don't just follow a fixed schedule, but react to the internal "criticality" of the denoising process in real time.