PhyGenesis is a physics-aware driving world model designed for high-fidelity, physically consistent multi-view video generation. It uses a two-stage pipeline, a Physical Condition Generator followed by a Physics-enhanced Video Generator, to achieve state-of-the-art performance in simulating complex, safety-critical driving scenarios such as collisions and off-road departures.

TL;DR

PhyGenesis is a breakthrough in autonomous driving simulation that addresses the "uncanny valley" of physical interactions. By integrating a Physical Condition Generator to rectify impossible trajectories and a Physics-enhanced Video Generator trained on a mix of real-world and CARLA-simulated crashes, it produces multi-view videos that honor the laws of physics even when the input commands don't.

Academic Standing: This work moves beyond "nominal" scene generation, where existing models already handle routine, sunny-day cruising well, into the realm of safety-critical world modeling, providing a crucial tool for closed-loop testing of end-to-end planners.

The Problem: Generative Models are Bad Physicists

Most current world models (e.g., GAIA-1, MagicDrive) act as "condition-to-pixel" translators. They work beautifully on the nuScenes dataset because nuScenes contains safe, professional driving. However, if a simulator or a faulty planner asks the model to "drive through a wall" or "collide with a truck," these models break down. Instead of a realistic collision, the vehicles might melt, overlap, or simply disappear.

This is due to two gaps:

- Feasibility Gap: Trajectories from planners often violate physical constraints, for example by placing two agents at overlapping 2D coordinates.

- Interaction Gap: Models haven't "seen" enough collisions or off-road departures to know how pixels should behave during impact.
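To make the Feasibility Gap concrete, here is a minimal sketch of how one might flag overlapping trajectories before they reach a video generator. The function name `find_penetrations`, the circular agent footprint, and the `radius` value are all assumptions made for illustration; this is not the paper's conflict test.

```python
from math import hypot

# Hypothetical helper: agents are approximated as circles of a fixed radius.
# This is an illustrative assumption, not the collision test used by PhyGenesis.
def find_penetrations(trajectories, radius=1.0):
    """Return (t, agent_a, agent_b) for every timestep where two agents overlap.

    trajectories: dict mapping agent name -> list of (x, y) points,
    one point per timestep, all lists the same length.
    """
    names = sorted(trajectories)
    horizon = len(trajectories[names[0]])
    conflicts = []
    for t in range(horizon):
        for i, a in enumerate(names):
            for b in names[i + 1:]:
                ax, ay = trajectories[a][t]
                bx, by = trajectories[b][t]
                # Two circles interpenetrate when their centres are closer
                # than the sum of their radii.
                if hypot(ax - bx, ay - by) < 2 * radius:
                    conflicts.append((t, a, b))
    return conflicts


# A planner output in which the ego vehicle ends up inside a truck.
planned = {
    "ego":   [(0.0, 0.0), (2.0, 0.0), (4.0, 0.0)],
    "truck": [(8.0, 0.0), (6.0, 0.0), (4.5, 0.0)],
}
conflicts = find_penetrations(planned)
print(conflicts)  # [(2, 'ego', 'truck')]
```

A conditioned generator that receives the final timestep as-is has no physically valid frame to render, which is where the "melting" artifacts come from.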

Methodology: The PhyGenesis Blueprint

PhyGenesis solves this by splitting the hallucination process into two distinct, physics-grounded stages.

1. Physical Condition Generator (The "Physical Brain")

Before any pixels are drawn, this module takes raw 2D trajectories ($T_{orig}$) and rectifies them.

- Architecture: It uses Spatial Cross-Attention to ground agents in the current scene and Agent Self-Attention to detect potential collisions (penetration conflicts).

- Output: It converts 2D points into a 6-DoF state (x, y, z, roll, pitch, yaw). This is vital because a car's "pitch" during a sudden brake or "roll" during a collision cannot be captured in 2D.

- Time-Wise Head: Unlike standard MLPs that smooth out motion, PhyGenesis uses a head based on a temporal convolutional network (TCN) to capture the "impulse", the sudden velocity drop, of an impact.
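The output format and the intuition behind the time-wise head can be sketched in a few lines. Everything here is hypothetical: `State6DoF`, `lift_to_6dof`, and `causal_conv1d` are illustrative names, the lift simply zeroes z, roll, and pitch (the real module predicts them), and the kernel is hand-set rather than learned as it would be in a trained TCN.

```python
from dataclasses import dataclass
from math import atan2


@dataclass
class State6DoF:
    """One agent pose: position plus orientation (angles in radians)."""
    x: float
    y: float
    z: float
    roll: float
    pitch: float
    yaw: float


def lift_to_6dof(points):
    """Naive 2D -> 6-DoF lift for at least two waypoints.

    Yaw comes from the heading between waypoints; z, roll and pitch are
    placeholders set to zero (a learned model would predict them).
    """
    states = []
    for i, (x, y) in enumerate(points):
        # Heading toward the next waypoint; the last point reuses the
        # heading of the final segment.
        j = min(i, len(points) - 2)
        dx = points[j + 1][0] - points[j][0]
        dy = points[j + 1][1] - points[j][1]
        states.append(State6DoF(x, y, 0.0, 0.0, 0.0, atan2(dy, dx)))
    return states


def causal_conv1d(signal, kernel):
    """1D causal convolution (cross-correlation form, as in deep learning).

    output[t] depends only on signal[t-k+1 .. t]; the past is padded by
    repeating the first sample.
    """
    k = len(kernel)
    padded = [signal[0]] * (k - 1) + list(signal)
    return [sum(w * padded[t + i] for i, w in enumerate(kernel))
            for t in range(len(signal))]


states = lift_to_6dof([(0.0, 0.0), (1.0, 0.0), (1.0, 1.0)])

# A speed profile with an abrupt drop at t=3, as in an impact.
speeds = [10.0, 10.0, 10.0, 2.0, 2.0]
impulse = causal_conv1d(speeds, kernel=[-1.0, 1.0])
print(impulse)  # [0.0, 0.0, 0.0, -8.0, 0.0]
```

The difference kernel responds only at the step where the speed changes, which is the kind of localized temporal feature a convolutional head can represent directly.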

2. Heterogeneous Data: Learning from Failure

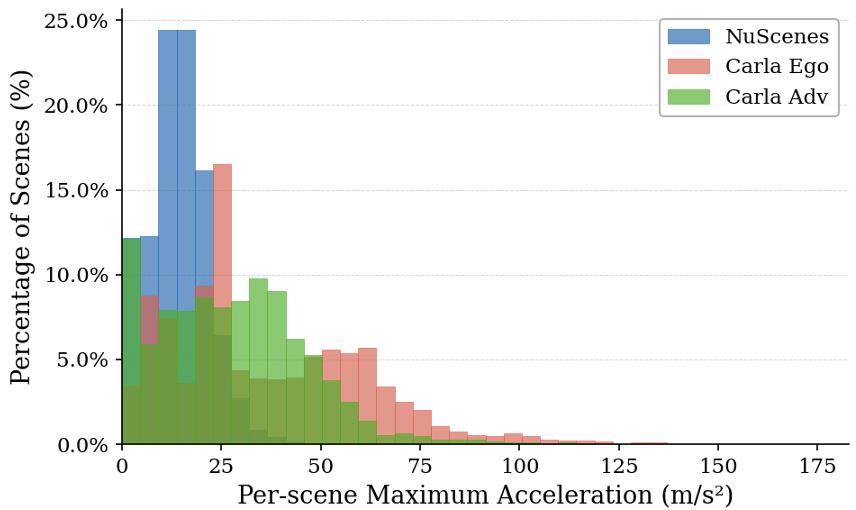

You cannot learn physical consistency from safe driving alone. The authors curated 31 hours of CARLA data, specifically inducing:

- Ego-failures: Off-road departures and wall collisions.

- Adversarial events: Nearby agents causing chaotic interactions.

By co-training on nuScenes (for visual realism) and CARLA (for physical dynamics), the model acquires an inductive bias for how objects interact.
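One simple way to picture co-training is a batch sampler that draws from both sources at a fixed ratio. The sketch below is an assumption-laden illustration: `mixed_batches`, the `sim_fraction` parameter, and the clip identifiers are hypothetical, and the summary does not state the mixing ratio the authors actually used.

```python
import random


def mixed_batches(real_clips, sim_clips, batch_size, sim_fraction, steps, seed=0):
    """Yield batches mixing real and simulated clips at a fixed ratio.

    sim_fraction is a hypothetical knob; the true ratio is not given here.
    Each item is tagged with its source so a training loop could treat
    the two domains differently if needed.
    """
    rng = random.Random(seed)
    n_sim = round(batch_size * sim_fraction)
    n_real = batch_size - n_sim
    for _ in range(steps):
        batch = ([("real", c) for c in rng.choices(real_clips, k=n_real)] +
                 [("sim", c) for c in rng.choices(sim_clips, k=n_sim)])
        rng.shuffle(batch)  # avoid a fixed real-then-sim ordering
        yield batch


# Placeholder clip identifiers standing in for nuScenes and CARLA samples.
batches = list(mixed_batches(
    real_clips=["nusc_0", "nusc_1"],
    sim_clips=["carla_crash_0", "carla_offroad_0", "carla_crash_1"],
    batch_size=8, sim_fraction=0.25, steps=3,
))
```

Sampling with replacement keeps the ratio stable even when one source is much smaller than the other.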

Experimental Results: Surviving the "Stress Test"

The authors conducted a "nuScenes Stress Test," intentionally corrupting trajectories to force collisions. While baselines like DiST-4D and MagicDrive-V2 suffered from ghosting and artifacts, PhyGenesis maintained structural integrity.
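As a rough illustration of what "corrupting a trajectory to force a collision" can mean, the sketch below blends an ego path toward another agent so the two coincide at the final step. The function `force_collision` and the linear blending schedule are assumptions; the summary does not describe the authors' actual corruption procedure.

```python
def force_collision(ego_traj, target_traj):
    """Blend the ego path toward a target agent so they meet at the last step.

    Both trajectories are equal-length lists of (x, y) points with at
    least two timesteps. Hypothetical corruption scheme for illustration.
    """
    horizon = len(ego_traj)
    corrupted = []
    for t, ((ex, ey), (tx, ty)) in enumerate(zip(ego_traj, target_traj)):
        alpha = t / (horizon - 1)  # 0 at the first step, 1 at the last
        corrupted.append((ex + alpha * (tx - ex), ey + alpha * (ty - ey)))
    return corrupted


ego = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
parked = [(2.0, 3.0), (2.0, 3.0), (2.0, 3.0)]
corrupted = force_collision(ego, parked)
print(corrupted)  # [(0.0, 0.0), (1.5, 1.5), (2.0, 3.0)]
```

The first waypoint is untouched, so the corrupted command still starts from the observed scene and only drifts into conflict over time.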

| Metric | MagicDrive-V2 | DiST-4D | PhyGenesis (Ours) |
| :--- | :--- | :--- | :--- |
| FVD (CARLA Ego) ↓ | 207.64 | 197.57 | 72.48 |
| Physical Score (PHY) ↑ | 0.60 | 0.39 | 0.71 |
| Human Pref. ↑ | 0.06 | 0.10 | 0.71 |
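To put the FVD row in perspective, the relative reduction against each baseline can be computed directly from the reported numbers. This is plain arithmetic on the table values; `relative_reduction` is just a helper name chosen here.

```python
# FVD (CARLA Ego) values copied from the table; lower is better.
fvd = {"MagicDrive-V2": 207.64, "DiST-4D": 197.57, "PhyGenesis": 72.48}


def relative_reduction(baseline, ours):
    """Fraction by which `ours` lowers the metric relative to `baseline`."""
    return (baseline - ours) / baseline


reductions = {
    name: relative_reduction(value, fvd["PhyGenesis"])
    for name, value in fvd.items()
    if name != "PhyGenesis"
}
for name, r in reductions.items():
    print(f"{name}: {r:.1%}")
# MagicDrive-V2: 65.1%
# DiST-4D: 63.3%
```

In other words, PhyGenesis cuts FVD by roughly two thirds relative to either baseline on this split.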

Figure 5: Notice how prior methods exhibit "melting" agents under collision conditions, whereas PhyGenesis maintains rigid body constraints.

Ablation Insight: Why Both Modules Matter

The ablation study shows that removing the Physical Condition Generator raises FVD from 72 to 116, an increase of roughly 60%. This indicates that even a powerful video generator cannot "fix" a physically impossible input command on its own.

Critical Analysis & Conclusion

Takeaway: PhyGenesis demonstrates that "Scaling Laws" aren't just about data volume, but data diversity in the physics domain. It effectively bridges the gap between high-fidelity simulators (CARLA) and photorealistic generative models.

Limitations:

- The model relies on a "Style Transfer" step to make CARLA data look like real-world footage, which might introduce its own set of visual biases.

- Generating 6-view 448x800 video is computationally expensive (trained on 48 H20 GPUs), making real-time interactive simulation a remaining challenge.

Future Work: We expect this "rectification-then-generation" paradigm to become standard in Physical AI, moving towards world models that can serve as "Digital Twins" for end-to-end autonomous driving validation.