The paper introduces a novel approach to predicting listener understanding states in explanatory dialogues using German GPT-2 and BERT. By quantifying cognitive load-related cues—specifically linguistic surprisal, syntactic complexity, and gaze entropy—the authors classify four distinct states of understanding: Understanding, Partial Understanding, Non-Understanding, and Misunderstanding.

TL;DR

Researchers from Bielefeld and Paderborn Universities have developed a method to predict whether a listener actually understands an explanation, based on the listener's gaze behavior and the complexity of the language they hear. By treating linguistic surprisal, syntactic complexity, and gaze entropy as indicators of cognitive load, they classify four states of understanding rather than relying on simple binary "yes/no" feedback.

Positioning: This work is a significant step in Social XAI, shifting the focus from the agent's explanation logic to the listener's internal cognitive state.

Problem & Motivation: The Gradient of Understanding

In everyday conversation, "understanding" isn't a toggle switch. We often find ourselves in the "Partial Understanding" zone or, worse, the "Misunderstanding" trap—where we think we know what's going on, but we don't.

Current AI systems struggle to detect these states because:

- The Subtle Signal: Listeners often give positive feedback (a nod, "mhm", "ja") even when confused, in order to maintain social flow.

- The Cognitive Gap: Understanding is an internal process. Prior work focused on what was said and ignored the Cognitive Load required to process the information.

The authors' key insight: if we can measure the effort the listener is making (via gaze and language complexity), we can infer the likely result of that effort.

Methodology: Operationalizing the "Mental Effort"

The study uses the MUNDEX corpus, a dataset of people explaining board games. The authors extracted three groups of features:

- Information Value (Surprisal): Using a German GPT-2 model, they estimated how unpredictable each word was in its context. Higher surprisal is taken to indicate higher cognitive effort.

- Syntactic Complexity: They measured the "depth" of the sentence trees. A sentence with many nested clauses (high tree depth) places more strain on working memory.

- Gaze Entropy: This is the "X-factor." By tracking gaze direction and calculating its unpredictability (entropy), they captured the "thinking face"—the moments where a listener looks away to process a difficult concept.
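The three feature groups above can be sketched in a few lines of Python. This is a minimal illustration and not the authors' pipeline: it assumes token probabilities have already been obtained from a language model, that a sentence is given as a list of dependency head indices, and that gaze is coded as discrete direction labels. The function names and the label values ("partner", "board") are hypothetical, and the paper may define gaze entropy differently (for example over gaze transitions).

```python
import math
from collections import Counter


def surprisal_bits(prob):
    """Surprisal of one token in bits: -log2 P(token | context)."""
    if not 0.0 < prob <= 1.0:
        raise ValueError("probability must be in (0, 1]")
    return -math.log2(prob)


def mean_surprisal(token_probs):
    """Average surprisal over the tokens of one utterance."""
    return sum(surprisal_bits(p) for p in token_probs) / len(token_probs)


def tree_depth(heads):
    """Depth of a dependency tree given as head indices (-1 marks the root).

    The depth of a token is the number of nodes on its path to the root,
    so a single-word sentence has depth 1.
    """
    def depth(i):
        d = 1
        while heads[i] != -1:
            i = heads[i]
            d += 1
        return d

    return max(depth(i) for i in range(len(heads)))


def gaze_entropy(labels):
    """Shannon entropy (bits) of the distribution of gaze direction labels."""
    counts = Counter(labels)
    n = len(labels)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


# Toy inputs, invented for illustration only.
probs = [0.5, 0.25]                            # P(token | context) per token
heads = [1, -1, 3, 1]                          # token 1 is the root
gaze = ["partner", "board", "partner", "board"]

print(mean_surprisal(probs))  # average bits per token
print(tree_depth(heads))      # longest root-to-token path, counted in nodes
print(gaze_entropy(gaze))     # 0 bits would mean a completely fixed gaze
```

In a real setup the probabilities would come from the language model's softmax output and the head indices from a dependency parser; both steps are omitted here to keep the sketch free of external dependencies.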

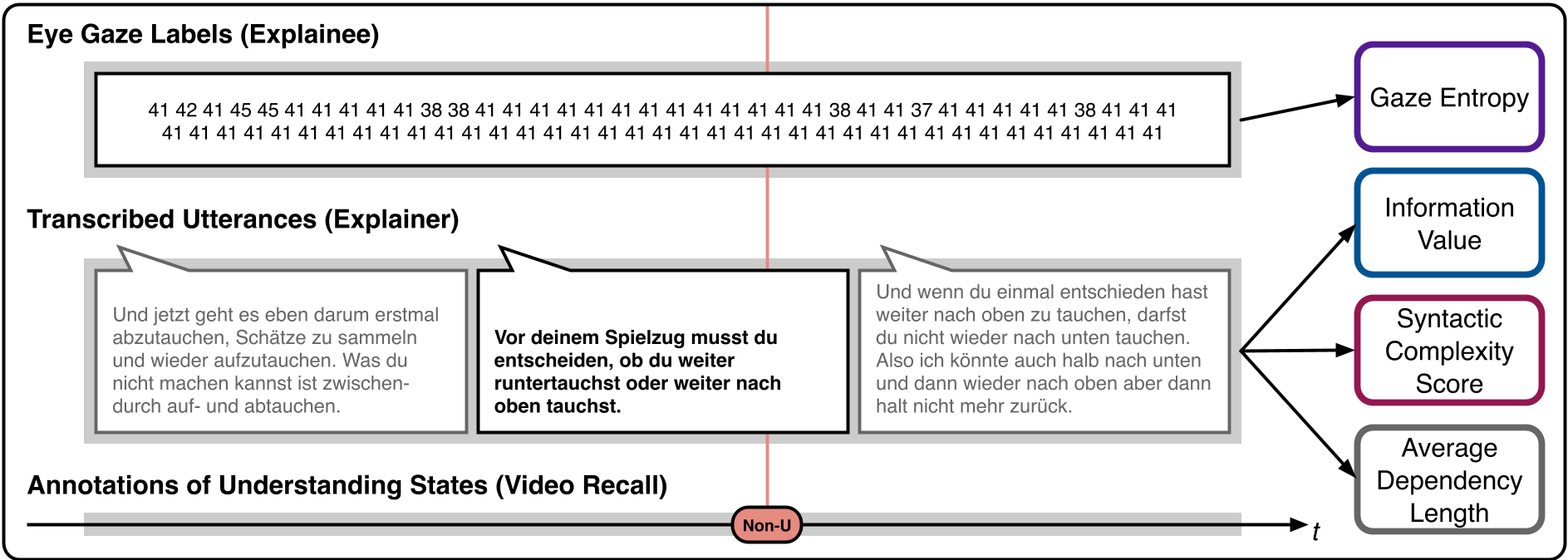

Figure 1: The quantification pipeline transforming raw dialogue and gaze data into cognitive load metrics.

These features were then fed into a German BERT model. Instead of just looking at the current sentence, the model looks at the context (preceding and succeeding utterances) to finalize its "guess" on the listener's state.
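One simple way to give a classifier access to surrounding utterances is to join each utterance with its neighbours before tokenization. The sketch below illustrates that idea only; the window sizes, the `[SEP]` separator string, and the function name `build_context_windows` are assumptions, since the summary does not say how the authors encode context.

```python
def build_context_windows(utterances, before=1, after=1, sep=" [SEP] "):
    """Join each utterance with up to `before` preceding and `after`
    succeeding utterances, clipping at the dialogue boundaries."""
    windows = []
    for i in range(len(utterances)):
        start = max(0, i - before)
        end = min(len(utterances), i + after + 1)
        windows.append(sep.join(utterances[start:end]))
    return windows


# Toy dialogue, invented for illustration only.
dialogue = ["Du ziehst zuerst eine Karte.", "mhm", "Dann legst du sie ab."]
for window in build_context_windows(dialogue):
    print(window)
```

Each resulting string could then be passed to a tokenizer together with the numeric cognitive load features for the target utterance.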

Experiments: More Than Just Text

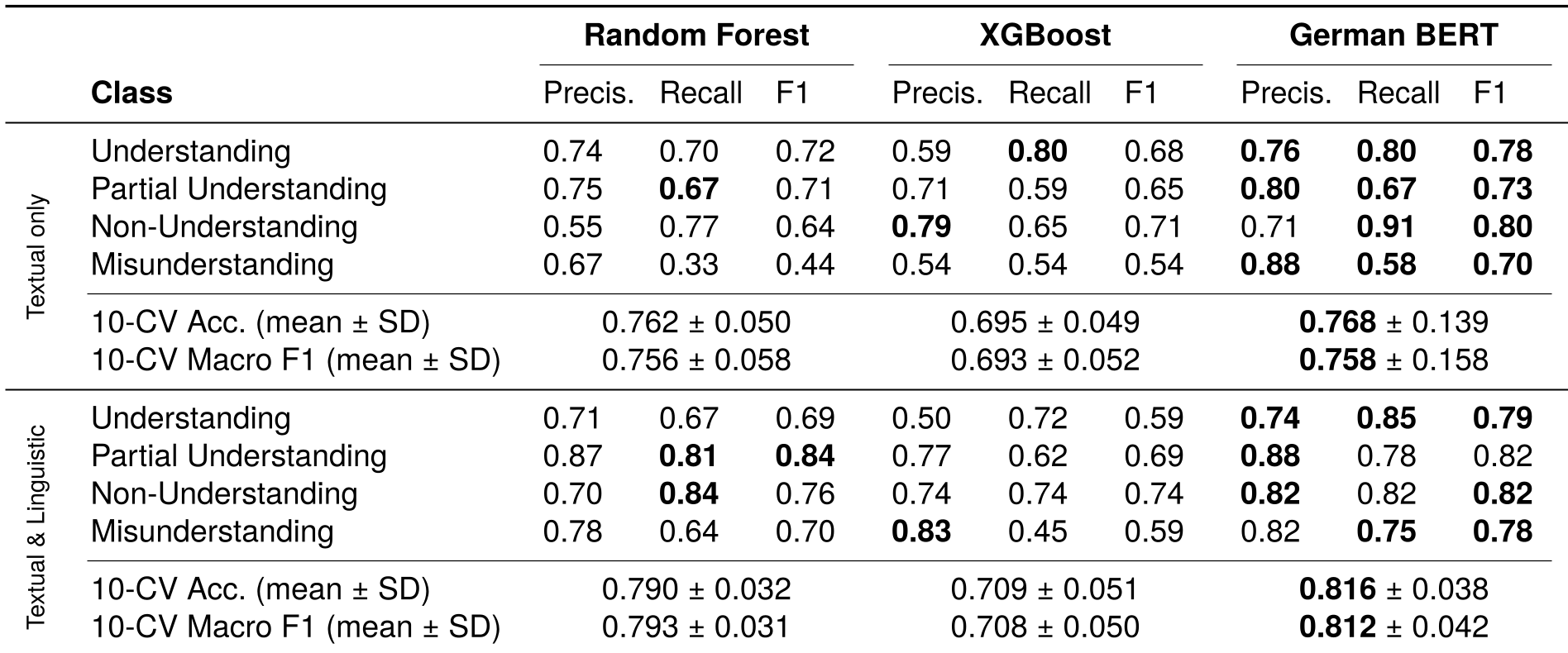

The researchers compared Random Forest, XGBoost, and a fine-tuned BERT model across two settings: Textual Only vs. Textual + Linguistic (Cognitive) Cues.

Key Findings:

- The "Cognitive Boost": In every classifier, adding Information Value, Gaze Entropy, and Syntactic Complexity improved the Macro F1 score. For BERT, performance rose from roughly 76% to over 81%.

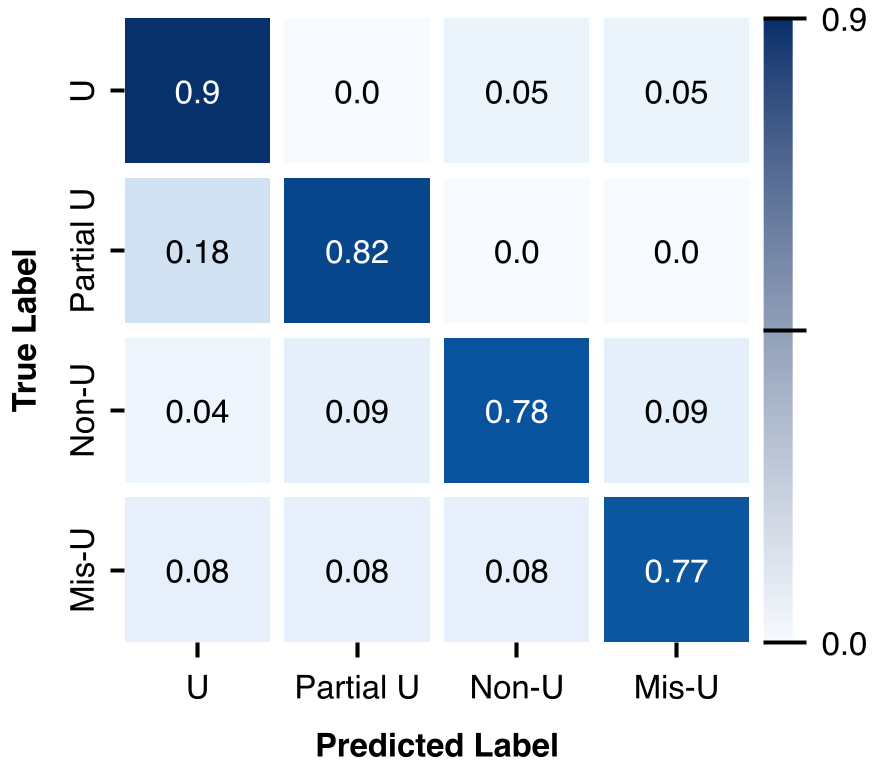

- Non-Understanding is Easy, Misunderstanding is Hard: The model is excellent at catching when a user is totally lost (F1: 0.82). However, "Misunderstanding" remains the "Holy Grail" of detection because the listener's behavior often mimics "Understanding" until the error is discovered much later.
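Because a hard, rare class such as "Misunderstanding" can be hidden by a good overall hit rate, it helps to see how Macro F1 differs from accuracy. The following sketch computes both from scratch on made-up labels; the data and the short label names "U" and "M" are purely illustrative and are not results from the paper.

```python
def per_class_f1(y_true, y_pred, label):
    """F1 score for one class, treating it as the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == label and p == label)
    fp = sum(1 for t, p in pairs if t != label and p == label)
    fn = sum(1 for t, p in pairs if t == label and p != label)
    if tp == 0:
        return 0.0  # no correct predictions for this class
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f1(y_true, y_pred, labels):
    """Unweighted mean of the per-class F1 scores."""
    return sum(per_class_f1(y_true, y_pred, l) for l in labels) / len(labels)


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)


# Made-up example: a model that never predicts the rare class "M".
y_true = ["U"] * 8 + ["M"] * 2
y_pred = ["U"] * 10

print(accuracy(y_true, y_pred))              # high, driven by the majority class
print(macro_f1(y_true, y_pred, ["U", "M"]))  # much lower, since "M" scores 0
```

Macro F1 weights every class equally, so a model that ignores a minority state is penalized even when most of its predictions are correct.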

Table 1: Performance comparison showing the BERT-based model outperforming off-the-shelf classifiers.

Critical Insight: Why Gaze Matters

The statistical analysis revealed a notable trend: higher gaze entropy (more varied, less predictable gaze behavior) was associated with higher levels of reported understanding.

This suggests that "active listening" isn't just staring blankly at the speaker. It involves a dynamic visual search—perhaps looking at the game board or shifting gaze to process information. When a listener "checks out" (Non-Understanding), their gaze patterns ironically become more static or restricted.

Conclusion & Future Look

The paper successfully bridges the gap between psycholinguistic theory (Surprisal Theory) and machine learning.

The Takeaway: If you are building a tutor robot or a complex support bot, you cannot rely on the user saying "I don't get it." You must monitor the predictability of your own language and the visual feedback of the user.

Limitations: The study is currently limited to German and a relatively small sample (21 dialogues). Future work will need to incorporate acoustic features (pitch, jitter) and broader cultural datasets to test whether these signals generalize.

Figure 2: Confusion matrix highlighting the challenge of distinguishing "Misunderstanding" from "Understanding".
