RealRestorer is a generalizable real-world image restoration model based on a large-scale Diffusion Transformer (DiT) architecture. By leveraging the Step1X-Edit backbone and a novel two-stage training strategy, it achieves state-of-the-art results among open-source methods across nine degradation tasks, narrowing the performance gap with top-tier closed-source models like Nano Banana Pro.

Executive Summary

TL;DR: RealRestorer is an open-source model built to handle the "messiness" of real-world image degradation, from motion blur and moiré patterns to lens flare and low light. By fine-tuning a large image editing model (Step1X-Edit) on a hybrid dataset of synthetic and real degraded images, the researchers have created a system that rivals elite closed-source models like Nano Banana Pro.

Background: Within the academic landscape, image restoration is shifting from "single-task, small-model" solutions to "all-in-one, foundation-model" approaches. RealRestorer positions itself as a state-of-the-art open-source alternative that emphasizes generalization over narrow synthetic performance.

The Problem: The Synthetic Trap

Most restoration models suffer from the "laboratory effect." They perform beautifully on datasets where noise or blur is mathematically synthesized (e.g., Gaussian blur), but crumble when faced with the irregular, multi-layered degradations of a real smartphone photo.

Current SOTA models are often:

- Closed-source: Models like GPT-Image-1.5 provide excellent results but offer zero transparency for researchers.

- Data-Stagnant: Relying on fixed synthetic distributions that don't match web-style artifacts.

Methodology: The Two-Stage Evolution

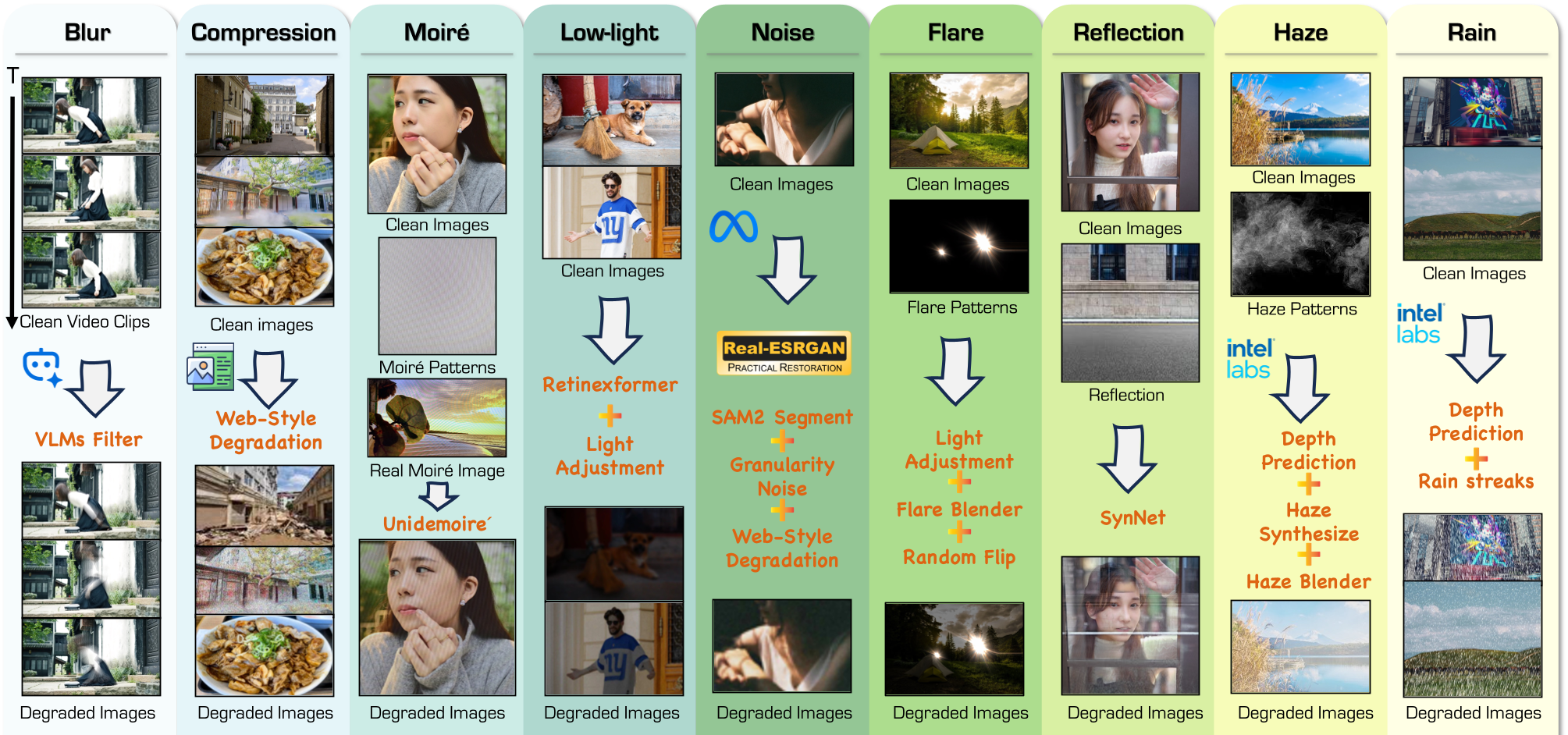

The core innovation of RealRestorer lies in its Data Construction Pipeline and Training Strategy. Instead of jumping straight into real-world restoration, the model is trained in two stages.

1. Synthetic Transfer Training

The model starts as a general image editor. In this stage, it learns the "physics" of degradation via 1.5 million synthetic pairs. This builds broad foundational knowledge of how to reconstruct pixels.
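As a rough illustration of what a synthetic training pair looks like, the sketch below degrades a clean grayscale image with a 3x3 box blur plus Gaussian noise and returns the (degraded, clean) pair. The function name `make_synthetic_pair` and its parameters are hypothetical, and this toy degradation is far simpler than the pipeline the authors describe; it only shows the pairing idea.

```python
import random


def make_synthetic_pair(clean, noise_sigma=10.0, seed=0):
    """Return (degraded, clean) for a grayscale image given as rows of 0-255 ints.

    Hypothetical toy degradation: 3x3 box blur followed by additive Gaussian noise.
    """
    rng = random.Random(seed)
    height, width = len(clean), len(clean[0])
    degraded = []
    for y in range(height):
        row = []
        for x in range(width):
            # Average the 3x3 neighborhood, clamping coordinates at the borders.
            neighbors = [
                clean[min(max(y + dy, 0), height - 1)][min(max(x + dx, 0), width - 1)]
                for dy in (-1, 0, 1)
                for dx in (-1, 0, 1)
            ]
            value = sum(neighbors) / 9 + rng.gauss(0, noise_sigma)
            # Clip back to the valid 8-bit range.
            row.append(int(min(max(round(value), 0), 255)))
        degraded.append(row)
    return degraded, clean
```

A model trained on such pairs takes the degraded image as input and the clean image as the target.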

2. Supervised Fine-Tuning (SFT) with Real Data

The authors collected 100k real-world degraded images from the web and generated "clean" targets using high-tier generative models. By using a Progressively-Mixed strategy (mixing 20% synthetic with 80% real data), they prevented the model from overfitting to specific real-world noise patterns while honing its ability to handle complex textures.
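The 20/80 mix can be pictured as a batch sampler that reserves a fixed share of each batch for synthetic pairs. This is a minimal sketch under assumptions: `mixed_batch` and its arguments are hypothetical names, and it uses a constant ratio, since this summary does not describe how the "progressive" part of the schedule changes over training.

```python
import random


def mixed_batch(synthetic, real, batch_size, synthetic_ratio=0.2, rng=None):
    """Build one batch with a fixed share of synthetic samples (assumed 20%)."""
    rng = rng or random.Random(0)
    n_synthetic = round(batch_size * synthetic_ratio)
    # Draw the synthetic share first, then fill the rest with real samples.
    batch = [rng.choice(synthetic) for _ in range(n_synthetic)]
    batch += [rng.choice(real) for _ in range(batch_size - n_synthetic)]
    # Shuffle so the two sources are interleaved within the batch.
    rng.shuffle(batch)
    return batch
```

With `batch_size=10`, each batch holds 2 synthetic and 8 real samples. Keeping some synthetic data in every batch is one simple way to keep the stage-one knowledge in play while the model adapts to real degradations.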

Figure: The synthetic degradation pipeline incorporating granular noise modeling and segment-aware perturbations.

RealIR-Bench: A New Yardstick

How do we measure success when there is no "ground truth" for a real-world blurry photo? The authors introduced RealIR-Bench and a VLM-based evaluation framework.

- Restoration Score (RS): Uses Qwen3-VL to rate image cleanliness on a scale of 1 to 5.

- Perceptual Consistency: Uses LPIPS to ensure the model doesn't "hallucinate" a different scene while fixing it.
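To make the two metrics concrete, the sketch below averages RS and LPIPS per task from a list of per-image records. The record layout and the `summarize` helper are assumptions made for illustration; the scores are taken as given (no VLM or LPIPS network is called), and the sketch does not attempt to reproduce how the benchmark combines the two numbers into a ranking.

```python
from collections import defaultdict
from statistics import mean


def summarize(records):
    """Average RS (higher is cleaner) and LPIPS (lower is more consistent) per task."""
    by_task = defaultdict(list)
    for record in records:
        by_task[record["task"]].append(record)
    return {
        task: {
            "rs": mean(r["rs"] for r in rows),
            "lpips": mean(r["lpips"] for r in rows),
        }
        for task, rows in by_task.items()
    }


# Hypothetical scores for two deblurring outputs and one low-light output.
scores = [
    {"task": "deblur", "rs": 4, "lpips": 0.25},
    {"task": "deblur", "rs": 5, "lpips": 0.75},
    {"task": "low_light", "rs": 3, "lpips": 0.5},
]
summary = summarize(scores)
```

Reading the two columns together matters: a high RS paired with a high LPIPS would indicate a clean image that has drifted away from the original scene.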

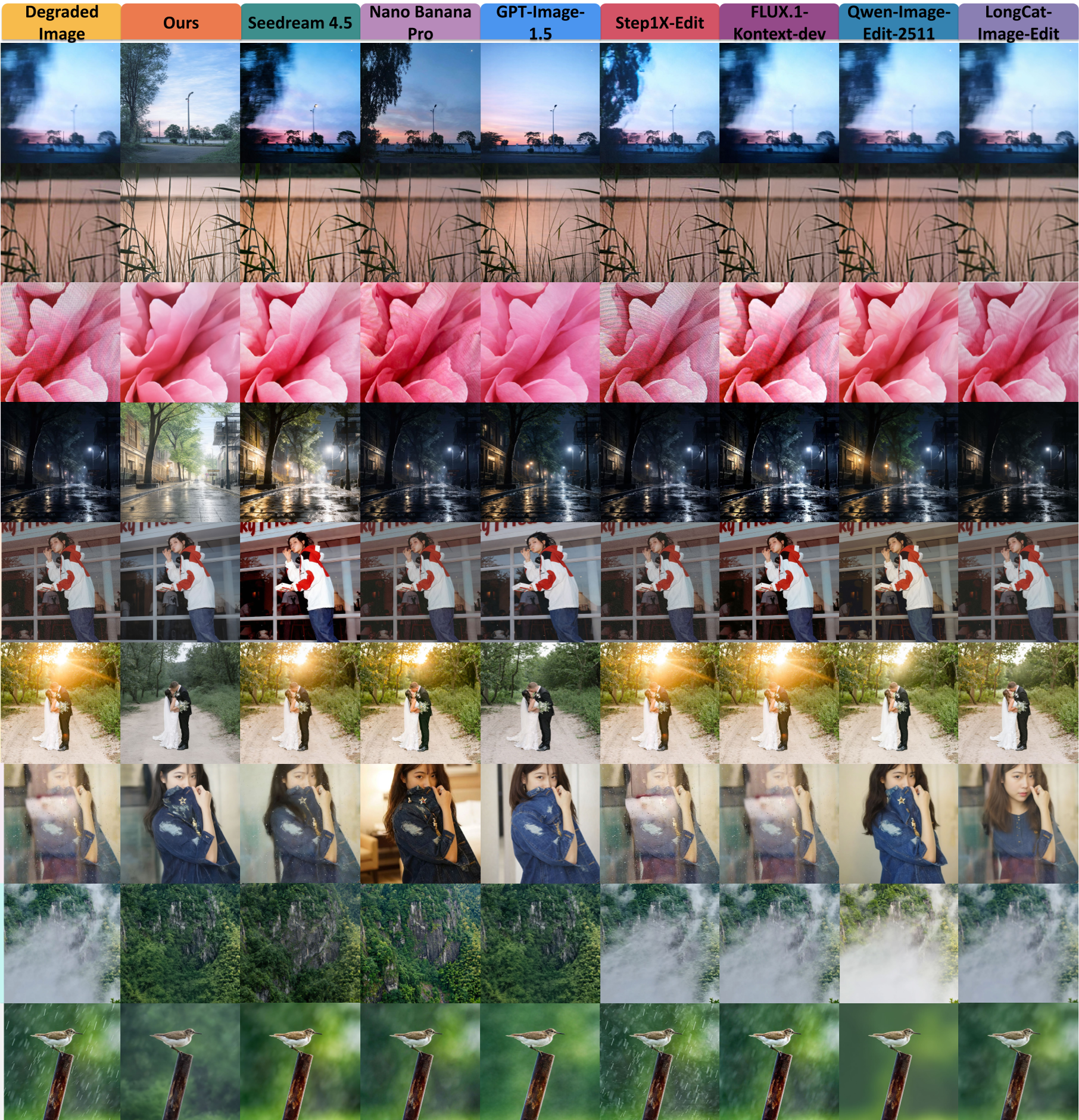

Figure: Qualitative comparison against top-tier models. RealRestorer maintains cleaner edges and better color fidelity.

Results & Performance

RealRestorer isn't just "good for an open-source model": in the reported comparison it competes directly with the best closed-source systems.

- Deblurring & Low-light: Ranked first among all compared models.

- Overall Rank: 3rd overall (including closed-source models), trailing Nano Banana Pro by only 0.007 points.

- Zero-shot: The model also generalizes to unseen tasks like snow removal and old photo restoration, which suggests that it has learned general image "priors" rather than memorizing individual tasks.

Critical Insight: Why it Works

The success of RealRestorer suggests that image restoration is essentially an image editing task. By treating "noise removal" as just another editing instruction, the model utilizes the massive semantic knowledge of the DiT backbone.

Limitations:

- Inference Cost: Being a large DiT model, it requires 28 denoising steps, making it slower than traditional CNN-based restorers.

- Severe Ambiguity: It can still struggle with physical reflections (like mirror selfies), where the model can't distinguish between the reflection and the subject.

Conclusion

RealRestorer marks a significant milestone for the open-source community. It shows that with the right data synthesis pipeline and a structured two-stage training approach, open-source models can substantially narrow the "quality gap" with proprietary systems.

Keep an eye on the Project Page for the released code and weights.