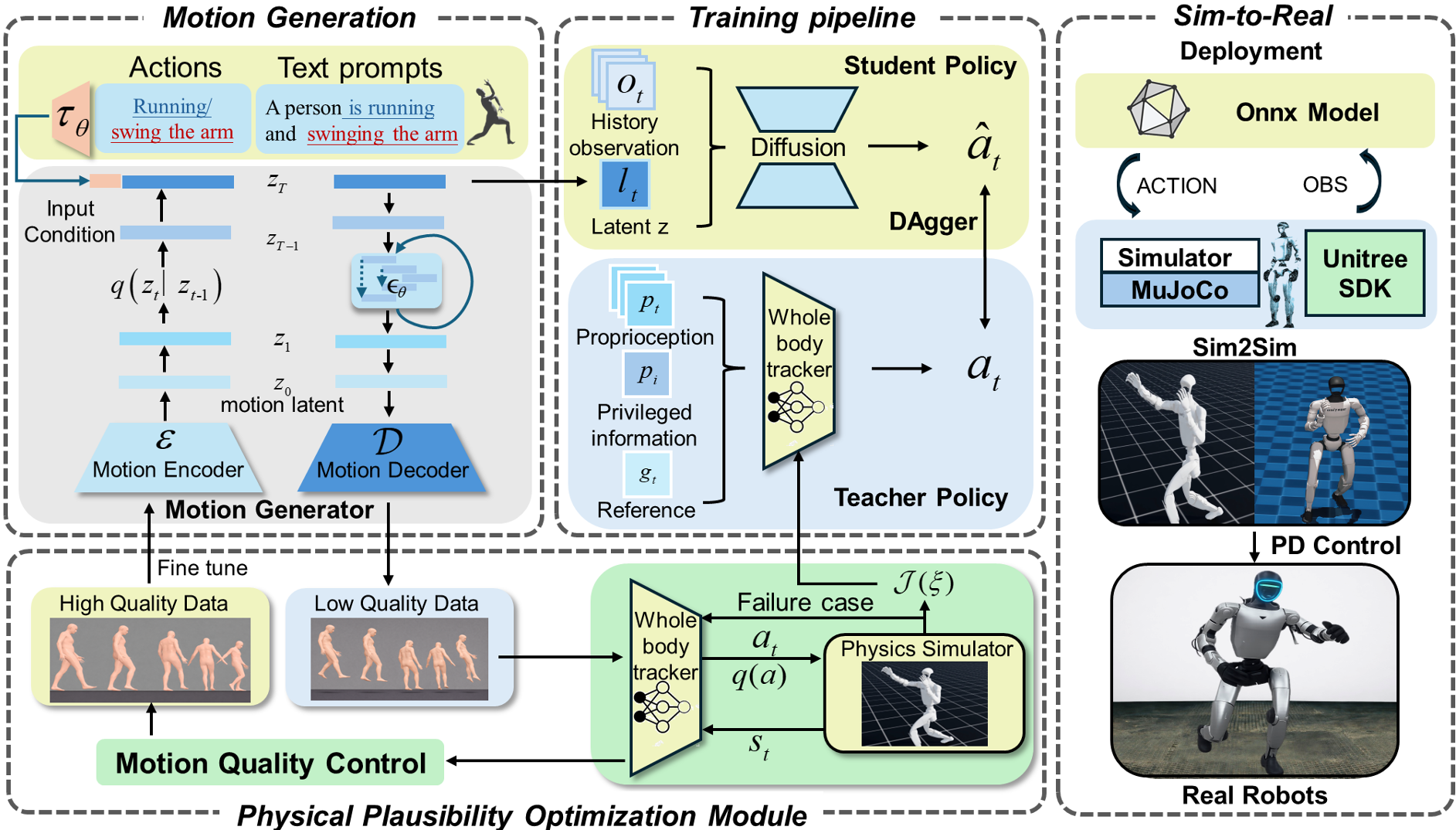

RoboForge is a unified latent-driven framework for text-to-motion humanoid locomotion that features a retarget-free pipeline. It introduces the Physical Plausibility Optimization (PP-Opt) module to bridge natural language descriptions and whole-body execution, achieving SOTA performance in physical realism on the Unitree G1 humanoid.

TL;DR

RoboForge is a framework that lets humanoid robots perform complex whole-body motions, such as throwing a javelin or executing martial arts, directly from text prompts. By eliminating the traditional motion-retargeting step and adding a bidirectional Physical Plausibility Optimization (PP-Opt) module, it aims to ensure that the "visual imagination" of diffusion models actually obeys the laws of physics.

Field Positioning: This work moves beyond "animation-style" motion generation into "execution-ready" robotics, establishing a new SOTA for stable, text-driven whole-body control.

The Problem: The "Broken Pipeline" of Humanoid Control

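Much of the text-to-motion literature treats robot motion like a movie: if it looks smooth, it is good. For a physical humanoid such as the Unitree G1, however, "looking smooth" is not enough, because every ground contact has to be physically consistent. Conventional pipelines follow a Decode → Retarget → Track sequence: a generator decodes a human-style motion, that motion is retargeted onto the robot's joints, and a controller tracks the retargeted trajectory.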

The failure points are systemic:

- Visual vs. Physical: Diffusion models often let feet "skate" or "sink" to make transitions look fluid.

- Retargeting Drift: Mapping human MoCap data to robot joints introduces small errors that are amplified at contact transitions (e.g., when a foot strikes the ground).

- Data Scarcity: Real-world dynamical data for robots is incredibly expensive to collect.
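The retargeting-drift point can be made concrete with a toy forward-kinematics calculation. The sketch below assumes a hypothetical planar two-link leg (the link lengths are illustrative, not Unitree G1 dimensions) and measures how far the foot moves when a retargeted hip or knee angle is off by one degree.

```python
import math

# Hypothetical planar leg; link lengths are illustrative, not Unitree G1 specs.
THIGH, SHANK = 0.30, 0.30  # metres


def foot_position(hip: float, knee: float) -> tuple[float, float]:
    """Foot (x, z) relative to the hip for a planar two-link leg.

    Angles are in radians measured from the downward vertical; the knee
    angle is relative to the thigh. z is negative below the hip.
    """
    x = THIGH * math.sin(hip) + SHANK * math.sin(hip + knee)
    z = -(THIGH * math.cos(hip) + SHANK * math.cos(hip + knee))
    return x, z


def foot_error_mm(hip: float, knee: float, d_hip: float, d_knee: float) -> float:
    """Foot displacement in millimetres caused by joint-angle errors (radians)."""
    x0, z0 = foot_position(hip, knee)
    x1, z1 = foot_position(hip + d_hip, knee + d_knee)
    return 1000.0 * math.hypot(x1 - x0, z1 - z0)


if __name__ == "__main__":
    hip, knee = 0.3, -0.6  # a slightly crouched stance pose, foot under the hip
    one_deg = math.radians(1.0)
    print(f"hip  +1 deg: {foot_error_mm(hip, knee, one_deg, 0.0):.1f} mm")
    print(f"knee +1 deg: {foot_error_mm(hip, knee, 0.0, one_deg):.1f} mm")
```

With these assumed lengths, a 1° hip error moves the foot by about 1 cm, mostly horizontally. For a foot that is supposed to stay planted, that is a centimetre-scale slip introduced before the tracker even runs, which is the class of error a retarget-free pipeline removes at its source.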

Methodology: Bidirectional Physical Plausibility (PP-Opt)

The core innovation of RoboForge is the PP-Opt module, which acts as a "physics filter" and "data generator" simultaneously.

1. The Architecture

Instead of outputting joint angles, the Motion Generator produces a Latent Representation, and the controller tracks this latent directly, so no explicit joint trajectory is ever decoded and retargeted.
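A minimal sketch of what "tracking the latent directly" could look like, assuming the tracker is an MLP policy that concatenates proprioception with the generator's latent and outputs joint position targets. All dimensions, the ELU network, and the names `act` and `init_mlp` are hypothetical; the weights are random, so this only illustrates the interface, not RoboForge's trained controller.

```python
import numpy as np

# Hypothetical sizes; the actual dimensions used by RoboForge are not given here.
PROPRIO_DIM = 45   # e.g. joint positions/velocities, base angular velocity, gravity
LATENT_DIM = 32    # motion latent emitted by the generator for this control step
NUM_JOINTS = 23    # actuated joints commanded by the policy

rng = np.random.default_rng(0)


def init_mlp(sizes):
    """Random (untrained) weights for a small MLP, for shape illustration only."""
    return [(rng.standard_normal((m, n)) / np.sqrt(m), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]


def mlp_forward(params, x):
    """ELU hidden layers followed by a linear output layer."""
    for i, (w, b) in enumerate(params):
        x = x @ w + b
        if i < len(params) - 1:
            x = np.where(x > 0, x, np.expm1(x))  # ELU activation
    return x


# Latent-conditioned tracker: the motion enters only through the latent,
# so there is no decoded joint trajectory and no retargeting step in between.
policy = init_mlp([PROPRIO_DIM + LATENT_DIM, 256, 128, NUM_JOINTS])


def act(proprio: np.ndarray, latent: np.ndarray) -> np.ndarray:
    """Return joint position targets for one control step."""
    return mlp_forward(policy, np.concatenate([proprio, latent], axis=-1))


action = act(np.zeros(PROPRIO_DIM), rng.standard_normal(LATENT_DIM))
print(action.shape)  # (23,)
```

The design point is the input signature: an explicit pipeline would feed the tracker retargeted joint angles, whereas here the only motion-specific input is the latent, so any human-to-robot kinematic mismatch has to be absorbed by training rather than by a hand-written mapping.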

2. The Feedback Loop

- Forward Direction (Control): The tracker is optimized in a physics simulator (IsaacLab/MuJoCo) using rewards that specifically penalize Foot Skating, Floating, and Ground Penetration.

- Backward Direction (Generation): The system takes the "physically successful" movements from the simulator and feeds them back into the Motion Generator as training data. This pushes the generator toward a latent space that represents physically feasible robot movements.
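The three forward-direction penalties can be sketched as a single per-step reward term. This is a minimal sketch under assumed definitions (skating as the squared horizontal velocity of feet in contact, floating as the lowest foot's height above a tolerance, penetration as depth below the ground plane) with hypothetical weights; the paper's exact reward formulation may differ.

```python
import numpy as np


def physics_penalty_reward(foot_z, foot_vel_xy, contact,
                           w_skate=1.0, w_float=1.0, w_pen=10.0,
                           float_tol=0.02):
    """Negative penalty for foot skating, floating and ground penetration.

    foot_z:      (F,) foot heights above the ground plane, in metres
    foot_vel_xy: (F, 2) horizontal foot velocities, in m/s
    contact:     (F,) bool, True where the foot is in ground contact
    The weights and float_tol are illustrative assumptions.
    """
    foot_z = np.asarray(foot_z, dtype=float)
    foot_vel_xy = np.asarray(foot_vel_xy, dtype=float)
    contact = np.asarray(contact, dtype=bool)

    # Skating: a foot in contact should not slide horizontally.
    skate = np.sum(np.sum(foot_vel_xy**2, axis=-1) * contact)
    # Floating: the lowest foot hovering above the ground beyond a tolerance.
    floating = max(0.0, float(foot_z.min()) - float_tol)
    # Penetration: total depth of feet below the ground plane.
    penetration = np.sum(np.clip(-foot_z, 0.0, None))

    return -(w_skate * skate + w_float * floating + w_pen * penetration)


# Planted stance foot, swing foot in the air: no penalty.
print(physics_penalty_reward([0.0, 0.05], [[0.0, 0.0], [0.3, 0.0]], [True, False]))
```

As written, the floating term would also penalize legitimate flight phases such as jumps; a practical reward would presumably gate it with the reference motion's contact schedule.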

Experiments: Precision and Stability

The authors compared RoboForge against traditional "Explicit" (decode-and-retarget) pipelines. Results from sim-to-sim transfer (IsaacLab to MuJoCo) indicate that the "Implicit" latent-driven method is more robust.

Key findings include:

- Success Rate: Increased from 0.63 to 0.71 in challenging sim-to-sim transfers.

- Physical Fidelity: Ground penetration was effectively zeroed out (0.000) after the second round of optimization.

- Multi-Cycle Gains: The model shows a form of "self-improvement": each additional round of simulation and feedback makes the generated "imagined" motions more physically plausible.
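The multi-cycle gains resemble filtered self-training: generate candidates, keep the ones that pass the physics check, refit the generator on them, and repeat. The toy below is a swapped-in illustration of that loop, not RoboForge's training procedure: the "generator" is a 1-D Gaussian, and the hypothetical `simulate` function stands in for the tracker succeeding or failing in simulation.

```python
import numpy as np

rng = np.random.default_rng(0)


def simulate(x: np.ndarray) -> np.ndarray:
    """Toy physics check: a motion parameter is feasible if |x| < 1."""
    return np.abs(x) < 1.0


def run_cycles(mu=2.0, sigma=1.5, n=2000, rounds=3):
    """Filtered self-training: sample, keep feasible samples, refit, repeat."""
    rates = []
    for _ in range(rounds):
        samples = rng.normal(mu, sigma, size=n)   # generator "imagines" motions
        ok = simulate(samples)                    # tracker succeeds or fails
        rates.append(float(ok.mean()))            # success rate for this round
        mu, sigma = samples[ok].mean(), samples[ok].std()  # refit on successes
    return rates


print(run_cycles())
```

In this toy the success rate rises sharply after the first refit, but `sigma` also shrinks every round, which is the diversity-collapse risk any generate-filter-retrain loop has to manage.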

Deep Insight: Why This Matters

The shift from Explicit Retargeting to Implicit Latent Guidance is the most significant takeaway. By letting the learned controller handle the mapping between "the idea of a kick" and "the motor commands required to execute it," RoboForge sidesteps the hand-engineered retargeting step and the errors it introduces, a long-standing source of fragility in humanoid motion pipelines.

Limitations & Future Work

While RoboForge excels at flat-ground locomotion, it currently excludes complex object interactions (like carrying boxes) and non-planar terrain. The next frontier will likely involve integrating visual perception into this latent-driven loop to allow robots to navigate rubble or climb stairs using the same text-guided logic.

Conclusion

RoboForge provides a robust blueprint for the future of humanoid intelligence. By coupling the creative power of Diffusion Models with the rigorous constraints of physics simulation, it creates a path for robots that not only understand our commands but can execute them with the grace and stability required for the real world.