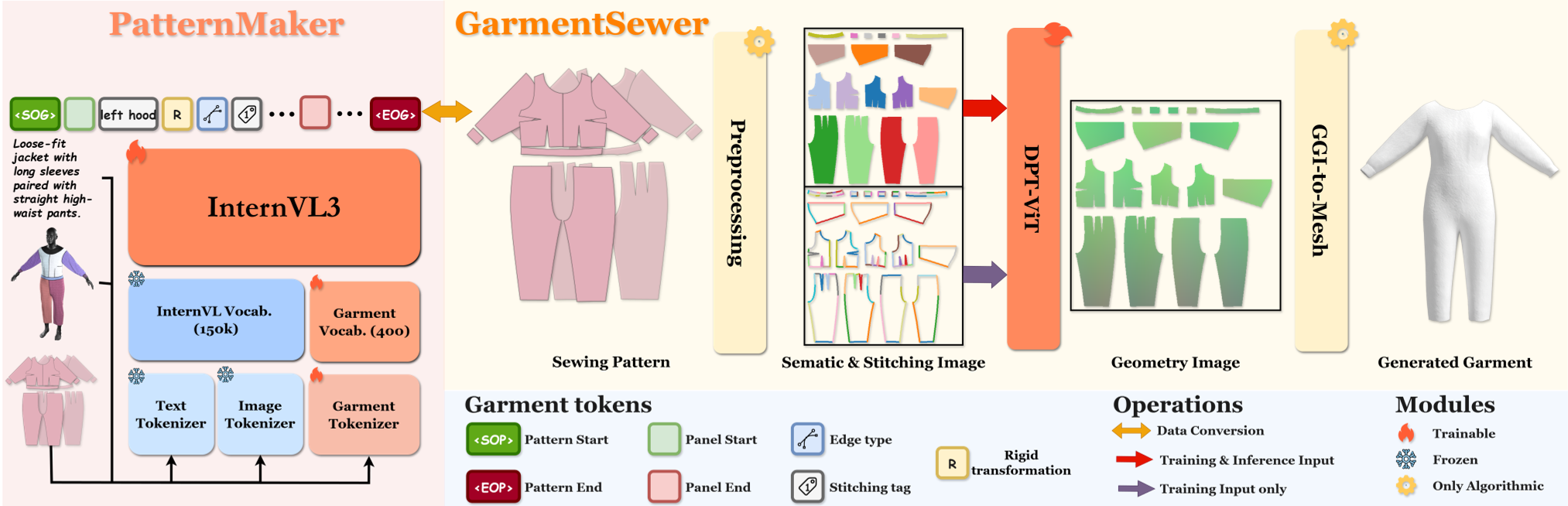

SwiftTailor is a two-stage 3D garment generation framework that utilizes a lightweight multimodal Large Language Model (InternVL-3-2B) and a Dense Prediction Transformer (DPT) to generate simulation-ready meshes from text or images. It introduces the Garment Geometry Image (GGI) to unify 2D sewing patterns with 3D surface geometry, achieving SOTA results on the GarmentCodeData benchmark.

TL;DR

Traditional digital fashion pipelines are bottlenecked by "sewing simulation"—the slow, iterative process of stitching 2D panels together into 3D shapes. SwiftTailor breaks this bottleneck by treating garment assembly as an image-to-image translation task. By introducing the Garment Geometry Image (GGI), the authors enable a lightweight transformer to "predict" the 3D mesh directly from 2D patterns in milliseconds, achieving a 4x total system speedup while maintaining physical plausibility.
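
Why does a roughly 2000x faster Stage 2 yield only a 4x end-to-end gain? The arithmetic below rests on three assumptions. First, the reported 4x is computed as (t1 + t_sim) / (t1 + t_nn). Second, the Stage 1 (PatternMaker) time t1 is the same in both pipelines. Third, the Stage 2 timings are the ~40 s and 0.02 s reported in the experiments. The Stage 1 time is not given, so the sketch solves for the value these numbers would imply. Treat that value as an inference, not a measured figure.

```python
# Back-of-envelope: how a ~2000x Stage 2 speedup becomes a ~4x end-to-end speedup.
# ASSUMPTION: speedup = (t1 + t_sim) / (t1 + t_nn), with the same Stage 1 time t1
# in both pipelines. t1 is not reported here, so we solve for the implied value.

T_SIM = 40.0          # Stage 2 with a physics engine, seconds (~40 s reported)
T_NN = 0.02           # Stage 2 with GarmentSewer, seconds
TOTAL_SPEEDUP = 4.0   # reported end-to-end speedup


def implied_stage1_time(t_sim: float, t_nn: float, speedup: float) -> float:
    """Solve (t1 + t_sim) / (t1 + t_nn) = speedup for t1."""
    return (t_sim - speedup * t_nn) / (speedup - 1.0)


def end_to_end_speedup(t1: float, t_sim: float, t_nn: float) -> float:
    """Ratio of total latency with simulation vs. with the neural sewer."""
    return (t1 + t_sim) / (t1 + t_nn)


t1 = implied_stage1_time(T_SIM, T_NN, TOTAL_SPEEDUP)
print(f"Stage 2 speedup: {T_SIM / T_NN:.0f}x")
print(f"Implied Stage 1 time: {t1:.1f} s")
print(f"End-to-end check: {end_to_end_speedup(t1, T_SIM, T_NN):.2f}x")
```

Under these assumptions the implied Stage 1 time is about 13 s. Once sewing is nearly free, the LLM stage dominates the remaining latency (Amdahl's law), so further end-to-end gains would have to come from PatternMaker rather than GarmentSewer.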

The "Simulation Bottleneck" in Digital Fashion

In 3D garment modeling, physically faithful results come from mirroring the real-world manufacturing process: first design 2D templates (sewing patterns), then sew them together. However, current SOTA methods such as AIpparel and ChatGarment rely on physics engines (e.g., NVIDIA Warp) to perform this "sewing."

This approach has two fatal flaws:

- Efficiency: Iterative solvers (XPBD/IPC) take 30-60 seconds per garment.

- Robustness: If the 2D pattern is slightly off, the simulation "explodes" or tangles, leading to unusable 3D meshes.

Methodology: From Reasoning to Synthesis

SwiftTailor addresses this with an elegant two-stage pipeline:

1. PatternMaker (The Brain)

Instead of a 7B-parameter model, the authors use the much smaller InternVL-3-2B. Through a dedicated tokenization of panel vertices and rigid transformations, this smaller model reasons about garment structure better than its larger predecessors. The likely reason is a more focused training objective on the Multimodal GarmentCodeData.
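
The paper describes a dedicated tokenization of panel vertices and rigid transformations but does not give its exact format here. A common way to let an LLM emit continuous geometry is to quantize each value into a fixed number of bins and assign each bin its own token. The sketch below shows that idea. Everything in it is a hypothetical illustration rather than SwiftTailor's actual vocabulary. This includes the `Panel` fields, the `<vN>`/`<aN>` token names, the 256 bins, and the value ranges.

```python
from dataclasses import dataclass

# HYPOTHETICAL scheme: bin count, ranges and token names are illustrative only.
N_BINS = 256
COORD_RANGE = (-100.0, 100.0)   # vertex / translation values, assumed in cm
ANGLE_RANGE = (-180.0, 180.0)   # Euler angles, degrees


@dataclass
class Panel:
    name: str
    vertices: list[tuple[float, float]]       # 2D outline in panel space
    rotation: tuple[float, float, float]      # rigid rotation (Euler angles)
    translation: tuple[float, float, float]   # rigid 3D placement


def quantize(x: float, lo: float, hi: float, n_bins: int = N_BINS) -> int:
    """Clamp x to [lo, hi] and map it to an integer bin in [0, n_bins - 1]."""
    x = min(max(x, lo), hi)
    return min(int((x - lo) / (hi - lo) * n_bins), n_bins - 1)


def dequantize(b: int, lo: float, hi: float, n_bins: int = N_BINS) -> float:
    """Return the center of bin b (error is at most half a bin width)."""
    return lo + (b + 0.5) * (hi - lo) / n_bins


def tokenize_panel(p: Panel) -> list[str]:
    """Flatten one panel into a token sequence an LLM could be trained to emit."""
    toks = [f"<panel:{p.name}>"]
    for x, y in p.vertices:
        toks += [f"<v{quantize(x, *COORD_RANGE)}>", f"<v{quantize(y, *COORD_RANGE)}>"]
    toks.append("<rot>")
    toks += [f"<a{quantize(a, *ANGLE_RANGE)}>" for a in p.rotation]
    toks.append("<trans>")
    toks += [f"<v{quantize(t, *COORD_RANGE)}>" for t in p.translation]
    toks.append("</panel>")
    return toks


# Example: a 50 x 60 cm rectangular front panel placed in front of the body.
front = Panel("front", [(0, 0), (50, 0), (50, 60), (0, 60)], (0, 0, 0), (0, -20, 15))
print(tokenize_panel(front))
```

With 256 bins over a 200 cm range, each bin is about 0.78 cm wide. Decoding to bin centers therefore recovers every coordinate to within roughly 0.39 cm. The bin count trades vocabulary size against geometric precision.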

2. GarmentSewer & GGI (The Muscle)

The core innovation is the Garment Geometry Image (GGI). Think of it as a "smart UV map." It doesn't just store color; it stores:

- Semantic Channel: What part is this (sleeve, torso)?

- Geometry Channel: What are the 3D XYZ coordinates of this pixel?

- Stitching Channel: Which edges connect to which?
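
To make the three channels concrete, here is one possible in-memory layout for a GGI. Several details are assumptions for illustration, and the paper's actual encoding may differ:

- the split into separate arrays,
- the `-1` convention for background and non-seam pixels,
- representing the stitching channel as a per-pixel stitch-pair ID.

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class GarmentGeometryImage:
    """HYPOTHETICAL GGI container; channel split and conventions are assumptions."""
    semantic: np.ndarray   # (H, W) int: panel label per pixel, -1 = background
    geometry: np.ndarray   # (H, W, 3) float: 3D XYZ of the surface point at this pixel
    stitch: np.ndarray     # (H, W) int: stitch-pair ID for seam pixels, -1 = not a seam

    @classmethod
    def empty(cls, h: int, w: int) -> "GarmentGeometryImage":
        return cls(
            semantic=np.full((h, w), -1, dtype=np.int32),
            geometry=np.zeros((h, w, 3), dtype=np.float32),
            stitch=np.full((h, w), -1, dtype=np.int32),
        )

    def surface_points(self) -> np.ndarray:
        """All 3D points covered by some panel, shape (N, 3)."""
        return self.geometry[self.semantic >= 0]

    def seam_groups(self) -> dict[int, np.ndarray]:
        """Group 3D points by stitch ID; each group should coincide once sewn."""
        ids = np.unique(self.stitch[self.stitch >= 0])
        return {int(i): self.geometry[self.stitch == i] for i in ids}


# Example: two 4x3-pixel panels side by side, sewn at one pair of edge pixels.
ggi = GarmentGeometryImage.empty(4, 6)
ggi.semantic[:, :3] = 0                    # e.g. front torso panel
ggi.semantic[:, 3:] = 1                    # e.g. back torso panel
ggi.stitch[0, 2] = ggi.stitch[0, 3] = 7    # edge pixels of the two panels, sewn together
```

Every pixel carries both its panel label and its 3D position. The 2D pattern and the 3D surface therefore share one aligned grid, which is what allows an image-to-image network to predict one from the other.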

The GarmentSewer (a Dense Prediction Transformer) simply maps the 2D layout to this dense 3D coordinate space. No gravity, no collision loops—just a single feed-forward pass.
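
Once the network has filled in the geometry channel, turning the image into a mesh needs no simulation, because neighboring pixels become neighboring vertices. The sketch below uses the classic geometry-image triangulation, with two triangles per 2×2 block of pixels that all belong to the same panel. Whether SwiftTailor builds its mesh exactly this way is an assumption. The `-1` background label is also assumed. Welding seam vertices with the stitching channel is omitted.

```python
import numpy as np


def ggi_to_mesh(semantic: np.ndarray, geometry: np.ndarray):
    """Triangulate a geometry image.

    semantic: (H, W) int panel labels, -1 = background (assumed convention)
    geometry: (H, W, 3) predicted XYZ per pixel
    Returns vertices (V, 3) and faces (F, 3) as vertex indices.
    """
    h, w = semantic.shape
    covered = semantic >= 0
    # One vertex per covered pixel, numbered in row-major order.
    index = np.full((h, w), -1, dtype=np.int64)
    index[covered] = np.arange(covered.sum())
    vertices = geometry[covered]

    faces = []
    for r in range(h - 1):
        for c in range(w - 1):
            block = semantic[r:r + 2, c:c + 2]
            # Skip blocks touching background or straddling two panels.
            if block.min() < 0 or (block != block[0, 0]).any():
                continue
            v00, v01 = index[r, c], index[r, c + 1]
            v10, v11 = index[r + 1, c], index[r + 1, c + 1]
            faces.append((v00, v10, v01))
            faces.append((v01, v10, v11))
    return vertices, np.asarray(faces, dtype=np.int64).reshape(-1, 3)


# Example: a flat 3x4 grid standing in for predicted XYZ.
rr, cc = np.mgrid[0:3, 0:4]
xyz = np.stack([cc, rr, np.zeros_like(rr)], axis=-1).astype(float)
verts, faces = ggi_to_mesh(np.zeros((3, 4), dtype=int), xyz)
```

The cost is one pass over the pixels, which is why mesh construction from a predicted GGI is essentially free compared with iterative sewing.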

Experimental Battleground

The results show a clear shift in the Pareto front of speed vs. quality.

- Speed: Stage 2 inference (mesh construction) drops from ~40 seconds (physics engine) to 0.02 seconds (GarmentSewer).

- Fidelity: Quantitatively, SwiftTailor achieves a Minimum Matching Distance (MMD) of 5.31 (lower is better), well below the ~7.0 of previous methods.
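
For reference, this is MMD as it is commonly defined in the point-cloud generation literature. For each reference shape, find the closest generated shape under a set distance, usually Chamfer distance. Then average those minima over the reference set. The sketch uses squared-Euclidean Chamfer distance on small point clouds. The distance, sampling density, and scaling behind the reported 5.31 are not stated here, so values from this sketch are not comparable to it.

```python
import numpy as np


def chamfer_distance(p: np.ndarray, q: np.ndarray) -> float:
    """Symmetric Chamfer distance between point clouds p (N, 3) and q (M, 3):
    mean squared nearest-neighbour distance in each direction, summed."""
    d2 = ((p[:, None, :] - q[None, :, :]) ** 2).sum(axis=-1)   # (N, M) squared distances
    return float(d2.min(axis=1).mean() + d2.min(axis=0).mean())


def minimum_matching_distance(generated, reference) -> float:
    """MMD: for every reference shape, take the closest generated shape
    under Chamfer distance, then average over the reference set."""
    return float(np.mean([
        min(chamfer_distance(g, r) for g in generated) for r in reference
    ]))
```

MMD measures fidelity: every reference garment should have a close generated counterpart. It does not penalize a model that produces a few good shapes repeatedly, which is why it is usually reported alongside a coverage-style metric.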

As seen in the qualitative comparisons, SwiftTailor avoids the "tearing" and "tangling" common in physics-based initializations because the neural network learns to predict a globally coherent shape from the start.

Critical Insight: Why Does This Work?

The success of SwiftTailor hinges on inductive bias. By forcing the model to operate in the UV space of the sewing pattern (GGI), the network doesn't have to learn "how to sew" from scratch. It only needs to learn the non-linear mapping from 2D flat panels to their 3D draped positions. The "stitching loss" ensures that paired edges remain close in 3D space, effectively acting as a neural "glue."
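
The paper is summarized here as using a stitching loss that keeps paired edges close in 3D, without giving its exact form. One plausible form, assumed for this sketch, is the mean squared distance between corresponding seam samples read from the predicted geometry image. NumPy shows only the value. In training, the same expression would be written with `jax.numpy` or PyTorch tensors so that gradients flow back into the network. The argument names are hypothetical.

```python
import numpy as np


def stitching_loss(pred_xyz: np.ndarray, seam_a: np.ndarray, seam_b: np.ndarray) -> float:
    """ASSUMED form: mean squared 3D distance between sewn seam samples.

    pred_xyz: (H, W, 3) predicted geometry image
    seam_a, seam_b: (K, 2) integer (row, col) pixel coords; seam_a[k] is sewn to seam_b[k]
    """
    pa = pred_xyz[seam_a[:, 0], seam_a[:, 1]]   # (K, 3) points on one side of the seam
    pb = pred_xyz[seam_b[:, 0], seam_b[:, 1]]   # (K, 3) matching points on the other side
    return float(((pa - pb) ** 2).sum(axis=1).mean())
```

The loss is zero only when every sewn pair coincides in 3D. This matches the "glue" intuition: the network is free to place panels anywhere, as long as the seams close.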

Limitations & The Road Ahead

While SwiftTailor excels at global shape and speed, it currently produces smooth surfaces. It lacks the micro-wrinkles that define the "feel" of different fabrics (denim vs. silk).

The authors suggest that the future lies in Hybrid Pipelines: use SwiftTailor for the stable, ultra-fast global initialization, and then run a very light, 1-2 second physics "pass" to add the high-frequency wrinkles. This could finally make real-time virtual try-ons a reality for mobile devices.
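
The source does not specify which solver such a refinement pass would use. As an illustration, the sketch below swaps in position-based dynamics (PBD), the simpler predecessor of the XPBD solvers mentioned earlier. It is warm-started from the network's prediction and capped at a small, fixed budget of steps. The function and parameter names are hypothetical. Distance constraints plus gravity produce only sag and drape. The fabric-specific wrinkles the authors want would also need bending constraints and body/self-collision, which are omitted here.

```python
import numpy as np


def pbd_refine(x_init, edges, rest, pinned, n_steps=20, n_iters=10, dt=1 / 60,
               gravity=(0.0, -9.81, 0.0)):
    """HYPOTHETICAL light physics pass: a few PBD steps from a predicted mesh.

    x_init: (V, 3) vertex positions (e.g. the neural prediction)
    edges:  (E, 2) int vertex pairs; rest: (E,) rest lengths
    pinned: (V,) bool, vertices held fixed (e.g. resting on the body)
    """
    x = np.array(x_init, dtype=float)
    v = np.zeros_like(x)
    inv_m = np.where(pinned, 0.0, 1.0)       # unit mass; pinned = infinite mass
    g = np.asarray(gravity, dtype=float)
    for _ in range(n_steps):
        v[inv_m > 0] += dt * g               # external force on free vertices only
        p = x + dt * v                       # predicted positions
        for _ in range(n_iters):             # Gauss-Seidel constraint projection
            for (i, j), r in zip(edges, rest):
                w = inv_m[i] + inv_m[j]
                d = p[j] - p[i]
                dist = np.linalg.norm(d)
                if w == 0.0 or dist < 1e-12:
                    continue
                corr = (dist - r) / (w * dist) * d
                p[i] += inv_m[i] * corr      # move each end in proportion to inverse mass
                p[j] -= inv_m[j] * corr
        v = (p - x) / dt                     # velocities implied by the position change
        x = p
    return x
```

The design point is the warm start. The network output already approximately satisfies the seams and global shape, so the constraint violations the solver must remove are small. That is what makes a short, fixed iteration budget plausible.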

Conclusion

SwiftTailor is a significant step toward "Neural Simulation." It demonstrates that in highly structured domains like fashion design, explicit physics simulation can be replaced with specialized neural representations, yielding large efficiency gains without sacrificing manufacturability.